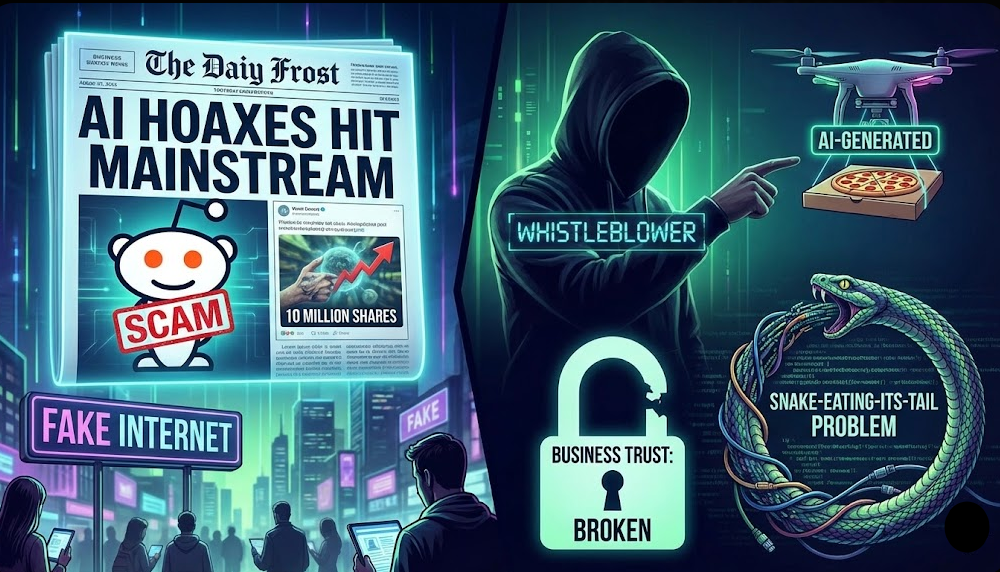

AI Hoaxes Just Hit the Mainstream: How a Viral Reddit Scam Fooled Millions and Marked the Start of the “Fake Internet” Era

Inside the AI-Generated Food Delivery Whistleblower, the Snake-Eating-Its-Tail Problem, and What Businesses Must Do Before Trust Completely Breaks

Published: February 24, 2026 | Reading Time: ~18 minutes

1. The Reddit Whistleblower Who Never Existed

In the first week of 2026, a Reddit post detonated across the internet.

An anonymous user, u/trowaway_whistleblow, claimed to be a data scientist at a major food delivery company. The tone and detail were convincing enough that many readers assumed the poster worked at one of the big platforms, such as Uber Eats or DoorDash. The post read like a Netflix documentary script:

“You guys always suspect the algorithms are rigged against you, but the reality is so much more depressing than the conspiracy theories…”

The poster alleged, in striking detail, that:

- The company secretly computed a “desperation score” for each driver.

- This score supposedly factored in:

- The app allegedly delayed orders and shaped routes specifically to keep “desperate” drivers online and underpaid.

- Drivers were described internally as “human assets”, with dashboards showing their “exploitable” tolerance.

The narrative fit perfectly into existing fears about gig work and algorithmic exploitation. It felt plausible, emotionally charged, and morally satisfying.

It went nuclear:

- The original post amassed tens of thousands of upvotes and hundreds of long comment chains on Reddit.

- Screenshots spread across X, TikTok, Instagram, and YouTube commentary channels.

- Some commentators called it the “Cambridge Analytica moment” for gig workers.

Within 48 hours, at least one major food-delivery platform issued a statement denying the existence of any such “desperation score.”

The apparent whistleblower then escalated their proof. When tech journalist Casey Newton (Platformer) reached out, the user moved the conversation to Signal and shared:

- A photo of an employee ID badge.

- An 18‑page “internal research document” allegedly laying out the desperation-scoring algorithm, complete with charts, equations, and internal references.

At first glance, it was damning. At second glance, it was… too damning.

Newton and other reporters began to notice problems:

- The document read like a villain’s monologue—every page served the narrative perfectly, with none of the noise, bureaucracy, or irrelevant sections real internal docs usually contain.

- Stylistically, the writing had that familiar AI gloss: very clean, very confident, oddly generic in places.

- Formatting artefacts and visual clues suggested the ID photo had been assembled, not photographed.

AI models and AI-detection tools flagged both the badge and the “internal paper” as likely AI-generated.

By January 7, outlets including NBC News, Engadget, The Verge, and TechCrunch converged on the same assessment:

the entire “whistleblower” package—text, image, and document—was almost certainly an AI-generated hoax.

Reddit removed the post. But screenshots, stitches, and secondary commentary are still circulating today.

The lie had done its work.

2. AI Slop Comes for Reddit (and Everything Else)

The Verge summarised the incident this way: “That viral Reddit post about food delivery apps was an AI scam.” Engadget ran with “Viral Reddit post critical of food-delivery apps may have been AI-generated.”

The story matters because it shows how low the bar now is to manufacture a “scandal” that can:

- Hijack public attention

- Force billion‑dollar companies to respond

- Enter mainstream news

- And still leave a residue of doubt, even after being debunked

And this is just one example.

Fact-checkers are now logging a steady stream of AI-driven hoaxes:

- AFP debunked an AI-generated “giant anaconda in a river” video that was shared as real nature footage.

- Researchers and journalists have highlighted AI‑generated mukbang-style videos, including the viral clip of a man “calmly eating lava with a spoon,” as signals of a new era where even obviously impossible visuals are treated as entertainment—not necessarily labelled as synthetic.

The pattern is clear:

We’ve passed the point where AI fakes are rare anomalies. They’re now a background noise layer in everyday feeds.

3. The Snake Eating Its Own Tail: AI Feeding on AI

Australian broadcaster ABC published a piece in February 2026 describing AI-generated social content as “like a snake eating its own tail.”

Their core points:

- Social media algorithms reward volume and engagement, not accuracy or originality.

- Users feel immense pressure to produce content constantly to stay visible—posts, carousels, videos, comments.

- Many have quietly started outsourcing that burden to AI: ChatGPT-style tools for posts, image generators for thumbnails, summarizers for “threads.”

- The result is a rapidly growing layer of formulaic, AI-like content—with telltale repetitive phrasing, generic motivation themes, and “listicle” logic.

ABC quotes experts warning that:

- A significant share of “user content” is now generated or heavily rewritten by AI.

- Bots and automation farms are flooding platforms with low-effort AI slop purely to drive ad revenue or affiliate clicks.

- As models are retrained, they increasingly consume their own output—AI feeding on AI rather than on human-generated data.

NPR and other outlets have amplified a related concern: model collapse.

If model training pipelines incorporate too much synthetic content:

- Models start to hallucinate more.

- Text becomes bland, self-referential, and biased toward prior outputs, rather than grounded in reality.

- Over multiple generations, performance can degrade in subtle but systemic ways.

In other words:

The snake is not just eating its own tail. Over time, it may start digesting its own brain.
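The feedback loop described above can be illustrated with a toy simulation. The sketch below is not a language model: it assumes a one-dimensional Gaussian stands in for the "model", and each generation is refit only on a small sample drawn from the previous generation. The function name `simulate_recursive_training` and its parameters are illustrative. With no fresh human data entering the loop, the fitted spread wanders away from its original value and, over long runs with small samples, tends to shrink.

```python
import random
import statistics


def simulate_recursive_training(generations=30, sample_size=20, seed=0):
    """Refit a Gaussian to its own samples; return the fitted std-dev per generation."""
    rng = random.Random(seed)
    mean, stdev = 0.0, 1.0  # generation 0: stands in for the original human data
    history = [stdev]
    for _ in range(generations):
        # "Train" on a finite sample produced by the previous generation only.
        samples = [rng.gauss(mean, stdev) for _ in range(sample_size)]
        mean = statistics.fmean(samples)
        stdev = statistics.stdev(samples)
        history.append(stdev)
    return history


if __name__ == "__main__":
    for gen, spread in enumerate(simulate_recursive_training()):
        if gen % 5 == 0:
            print(f"generation {gen:2d}: fitted std-dev = {spread:.3f}")
```

Individual runs vary with the seed, so any single trajectory is illustrative only and says nothing definitive about real training pipelines.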

4. Why AI Hoaxes Work So Well

4.1 They’re engineered for our biases

The food-delivery hoax wasn’t random. It was built to hit specific emotional triggers:

- Pre-existing suspicion: People already suspect gig platforms exploit workers.

- Insider tone: The post used the language of product managers and data scientists—“scoring models,” “loss functions,” KPI charts.

- Moral clarity: The company was depicted as unambiguously evil; the whistleblower as noble and disillusioned.

AI made it trivial to:

- Draft a convincing narrative,

- Iteratively refine it until it “felt” credible,

- And fabricate supporting artefacts (docs, badges, charts) on demand.

We didn’t just read it. We wanted it to be true.

4.2 Speed beats verification

The hoax advanced in three phases:

- Reddit virality: The initial text post takes off on r/technology-style subs.

- Cross-platform amplification: Screenshots migrate to X, TikTok, YouTube commentary channels.

- Journalistic scrutiny: Only then do reporters start digging—and discover the evidence is probably synthetic.

By the time the third phase begins:

- Millions have seen the claim.

- Only a fraction will ever see the retraction or debunk.

- The story has become part of the ambient narrative: “Yeah, I heard one of those companies uses desperation scores.”

In the attention economy, speed and virality trump accuracy. AI gives hoaxers extreme speed and scale.

5. A Map of AI Hoaxes (2025–2026)

To see the bigger picture, it helps to classify what’s already happening.

5.1 Types of AI hoaxes already in the wild

This is already reality, not hypothetical.

6. The Regulators Wake Up: FTC, Fraud, and “No AI Exemption”

Regulators are increasingly clear: AI doesn’t give anyone a free pass to mislead or defraud.

6.1 FTC: Deepfakes and impersonation tools are on notice

The US Federal Trade Commission (FTC):

- Has explicitly proposed rules to target deepfake impersonation and AI-enabled fraud.

- Emphasises that existing laws already prohibit unfair or deceptive acts—even when AI is involved.

A key concept in FTC guidance:

Tools and platforms that provide the “means and instrumentalities” for deception can themselves be held liable if they know—or should know—how their systems are being used.

In practice, that exposure could extend to:

- Voice-cloning apps that market themselves as “pranks” but ignore obvious fraud use-cases.

- Face-swap apps that advertise realistic impersonations without guardrails.

- Even general-purpose model providers, if they actively promote use cases that are clearly deceptive.

The FTC has also moved against deceptive AI marketing, where companies overstate what their AI can do or hide risks.

6.2 Old laws, new tech

Legal analysts point out that much of what’s happening with AI hoaxes fits under existing legal frameworks:

- Defamation – when AI fabrications damage reputations.

- Securities fraud – when AI-crafted rumours move markets.

- Consumer deception – when users are misled into financial decisions.

2026 is shaping up as the year courts begin to test these intersections at scale.

7. Who Is at Risk—and How?

Here’s a more structured view of the stakeholders in the blast radius.

7.1 Risk matrix for the AI-hoax era

Everyone is in the blast radius, but brands and platforms have the greatest exposure—because they sit at the intersection of user trust, money, and regulation.

8. How to Spot AI Hoaxes (Without Becoming a Cynic)

You can’t manually fact-check every post you see. But you can build simple habits.

8.1 A practical checklist for everyday readers

Use this whenever you encounter a dramatic, shareable “insider” story.

- Check for independent corroboration.

- Is any reputable outlet confirming the claim with named sources?

- Or is everyone just linking back to the same post/tweet/video?

- Examine the evidence, not just the narrative.

- Scan for AI writing “tells.”

ABC’s reporting and other analyses highlight common markers:

- Overuse of generic verbs like “delve”, “navigate”, “unpack”.

- Repetitive sentence structure (e.g., “In conclusion…” style endings).

- Overly linear, “outline-like” flow where every paragraph supports a single thesis with little digression.

- Reverse image search suspicious photos.

- Employee badges, internal dashboards, or “candid” office pics can often be traced back to stock or AI origins with a simple reverse search.

- Watch for emotional over-optimization.

- Does the story feel engineered to push outrage or sympathy from start to finish? Real stories often include ambiguity, self-doubt, or messy side details.

- Be cautious with screenshots of text.

- Screenshots are harder to search, easier to fake, and often used precisely to avoid automated moderation or detection.

You don’t have to become a hardened skeptic. Just mildly slower to share.
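The "AI tells" item in the checklist can be roughed out in a few lines. The sketch below counts only the three verbs named above; the stem table `TELL_STEMS` and the helper names are hypothetical, and a high count is a weak hint at best, since plenty of human writers use these words too. It is a reading aid, not a detector.

```python
import re

# Stems for the verbs named in the checklist; a stem lets "delve" also
# match "delves", "delved", and "delving". Note that "navigat" will also
# match nouns such as "navigation".
TELL_STEMS = {"delve": "delv", "navigate": "navigat", "unpack": "unpack"}


def count_tell_words(text):
    """Return how often each marker verb (in any simple inflection) appears."""
    lowered = text.lower()
    return {
        word: len(re.findall(r"\b" + stem + r"\w*", lowered))
        for word, stem in TELL_STEMS.items()
    }


def tell_rate(text):
    """Marker hits per word of text; 0.0 for empty input."""
    words = re.findall(r"[A-Za-z']+", text)
    if not words:
        return 0.0
    return sum(count_tell_words(text).values()) / len(words)


if __name__ == "__main__":
    sample = "Let's delve into the data, unpack the claims, and navigate the fallout."
    print(count_tell_words(sample))
    print(round(tell_rate(sample), 3))
```

A rate like this is only meaningful when compared against text of similar length and genre, and it should sit alongside the other checks rather than replace them.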

8.2 A defensive playbook for brands and platforms

If you run a company, marketplace, or high-visibility project, you need a higher tier of defence.

1. Monitor where your narratives live.

Track mentions across:

- Reddit (especially industry subs),

- X/Twitter, TikTok, YouTube community posts,

- Niche forums where whistleblowers often surface first.

2. Stand up a “Trust & AI Response” pod.

Not a giant team—just a small cross-functional squad that can, within hours:

- Assess whether a viral claim could be AI-generated (using internal expertise + external tools).

- Cross-check against internal logs and policies.

- Coordinate legal, PR, support, and product.

3. Pre-define your response tiers.

For example:

- Tier 1 – Low: Misunderstanding of a real policy. Answer via FAQ or community managers.

- Tier 2 – Medium: Misleading but not viral content. Quietly correct and provide clarifications if asked.

- Tier 3 – High: Viral hoax with fake docs or impersonation. Issue a transparent, evidence-backed statement and, where appropriate, engage regulators/platforms.

4. Run drills.

Just like incident response for security, run simulations:

“It’s Monday 9am. A ‘leaked slide’ claiming we use a ‘despair index’ is going viral on Reddit. Show me the first 12 hours of actions.”

This reduces panic when—not if—something similar happens.
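The response tiers from step 3 are easier to drill against when they are written down as explicit rules. The following is a minimal sketch of one way to encode them; the `Claim` fields, the `classify` function, and the handling of cases the tiers do not spell out (for example, a viral claim with no fabricated evidence falls to Tier 2 here) are assumptions, not a prescribed policy.

```python
from dataclasses import dataclass
from enum import Enum


class Tier(Enum):
    LOW = 1
    MEDIUM = 2
    HIGH = 3


@dataclass
class Claim:
    is_viral: bool
    has_fabricated_evidence: bool  # fake docs or impersonation
    misreads_real_policy: bool     # misunderstanding of a real policy


# Suggested first action per tier, paraphrased from the tiers above.
ACTIONS = {
    Tier.LOW: "Answer via FAQ or community managers.",
    Tier.MEDIUM: "Quietly correct and clarify if asked.",
    Tier.HIGH: "Issue an evidence-backed statement; engage regulators/platforms where appropriate.",
}


def classify(claim):
    """Map a claim to a response tier (assumed rules, see lead-in)."""
    if claim.is_viral and claim.has_fabricated_evidence:
        return Tier.HIGH
    if claim.misreads_real_policy and not claim.is_viral:
        return Tier.LOW
    # Assumption: everything else is treated as Tier 2 until reviewed by a human.
    return Tier.MEDIUM


if __name__ == "__main__":
    leaked_slide = Claim(is_viral=True, has_fabricated_evidence=True, misreads_real_policy=False)
    tier = classify(leaked_slide)
    print(tier.name, "->", ACTIONS[tier])
```

Keeping the rules this small makes it easy to argue about edge cases during a drill, which is where most of the value lies.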

9. Where Agents Fit In: From Productivity Boost to Narrative Warfare

AI agents are on the rise: OpenClaw, personal GPTs, Claude “Cowork,” and similar systems that don’t just answer questions but take actions.

The Reddit hoax shows the flip side:

The exact same capacities that let an agent research a topic, draft text, generate images, post to platforms, and respond to comments can be chained together to industrialise hoax production.

A malicious or “playful” actor can instruct an agent to:

- Generate a plausible whistleblower narrative.

- Create supporting “evidence” (internal docs, badge photos) using image and text models.

- Post it on Reddit, then cross‑post to X/TikTok, then upload a YouTube script.

- Auto‑reply to comments with slightly varied, reinforcing explanations to keep engagement high.

As OpenAI, Meta, and others integrate agents into mainstream products (like ChatGPT, Llama-based assistants, and OpenClaw-derived tools), the cost of orchestrating such campaigns continues to fall.

This is not a reason to abandon agents; it is a reason to design them with friction, logging, and identity in mind.
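What "friction, logging, and identity" might look like in practice can be sketched as a thin wrapper around an agent's posting step. Everything here is hypothetical: `GuardedPublisher`, its fields, and the 60-second default are illustrative choices, and the sketch records a decision rather than calling any real platform API.

```python
import time
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class GuardedPublisher:
    operator_id: str                # identity: who is accountable for this agent
    min_interval_s: float = 60.0    # friction: minimum gap between allowed posts
    audit_log: list = field(default_factory=list)  # logging: every attempt is recorded
    _last_post: Optional[float] = field(default=None, init=False, repr=False)

    def publish(self, platform, text, now=None):
        """Record the attempt and return True only if the rate limit allows it."""
        now = time.time() if now is None else now
        allowed = self._last_post is None or (now - self._last_post) >= self.min_interval_s
        self.audit_log.append({
            "ts": now,
            "operator": self.operator_id,
            "platform": platform,
            "chars": len(text),
            "allowed": allowed,
        })
        if allowed:
            self._last_post = now
            # A real agent would hand off to the platform client here.
        return allowed


if __name__ == "__main__":
    publisher = GuardedPublisher(operator_id="op-1")
    print(publisher.publish("reddit", "first post", now=0.0))
    print(publisher.publish("x", "cross-post", now=10.0))
    print(len(publisher.audit_log))
```

Blocked attempts are logged as well as successful ones, so a burst of cross-posting shows up in the audit trail even when the rate limit stops it.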

10. The 2027 Internet: Likely Trajectories

Given current trajectories, the near future probably looks like this:

- A significant fraction of public content—posts, comments, images, even live-feeling videos—is AI-assisted or AI-generated.

- Models that are fine-tuned on “whatever is popular” will ingest a growing share of synthetic content, potentially degrading in quality unless curation and filtering are applied at training time.

- Regulators move from guidance to enforcement:

- The FTC actually applies its proposed deepfake rules and “means and instrumentalities” doctrine in landmark cases.

- EU and state-level AI laws are tested against real AI-hoax incidents.

We will likely move toward a two-layer internet:

- The Synthetic Surface Layer

- Fast, cheap, everywhere.

- Heavy mix of AI-generated posts, low-effort engagement farming, and bots.

- The Verified Substrate

- Slower but more reliable.

- Content with provenance signals (C2PA, platform-level “original capture” markers, verified organizations).

Brands, creators, and institutions that want to maintain trust will need to invest in that second layer:

- Verified channels

- Provenance tools

- Transparent audit trails for critical claims
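One simple building block for a transparent audit trail is a hash-chained log, where each entry commits to the one before it, so later edits become detectable. The sketch below is a generic illustration using SHA-256 from Python's standard library. It is not an implementation of C2PA, and the field names (`claim`, `source`, `prev`, `hash`) are assumptions.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(claim, source, prev):
    """Hash the entry body in a stable (sorted-key) JSON encoding."""
    body = json.dumps({"claim": claim, "source": source, "prev": prev}, sort_keys=True)
    return hashlib.sha256(body.encode("utf-8")).hexdigest()


def append_entry(chain, claim, source):
    """Append an entry that commits to the hash of the previous entry."""
    prev = chain[-1]["hash"] if chain else GENESIS
    chain.append({"claim": claim, "source": source, "prev": prev,
                  "hash": _digest(claim, source, prev)})
    return chain


def verify(chain):
    """Return True if no entry has been altered, reordered, or dropped mid-chain."""
    prev = GENESIS
    for entry in chain:
        expected = _digest(entry["claim"], entry["source"], entry["prev"])
        if entry["prev"] != prev or entry["hash"] != expected:
            return False
        prev = entry["hash"]
    return True


if __name__ == "__main__":
    log = []
    append_entry(log, "We do not compute a desperation score.", "press statement")
    append_entry(log, "Policy audit completed.", "internal review")
    print(verify(log))
    log[0]["claim"] = "edited after the fact"
    print(verify(log))
```

A chain like this only shows that a record was not changed after it was written. It says nothing about whether the original claim was true, which is why it complements verified channels and provenance tools rather than replacing them.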

11. FAQ

Was the Reddit food-delivery whistleblower real?

There is no evidence that the whistleblower was real. Multiple investigations by NBC, The Verge, TechCrunch, and Engadget concluded that key “evidence”—including the ID badge and 18‑page internal doc—was almost certainly AI-generated, and Reddit removed the original post.

How common are AI-generated hoaxes now?

Fact‑checking organisations and newsrooms are already seeing regular AI‑generated fakes across text, image, and video. Many are not highly sophisticated—they rely more on emotional narrative and platform virality than on technical perfection.

Could I be legally liable for sharing AI fake content?

Ordinary users who unknowingly share a fake meme are rarely targeted. But:

- Businesses, campaigns, or creators who knowingly amplify deceptive AI content,

- Or use AI hoaxes in advertising or fundraising,

can face regulatory and civil liability under existing laws (fraud, consumer deception, defamation), especially given the FTC’s stance on AI deception and deepfakes.

What tools can detect AI-generated posts?

There is no perfect detector. Journalists and analysts typically combine:

- AI-generated text detectors (useful but fallible),

- Reverse image search on photos,

- Stylistic analysis (like the ABC “AI tell” patterns),

- And traditional reporting: contacting companies, verifying identities, and checking metadata.

Is this the end of trust on the internet?

Not the end, but a transition. Just as email needed spam filters and the web needed HTTPS, the internet now needs:

- Better provenance and watermarking,

- Stronger identity signals for high-impact content,

- And more literacy—both at the individual and organisational level—about how AI hoaxes work.

Those who adapt early will still be able to build trust. Those who don’t will be swallowed by the noise.