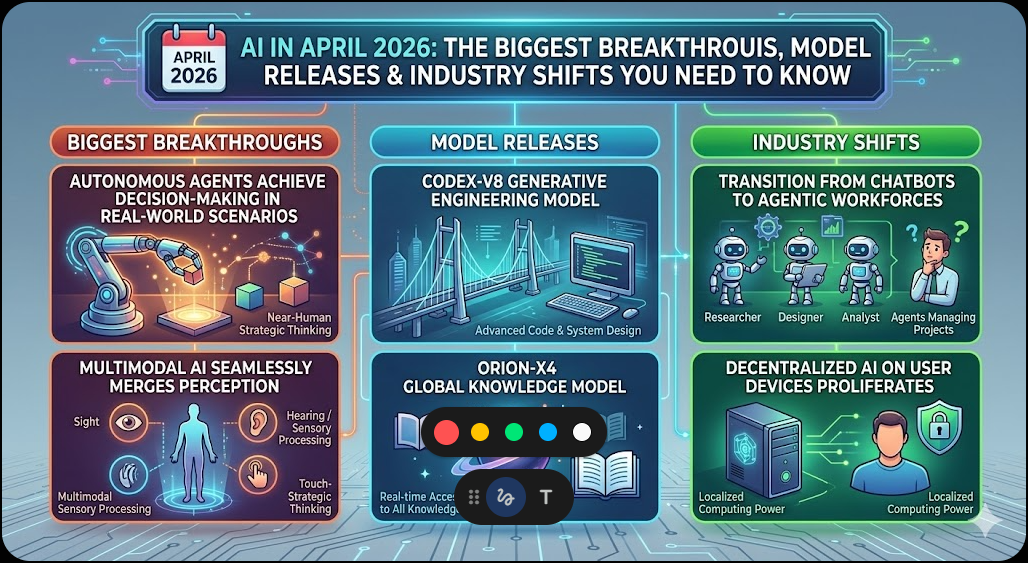

AI in April 2026: The Biggest Breakthroughs, Model Releases & Industry Shifts You Need to Know

By Kersai | April 16, 2026

Introduction

April 2026 has proven to be the most consequential month in the history of artificial intelligence. In the weeks and months leading into it, the industry witnessed four of the five largest venture capital rounds ever recorded, the release of frontier AI models that match or exceed human professionals on most tasks sampled from 44 occupations, and the single largest merger in corporate history, creating an entity valued at over $1.25 trillion. This is no longer a technology story. It is an economic, geopolitical, and societal inflection point.

The data is unambiguous. Q1 2026 saw global startup funding hit a record $297 billion, with AI startups absorbing $242 billion, or 81% of all venture capital deployed globally[web:47][web:50]. Four of the five largest venture rounds in history closed in a single quarter: OpenAI raised $122 billion, Anthropic $30 billion, xAI $20 billion, and Waymo $16 billion[web:50]. SpaceX completed its acquisition of xAI for $250 billion, creating a vertically integrated entity valued at $1.25 trillion[web:22][web:31]. And on the technical front, the frontier models of April 2026 (GPT-5.4, released in March; Gemini 3.1 Pro, in preview since February; and Claude Mythos 5, confirmed in April) have crossed capability thresholds that were considered theoretical just 18 months ago.

This article provides a comprehensive, data-driven analysis of every major AI development in April 2026 — from model benchmarks to funding flows, from enterprise adoption statistics to the physical AI wave now entering the real world.

Section 1 — The Model Wars: April 2026’s Unprecedented Release Window

April 2026 has become the densest AI model release window in the industry’s history. Three frontier labs — OpenAI, Anthropic, and Google DeepMind — have each launched or confirmed major new models within weeks of each other. The benchmarks tell a story of rapid, simultaneous advancement across multiple capability domains.

GPT-5.4: The First Truly Unified Frontier Model

OpenAI released GPT-5.4 on March 5, 2026, and it has since established itself as the most versatile frontier model available to the public. Unlike every prior generation, GPT-5.4 is not a collection of specialist variants — it is a single model that credibly leads across coding, computer use, reasoning, and knowledge work simultaneously[web:17].

Key Benchmarks:

| Benchmark | GPT-5.4 Score | What It Measures | Industry Context |
|---|---|---|---|
| GDPval | 83.0% | Real-world knowledge work across 44 occupations | Highest published score. Up 12.1 points from GPT-5.2’s 70.9%[web:19][web:24] |
| BigLaw Bench | 91% | Complex transactional legal analysis | Better at structuring transactional analysis than any prior model[web:17] |
| Artificial Analysis Intelligence Index | 57 (tied #1) | Composite score across 10 evaluations | Tied with Gemini 3.1 Pro for #1 overall out of 339 models[web:36] |
| BenchLM Overall Score | 94/100 (#2 of 106) | Broad capability assessment | Ranks #2 on provisional leaderboard, #3 verified[web:18] |
| Arena Elo | 1466 | Community preference rating | Top-tier competitive standing[web:18] |
| OSWorld (Computer Use) | 75.0% | Autonomous computer task completion | Human expert baseline is 72.4%[web:24] |
| SWE-bench Pro | 57.7% | Real-world software engineering | Industry-leading coding performance[web:24] |

The GDPval benchmark deserves particular attention. Developed by OpenAI, it tests AI performance across 44 real-world occupations spanning the top 9 industries contributing to U.S. GDP — including software engineers, lawyers, financial analysts, registered nurses, and mechanical engineers[web:19]. An 83% score means GPT-5.4 matched or exceeded the output of human industry professionals in 83% of comparisons. Independent analysis by Ethan Mollick indicates this translates to approximately 4 hours and 38 minutes of time saved per 7-hour task, even accounting for failure rates and the need to verify results[web:20].
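To make the time-savings figure concrete, here is a minimal sketch that converts the numbers quoted above into a share of task time. It uses only figures from the text; the variable and function names are illustrative.

```python
# Figures quoted in the text: 4 h 38 min saved on a 7-hour task.
TASK_MINUTES = 7 * 60
SAVED_MINUTES = 4 * 60 + 38


def fraction_saved(saved_minutes: int, task_minutes: int) -> float:
    """Share of the original task time that is saved."""
    return saved_minutes / task_minutes


saved_share = fraction_saved(SAVED_MINUTES, TASK_MINUTES)
remaining_minutes = TASK_MINUTES - SAVED_MINUTES

# GDPval scores quoted in the benchmark table (GPT-5.4 vs GPT-5.2).
gdpval_gain = round(83.0 - 70.9, 1)

print(f"Time saved: {saved_share:.1%}")
print(f"Time still spent: {remaining_minutes} min")
print(f"GDPval gain over GPT-5.2: {gdpval_gain} points")
```

On these figures, roughly two thirds of the task time is saved, leaving a little under two and a half hours of human work, including verification.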

GPT-5.4 is available in five variants covering the full spectrum of deployment needs: Standard, Thinking, Pro, Mini, and Nano. Its context window extends to 1.05 million tokens, and it is priced at $2.50 per million input tokens and $15 per million output tokens[web:18].
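As a rough illustration of what those list prices mean per request, the sketch below estimates cost from token counts. It assumes flat per-token pricing with no tiering, caching discounts, or long-context surcharges, and it treats the context window as a limit on input plus output tokens; both are simplifying assumptions, not details confirmed by the source.

```python
# Prices and context window quoted in the text.
INPUT_USD_PER_MTOK = 2.50
OUTPUT_USD_PER_MTOK = 15.00
CONTEXT_WINDOW_TOKENS = 1_050_000


def request_cost_usd(input_tokens: int, output_tokens: int) -> float:
    """Estimated cost of one request under flat per-token pricing (assumed)."""
    if input_tokens + output_tokens > CONTEXT_WINDOW_TOKENS:
        # Assumption: input and output share the same window.
        raise ValueError("request exceeds the quoted context window")
    total = input_tokens * INPUT_USD_PER_MTOK + output_tokens * OUTPUT_USD_PER_MTOK
    return total / 1_000_000


# Hypothetical workload: a 200K-token document with a 4K-token answer.
example_cost = request_cost_usd(200_000, 4_000)
print(f"Estimated cost: ${example_cost:.2f}")
```

Because output tokens cost six times as much as input tokens, long generated answers dominate the bill faster than long prompts do.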

Claude Mythos 5: The Model Too Powerful to Release

Anthropic confirmed Claude Mythos 5 in early April 2026 — but with an unprecedented caveat: it will not be released publicly, nor made available via standard API[web:7]. Internal testing triggered Anthropic’s ASL-4 safety protocol, a classification reserved for models approaching genuinely dangerous capability thresholds[web:23].

Claude Mythos 5 is the first AI model to cross the 10-trillion-parameter threshold. It uses a Mixture of Experts (MoE) architecture where only an estimated 800 billion to 1.2 trillion parameters are active per forward pass — giving it the knowledge capacity of 10 trillion parameters with the computational cost of a ~1 trillion parameter dense model[web:23].
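The sketch below illustrates the general Mixture of Experts idea described above: a router scores the experts and only the top few run for each token. It is a toy, pure-Python illustration of top-k routing in general, not a description of Anthropic’s architecture; the expert count, gate scores, and `k` are hypothetical, and only the parameter totals come from the text.

```python
import math

# Parameter counts quoted in the text.
TOTAL_PARAMS = 10e12
ACTIVE_RANGE = (0.8e12, 1.2e12)


def active_fraction(active: float, total: float) -> float:
    """Share of all parameters used for a single token."""
    return active / total


def top_k_route(gate_scores: list[float], k: int) -> dict[int, float]:
    """Pick the k highest-scoring experts and softmax-normalise their weights."""
    ranked = sorted(range(len(gate_scores)), key=lambda i: gate_scores[i], reverse=True)
    chosen = ranked[:k]
    exps = {i: math.exp(gate_scores[i]) for i in chosen}
    norm = sum(exps.values())
    return {i: e / norm for i, e in exps.items()}


low, high = (active_fraction(a, TOTAL_PARAMS) for a in ACTIVE_RANGE)
print(f"Active share per token: {low:.0%} to {high:.0%}")

# Toy router output for one token over 8 hypothetical experts; 2 are selected.
routing = top_k_route([0.1, 2.0, -1.0, 0.5, 1.5, 0.0, -0.3, 0.7], k=2)
print(routing)
```

On the quoted estimates, only about 8% to 12% of the model’s parameters would be active for any given token, which is why compute cost tracks the active count rather than the total.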

Architectural Features:

| Feature | Details |
|---|---|
| Total Parameters | 10 trillion (MoE architecture) |
| Active Parameters (estimated) | 800B – 1.2T per token |
| Training Tokens | 15.5 trillion |
| Domain-Specific Clusters | Cybersecurity, academic research, complex software engineering |
| Safety Protocol Triggered | ASL-4 (highest risk tier) |
| Release Status | Withheld from public access |

Mythos 5 has dedicated expert clusters for cybersecurity, academic research, and complex software engineering — a deliberate design choice targeting use cases where scale provides the most differentiated value. Its cybersecurity capabilities include full attack chain analysis: given a network topology and set of known vulnerabilities, Mythos 5 can construct complete multi-stage attack chains including lateral movement paths, privilege escalation sequences, and data exfiltration routes[web:23]. Anthropic has implemented additional safety layers that prevent the model from generating working exploits targeting production systems or assisting with offensive operations against real infrastructure.

The decision to withhold Mythos 5 from public release marks the first time a major frontier lab has built a completed model deemed too capable to deploy. It raises questions the industry has quietly avoided: what happens when a model’s capabilities exceed our collective readiness to govern its use?

Gemini 3.1 Pro: Google’s Multimodal Answer

Google DeepMind released Gemini 3.1 Pro into preview on February 19, 2026, and it has since emerged as the most capable multimodal model Google has ever shipped[web:33][web:36].

Key Benchmarks:

| Benchmark | Gemini 3.1 Pro Score | What It Measures | Comparison |
|---|---|---|---|
| ARC-AGI-2 | 77.1% | Novel abstract reasoning | Highest of all published models. More than double Gemini 3 Pro’s score[web:33] |
| GPQA Diamond | 94.3% | Graduate-level science Q&A | Highest score ever reported on this benchmark[web:35] |
| Humanity’s Last Exam | 44.4% | Frontier knowledge assessment | Outperformed Claude Opus 4.6 and GPT-5.2[web:35] |
| Artificial Analysis Intelligence Index | 57 (tied #1) | Composite across 10 evaluations | Tied with GPT-5.4 for #1 overall[web:36] |

Gemini 3.1 Pro features a 1-million-token context window, among the largest of any publicly available flagship model and just behind GPT-5.4’s 1.05 million tokens[web:36]. It processes text, image, audio, and video simultaneously through a native multimodal architecture rather than stitching together separate encoders. It is available via the Gemini API, Vertex AI, Google AI Studio, and the Gemini consumer app.

Head-to-Head: April 2026 Frontier Models

| Feature | GPT-5.4 | Gemini 3.1 Pro | Claude Mythos 5 | Claude Opus 4.6 |
|---|---|---|---|---|
| GDPval | 83.0% | Not published | Not published | 59.6% |
| ARC-AGI-2 | 73.3% | 77.1% | N/A | ~68–72% |
| GPQA Diamond | Not published | 94.3% | N/A | 91.3% |
| BigLaw Bench | 91% | Not published | N/A | N/A |
| Composite Index (AAI) | 57 (tied #1) | 57 (tied #1) | N/A | 53 |
| Context Window | 1.05M tokens | 1M tokens | ~500K (est.) | 200K |
| Active Parameters | MoE (undisclosed) | MoE (undisclosed) | ~800B–1.2T | ~1T |
| Public Access | Yes (all tiers) | Yes (API/Vertex) | No (withheld) | Yes (paid) |
| Release Date | March 5, 2026 | February 19, 2026 | April 2026 | Early 2026 |

Section 2 — The SpaceX–xAI Megadeal: The Largest M&A Transaction in History

On February 2, 2026, Elon Musk announced that SpaceX had acquired xAI in a deal that values xAI at $250 billion and the combined entity at over $1.25 trillion — the single largest merger and acquisition transaction ever recorded[web:22][web:31]. The deal was confirmed by CNBC’s David Faber on the same day and subsequently reported by Reuters, the BBC, and every major financial outlet[web:28][web:31].

Deal Structure:

| Component | Details |
|---|---|
| xAI Valuation | $250 billion (based on recent funding round) |
| SpaceX Internal Valuation | $1 trillion (up from ~$800B prior) |
| Combined Entity Value | $1.25 trillion |
| Share Exchange Ratio | 0.143 SpaceX shares per xAI share |
| Cash Option | $75.46 per share for select executives |
| Expected IPO | Mid-2026, potentially raising up to $50B |

The strategic logic is compelling. SpaceX’s Starlink satellite broadband business has delivered strong revenue growth, supporting the internal valuation lift from approximately $800 billion in secondary market transactions to $1 trillion[web:22]. The acquisition folds xAI’s AI capabilities into SpaceX’s existing space, satellite, and social media (X/Twitter) ecosystem — creating what Musk described as “the most ambitious, vertically-integrated innovation engine on (and off) Earth”[web:31].

Tesla had previously announced a $2 billion investment in xAI the month before the merger, and xAI was valued at $230 billion in November 2025 according to the Wall Street Journal[web:28][web:31]. The $250 billion valuation in the SpaceX deal represents modest growth from that baseline.
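The deal arithmetic can be checked with a short calculation. This sketch uses only the figures quoted in this section. The implied SpaceX share price rests on an assumption that the source does not confirm: that the cash option was set to match the value of the share exchange.

```python
# Figures quoted in this section.
XAI_VALUATION_NOV_2025_BN = 230.0
XAI_VALUATION_DEAL_BN = 250.0
EXCHANGE_RATIO = 0.143          # SpaceX shares per xAI share
CASH_PER_XAI_SHARE_USD = 75.46  # cash option per xAI share


def pct_change(old: float, new: float) -> float:
    """Relative change from old to new."""
    return (new - old) / old


valuation_growth = pct_change(XAI_VALUATION_NOV_2025_BN, XAI_VALUATION_DEAL_BN)

# Assumption: cash option and share exchange are economically equivalent.
implied_spacex_share_usd = CASH_PER_XAI_SHARE_USD / EXCHANGE_RATIO

print(f"xAI valuation growth since November 2025: {valuation_growth:.1%}")
print(f"Implied SpaceX share price: ${implied_spacex_share_usd:,.2f}")
```

The growth figure comes out just under 9%, which is consistent with the “modest growth” characterisation above.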

The combined entity is widely expected to pursue an IPO as early as mid-2026, subject to regulatory approvals and market conditions. If it proceeds, the offering could raise as much as $50 billion — potentially the largest public listing in history[web:22].

Section 3 — Q1 2026: The Largest Venture Capital Quarter Ever Recorded

The funding data for Q1 2026 is difficult to overstate. Global startup investment hit a record $297 billion in the first three months of the year — a 150% increase both quarter-over-quarter and year-over-year[web:48][web:49]. Of that total, AI startups absorbed $242 billion, or 81% of all venture capital deployed globally[web:47].

Four of the Five Largest Venture Rounds Ever (All in Q1 2026):

| Rank | Company | Amount Raised | Focus |
|---|---|---|---|
| 1 | OpenAI | $122 billion | Frontier AI models & infrastructure |
| 2 | Anthropic | $30 billion | Safe AI development |
| 3 | xAI | $20 billion | AI + space integration |
| 4 | Waymo | $16 billion | Autonomous vehicles |

These four mega-deals alone (OpenAI, Anthropic, xAI, and Waymo) totaled $188 billion, reported as 65% of all global venture investment in the quarter[web:50]; on the rounded figures quoted here, $188 billion of $297 billion works out to about 63%. To put this in perspective: these four rounds exceeded the total global venture funding of all of 2024.
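The concentration figures are easy to reproduce. The sketch below recomputes the shares from the rounded dollar amounts quoted in this section. On those rounded inputs the top-four share comes out slightly below the reported 65%, a gap that may reflect rounding or a different underlying total in the source data.

```python
# Rounded figures quoted in this section, in billions of US dollars.
GLOBAL_VC_BN = 297
AI_VC_BN = 242
MEGA_ROUNDS_BN = {"OpenAI": 122, "Anthropic": 30, "xAI": 20, "Waymo": 16}

top4_total = sum(MEGA_ROUNDS_BN.values())
top4_share = top4_total / GLOBAL_VC_BN        # share of all venture funding
ai_share = AI_VC_BN / GLOBAL_VC_BN            # AI share of all venture funding
top4_share_of_ai = top4_total / AI_VC_BN      # share of AI funding alone

print(f"Top four rounds: ${top4_total}B")
print(f"Share of global venture funding: {top4_share:.1%}")
print(f"AI share of global venture funding: {ai_share:.1%}")
print(f"Top four as a share of AI funding: {top4_share_of_ai:.1%}")
```

Seen this way, the four rounds account for more than three quarters of all AI venture funding in the quarter, so the headline AI share is driven largely by a handful of deals.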

The concentration is staggering. Previous record-setting quarters saw AI accounting for approximately 55% of global venture funding. In Q1 2026, that figure jumped to 81%[web:47]. The implication is clear: capital is fleeing every other sector and concentrating almost exclusively on AI infrastructure, models, and applications.

US-specific data shows $267 billion in venture funding for the quarter, driven by the same massive AI deals[web:50]. This funding surge is accelerating infrastructure buildout at a pace that energy grids are struggling to support — a topic covered in the infrastructure section below.

Section 4 — Agentic AI: From Concept to Enterprise Production

Perhaps the most strategically significant trend of 2026 is the rapid shift from conversational AI to agentic AI — systems that plan, act, and learn toward goals without step-by-step human prompting. In January 2026, just 12 months after the first agentic AI pilots, more than 4 in 10 organizations already had AI agents in production[web:40].
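For readers who want a concrete picture of “plan, act” behaviour, here is a minimal sketch of an agent loop. Everything in it is hypothetical: the invoice task, the three tools, and the rule-based planner, which stands in for what would be a model-driven planner in a real system. The “learn” step is not modelled.

```python
from typing import Callable, Optional


# Hypothetical tools: each mutates the working state and returns an observation.
def fetch_invoice(state: dict) -> str:
    state["invoice"] = {"id": "INV-001", "amount": 120.0}
    return "fetched"


def validate_invoice(state: dict) -> str:
    state["valid"] = state["invoice"]["amount"] > 0
    return "valid" if state["valid"] else "invalid"


def file_invoice(state: dict) -> str:
    state["filed"] = True
    return "filed"


TOOLS: dict[str, Callable[[dict], str]] = {
    "fetch": fetch_invoice,
    "validate": validate_invoice,
    "file": file_invoice,
}


def plan_next_step(state: dict) -> Optional[str]:
    """Rule-based stand-in for a model-driven planner."""
    if "invoice" not in state:
        return "fetch"
    if "valid" not in state:
        return "validate"
    if state["valid"] and not state.get("filed"):
        return "file"
    return None  # goal reached, or nothing safe left to do


def run_agent(max_steps: int = 10) -> tuple[dict, list[tuple[str, str]]]:
    """Plan, act, observe, and repeat until the planner has nothing left to do."""
    state: dict = {}
    trace: list[tuple[str, str]] = []
    for _ in range(max_steps):  # hard step limit as a basic guardrail
        action = plan_next_step(state)
        if action is None:
            break
        observation = TOOLS[action](state)
        trace.append((action, observation))
    return state, trace


final_state, trace = run_agent()
print(trace)
```

The point of the loop is that no human issues the individual steps: the system chooses each action from its current state, which is what separates an agent from a chatbot that answers one prompt at a time.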

Agentic AI Adoption Statistics:

| Metric | Statistic | Source |
|---|---|---|
| Organizations with AI agents in production | 43% | ServiceNow |
| Organizations experimenting with AI agents | 62% | McKinsey |
| Organizations scaling agents in at least one function | 23% | McKinsey |
| Enterprise applications with embedded task-specific AI agents (projected by end of 2026) | 40% | Gartner |
| Organizations with at least some level of AI agent adoption | 79% | Multimodal research |
| Organizations planning to expand agentic AI adoption in 2026 | 100% | CrewAI survey |
| Average workflows with agentic AI per organization | 31 | CrewAI survey |
| Expected increase in agentic AI adoption for 2027 | 33% | CrewAI survey |

The Mayfield Fund’s “Agentic Enterprise in 2026” report notes that over 72% of enterprises are either in production with or actively piloting agentic AI — a dramatic acceleration from the exploratory pilot phase that dominated 2025[web:40]. The CrewAI survey of enterprises found that 65% are already actively using AI agents, with 81% reporting that their adoption is either fully realized or in the process of expansion[web:46].

The shift from vertical SaaS to vertical AI represents a fundamental change in how software is sold and delivered. While traditional SaaS sold tools to help humans work, vertical AI sells the outcome of the labor itself — allowing founders to capture portions of traditional labor budgets in industries like insurance, legal, and logistics[web:11]. In 2026, successful businesses are increasingly structured around leading teams of AI agents rather than managing teams of people.

McKinsey’s projection that agentic systems could automate up to 70% of knowledge worker tasks by 2028 is no longer speculative — it is being validated by early enterprise deployments across financial services, legal, healthcare administration, and software engineering[web:37].

Section 5 — AI Infrastructure: The Power Crisis & the Chip Wars

Behind every frontier model is an infrastructure challenge that is now becoming impossible to ignore. The U.S. faces a projected 9–18 gigawatt (GW) electricity shortfall by 2027 as hyperscalers race to build AI data centres faster than the grid can support them.

This has triggered a “bring your own power” strategy among the largest AI companies. Microsoft has reopened Three Mile Island. Amazon is in active discussions over multiple dedicated nuclear sites. The trend toward off-grid nuclear and gas-peaker plants adjacent to data centres is accelerating across the industry.

Google’s TurboQuant Breakthrough

At ICLR 2026, Google’s research team introduced TurboQuant, an algorithm that addresses the memory overhead in vector quantization for AI inference[web:5]. As models grow in parameter size and context window length, the Key-Value (KV) cache becomes a massive bottleneck in data centre memory. TurboQuant allows for the quantization of the KV cache to just 3 bits with zero accuracy loss, reducing memory usage by at least six times and delivering up to an eight-fold speedup in attention logit computation, effectively removing one of the primary bottlenecks in long-context inference[web:5]. The implications are profound: inference costs for models like GPT-5.4 and Gemini 3.1 Pro could fall dramatically as this technology is adopted across the industry. Arista Networks has already raised its 2026 revenue outlook to $11.25 billion as firms rush to deploy high-density AI clusters no longer constrained by traditional memory pricing.
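To see why the KV cache becomes a bottleneck at long context, the sketch below estimates its size using the standard formula: two tensors (keys and values) per layer, each holding one vector per KV head per token. The model shape is hypothetical and not taken from any real model. The sketch does not implement TurboQuant; it only compares storage at different bit widths.

```python
def kv_cache_bytes(layers: int, kv_heads: int, head_dim: int,
                   seq_len: int, bits_per_value: int) -> float:
    """Approximate KV cache size for one sequence, ignoring any metadata overhead."""
    values = 2 * layers * kv_heads * head_dim * seq_len  # keys + values
    return values * bits_per_value / 8


# Hypothetical model shape, chosen only for illustration.
LAYERS, KV_HEADS, HEAD_DIM = 80, 8, 128
SEQ_LEN = 1_000_000  # a 1M-token context, as discussed in this article

fp16_bytes = kv_cache_bytes(LAYERS, KV_HEADS, HEAD_DIM, SEQ_LEN, bits_per_value=16)
q3_bytes = kv_cache_bytes(LAYERS, KV_HEADS, HEAD_DIM, SEQ_LEN, bits_per_value=3)
compression_ratio = fp16_bytes / q3_bytes

GIB = 2 ** 30
print(f"16-bit cache: {fp16_bytes / GIB:.1f} GiB")
print(f"3-bit cache:  {q3_bytes / GIB:.1f} GiB")
print(f"Raw bit-width ratio: {compression_ratio:.2f}x")
```

Against a 16-bit baseline, the raw bit-width ratio is about 5.3x; the “at least six times” figure quoted above will depend on the baseline precision and on implementation details this sketch does not model.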

The Custom Silicon Race

While NVIDIA continues to dominate AI compute orchestration globally, the competitive landscape is shifting. Google has partnered with Broadcom on custom TPU development. Anthropic has signed a major deal with CoreWeave for dedicated compute infrastructure. Intel has announced a collaboration with Google on next-generation AI accelerators. Meta has deployed its own custom AI chips (MTIA) across data centres, reducing dependence on NVIDIA[web:5].

Key Research Papers Driving Infrastructure Advances:

| Paper Title | Primary Contribution | Industry Impact |
|---|---|---|
| TurboQuant (Google, ICLR 2026) | 6x memory compression for KV cache | Up to 8x faster attention logit computation for long-context models |
| The AI Scientist-v2 (Sakana AI) | Fully automated hypothesis generation & paper writing | Accelerates drug discovery and materials science research |
| Beyond the Binary (Governance) | Tiered release framework for open-weight AI | Establishes safety protocols for model deployment |
| Aligned, Orthogonal or In-conflict | Safe optimization of Chain-of-Thought reasoning | Improves reliability of model “thinking” stages |

Section 6 — Physical AI: The Robotics Wave Enters Reality

The AI revolution is no longer confined to software. At the Hong Kong InnoEX 2026 fair (April 14–15, 2026), humanoid robots were shown boxing, performing musical routines, and executing rescue operations — footage that went globally viral within hours[web:55][web:61]. More than 100 robots were showcased across two exhibitions at the Hong Kong Convention and Exhibition Centre, including four of the world’s top five humanoid robot manufacturers: AgiBot, EngineAI, UBTECH, and Unitree[web:52].

The AgiBot X2 Ultra robot drew particular attention, impressing visitors with its language capabilities and physical dexterity[web:61]. This follows a year in which humanoid robots performed martial arts routines and comedy sketches on China’s 2026 Spring Festival Gala — reaching hundreds of millions of viewers on national television[web:58].

This wave aligns directly with NVIDIA CEO Jensen Huang’s GTC 2026 keynote, in which he outlined a comprehensive roadmap for “physical AI”: robots trained on synthetic data to operate in real-world environments at scale[web:53][web:56]. The keynote presented agentic AI, AI factories, open models, and physical AI as the full stack powering the next generation of intelligent systems.

Humanoid Robot Market Projections:

| Metric | 2023 | 2026 (projected) | 2030 (projected) |
|---|---|---|---|
| Global Market Value | ~$1 billion | ~$8–10 billion | ~$38 billion |
| Commercial Shipments | < 1,000 units | ~15,000–20,000 units | ~200,000+ units |
| Primary Use Cases | Research, demos | Warehouse, manufacturing | Multi-industry deployment |

Companies including Figure AI, Boston Dynamics, and Unitree are now shipping commercial-grade humanoid robots to warehouse and manufacturing clients. The global humanoid robot market, valued at under $1 billion in 2023, is projected to exceed $38 billion by 2030.
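The projection implies a steep compound growth rate, which a short calculation makes explicit. The sketch uses the table’s endpoints. Because the 2023 values are upper bounds (“under $1 billion”, “< 1,000 units”), the resulting rates should be read as approximate lower bounds.

```python
def cagr(start: float, end: float, years: int) -> float:
    """Compound annual growth rate between two values."""
    return (end / start) ** (1 / years) - 1


YEARS = 2030 - 2023

# Endpoints taken from the projections table.
market_cagr = cagr(1.0, 38.0, YEARS)          # market value, USD billions
shipments_cagr = cagr(1_000, 200_000, YEARS)  # commercial unit shipments

print(f"Implied market value CAGR: {market_cagr:.0%}")
print(f"Implied shipments CAGR: {shipments_cagr:.0%}")
```

On these endpoints, market value would need to grow by roughly two thirds every year for seven years, and shipments would need to more than double annually.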

Section 7 — AI in Science: When Machines Write Research

Perhaps the most philosophically disruptive development of the year: Sakana AI’s AI Scientist v2 autonomously generated research hypotheses, ran experiments, analyzed data, and wrote complete scientific papers, one of which was accepted by peer reviewers at an ICLR 2025 workshop[web:44]. A paper describing the system was published in Nature in March 2026, providing the first peer-reviewed account of how to build an AI scientist[web:44].

What AI Scientist v2 Accomplished:

| Capability | Description |
|---|---|
| Hypothesis Generation | Automatically proposes novel research questions |
| Experiment Execution | Runs code, trains models, collects results |
| Data Analysis | Interprets experimental outputs |
| Paper Writing | Generates complete manuscript including figures |
| Peer Review Submission | Submits to academic conferences |

It is important to note that the accepted paper was submitted to a workshop track at ICLR 2025 (ICBINB — “I Can’t Believe It’s Not Better”), which had an acceptance rate of approximately 32.6%[web:38]. One of three submitted papers was accepted, and it was voluntarily withdrawn per a prior agreement before final publication. While not a main-track acceptance, this nonetheless represents the first time an AI system has passed peer review on a research paper it authored autonomously.

Sakana AI co-founder David Ha acknowledged in the Nature paper that the AI-generated work is not yet at the same level as the best human-produced papers accepted by the same conference — but the trajectory is clear[web:44].

Separately, MIT researchers have developed AI models that use machine learning to uncover atomic defects in materials — a development that could improve heat transfer and energy-conversion efficiency in semiconductors, microelectronics, solar cells, and battery materials[web:2]. The model, trained on 2,000 different semiconductor materials, can detect up to six kinds of point defects simultaneously — something impossible using conventional techniques alone.

In a separate project, MIT engineers introduced VibeGen, a generative AI model that designs proteins based on their motion rather than just their static structure[web:10]. Published in the journal Matter in March 2026, VibeGen uses two cooperating agents: a “designer” that proposes candidate sequences and a “predictor” that evaluates whether they will actually move as intended. By specifying a vibrational fingerprint as the design input, VibeGen inverts the traditional logic of protein engineering: dynamics becomes the blueprint, and structure follows[web:13].

MIT AI Research Breakthroughs — April 2026:

| Breakthrough | Institution | Application | Impact |
|---|---|---|---|
| Atomic Defect Discovery AI | MIT Nuclear Science & Engineering | Materials science, semiconductors | Detects up to 6 defect types simultaneously at 0.2% concentration |
| VibeGen Protein Design | MIT Biological Engineering | Adaptive therapeutics, biomaterials | First AI to design proteins by motion, not just structure |
| AI MRI Analysis | MIT | Medical imaging | Named a 2026 breakthrough by MIT Technology Review |

Section 8 — Connectivity Breakthroughs: 360 Gbps Wireless Using Light

Researchers have developed a chip-scale optical wireless system that achieves data rates exceeding 360 gigabits per second while using approximately half the energy of traditional Wi-Fi[web:8][web:11]. The system uses a 5 x 5 array of vertical-cavity surface-emitting lasers (VCSELs), with each individual laser transmitting between 13 and 19 Gbps.
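A quick sanity check shows how the per-laser rates add up to the headline figure. The sketch assumes all 25 lasers transmit in parallel and that their rates simply add, which is a simplification; the numbers themselves come from the paragraph above.

```python
# Figures quoted in the text.
ARRAY_ROWS, ARRAY_COLS = 5, 5
PER_LASER_GBPS = (13, 19)   # min and max rate per VCSEL
REPORTED_TOTAL_GBPS = 360   # headline aggregate (reported as "exceeding" this)

laser_count = ARRAY_ROWS * ARRAY_COLS
aggregate_low_gbps = laser_count * PER_LASER_GBPS[0]
aggregate_high_gbps = laser_count * PER_LASER_GBPS[1]
average_per_laser_gbps = REPORTED_TOTAL_GBPS / laser_count

print(f"Lasers in array: {laser_count}")
print(f"Aggregate range: {aggregate_low_gbps} to {aggregate_high_gbps} Gbps")
print(f"Average per laser at {REPORTED_TOTAL_GBPS} Gbps: {average_per_laser_gbps} Gbps")
```

The reported aggregate falls inside the range implied by the per-laser rates, corresponding to an average of about 14.4 Gbps per laser.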

This breakthrough matters for AI infrastructure because data centre networking is one of the primary bottlenecks in scaling AI compute. Coherent has simultaneously announced 400 Gbps silicon photonics for faster cluster-level data transfer[web:5]. Together, these developments are removing two of the three traditional bottlenecks in AI infrastructure — memory (addressed by TurboQuant) and networking (addressed by optical wireless and silicon photonics) — leaving energy as the remaining constraint.

Section 9 — AI Governance & The Call for Regulation

As capabilities have surged, so too has the chorus calling for governance. Former leaders from Google and OpenAI are publicly warning about inequalities, job disruptions, and cybercrime risks enabled by advanced AI[web:8]. The debate has moved beyond abstract ethics into concrete policy proposals.

The 2nd Digiloong Cup Global AI Innovation Competition, organized by Century Huatong, represents an attempt to drive practical AI innovation while embedding compliance and transparency requirements into the competition framework[web:8]. Tech leaders across the industry have emphasized that regulatory measures are now an overdue priority — not an obstacle to innovation.

Key governance concerns in April 2026:

- Job displacement: Agentic AI is projected to automate 70% of knowledge worker tasks by 2028, raising questions about workforce transition and universal basic income

- Cybersecurity escalation: AI-powered attacks are compressing defender response times from hours to seconds

- Concentration of power: Four companies — OpenAI, Anthropic, xAI, and Google — now control the majority of frontier AI capability and the capital required to build it

- Energy inequality: The 9–18 GW U.S. power shortfall driven by AI data centres is diverting resources from civilian grid infrastructure

Section 10 — The “One-Hour Company”: How AI Is Rewriting the Startup Playbook

One of the most underreported shifts of 2026 is the emergence of what industry observers are calling the “One-Hour Company” — a business that can be conceived, built, launched, and generating revenue in the span of a single afternoon[web:11].

The old startup playbook required raising money to hire people to build software to solve a problem. In 2026, the sequence has collapsed. AI agents can now write code, design interfaces, deploy infrastructure, run marketing campaigns, and handle customer support — all autonomously. The competitive moat has shifted from “the ability to build” (now a commodity) to “unique distribution and judgment”[web:11].

This is the same structural shift from vertical SaaS to vertical AI described in Section 4: rather than selling tools that help humans work, founders sell the outcome of the labor itself, capturing portions of traditional labor budgets in industries like insurance, legal, logistics, and healthcare administration[web:11].

Section 11 — What the Data Actually Tells Us

Stepping back from individual announcements, the macro picture in April 2026 is one of accelerating concentration and capability.

The Funding Concentration:

- $297 billion deployed in Q1 2026 — a record that will likely not be matched for years

- 81% of that capital went to AI startups

- Four deals accounted for 65% of all capital deployed globally

- AI venture funding has grown from 55% of global VC in Q1 2025 to 81% in Q1 2026[web:47]

The Capability Leap:

- GPT-5.4 scores 83% on GDPval, matching or exceeding human professionals in 83% of comparisons across 44 occupations

- Gemini 3.1 Pro scores 77.1% on ARC-AGI-2 — the highest of any published model

- Claude Mythos 5, at 10 trillion parameters, represents a scale that no other model approaches

- Three models (GPT-5.4, Gemini 3.1 Pro, Claude Opus 4.6) all score within 4 points of each other on composite benchmarks — an unprecedented convergence at the frontier

The Infrastructure Strain:

- The U.S. faces a 9–18 GW power shortfall by 2027 from AI data centre construction alone

- Google’s TurboQuant reduces inference memory by 6x with zero accuracy loss

- Optical wireless achieves 360+ Gbps at half the energy of Wi-Fi

- Custom silicon from Meta, Google, and Intel is reducing NVIDIA dependence

The Enterprise Response:

- 79% of enterprises have adopted AI agents at some level

- 40% of enterprise applications will have embedded task-specific AI agents by end of 2026

- 100% of surveyed enterprises plan to expand agentic AI adoption in 2026

- Average organization has 31 workflows using agentic AI, expecting 33% growth in 2027

Key Takeaways

- GPT-5.4 is the most versatile publicly available model, scoring 83% on GDPval (matching or exceeding human professionals in 83% of comparisons) and ranking #2 overall out of 106 models on BenchLM. It leads across coding, computer use, reasoning, and knowledge work simultaneously.

- Claude Mythos 5 is the first 10-trillion-parameter model, but has been withheld from public release after triggering Anthropic’s ASL-4 safety protocol — the first time a major lab has built a model deemed too capable to deploy.

- Gemini 3.1 Pro leads on abstract reasoning (77.1% on ARC-AGI-2) and science (94.3% on GPQA Diamond), with a 1-million-token context window and native multimodal processing.

- SpaceX acquired xAI for $250 billion in the largest M&A deal in history, creating a $1.25 trillion vertically integrated entity with a potential $50 billion IPO planned for mid-2026.

- Q1 2026 saw a record $297 billion raised globally, with AI startups absorbing $242 billion (81%). Four deals (OpenAI $122B, Anthropic $30B, xAI $20B, Waymo $16B) exceeded all of 2024’s venture funding combined.

- Agentic AI has crossed into enterprise production, with 79% of organizations having adopted AI agents and 40% of enterprise applications projected to have embedded agents by end of 2026.

- Physical AI is arriving — humanoid robots were showcased boxing and performing at Hong Kong’s InnoEX 2026 fair, following Jensen Huang’s GTC 2026 “physical AI” roadmap.

- AI-authored research has passed peer review: Sakana AI’s AI Scientist v2 generated a paper accepted at an ICLR 2025 workshop, with the methodology published in Nature in March 2026.

- Infrastructure breakthroughs are removing bottlenecks — Google’s TurboQuant cuts inference memory by 6x, optical wireless achieves 360+ Gbps at half Wi-Fi energy, and custom silicon is reducing NVIDIA dependence.

- Governance is now the central debate — as capability outpaces regulation, former industry leaders are calling for concrete policy measures on job displacement, cybersecurity, and energy allocation.

Frequently Asked Questions

Q: What is the most powerful AI model available to the public in April 2026?

A: GPT-5.4 and Gemini 3.1 Pro are tied for #1 on the Artificial Analysis Intelligence Index (both scoring 57). GPT-5.4 leads on knowledge work (83% GDPval) and coding benchmarks, while Gemini 3.1 Pro leads on abstract reasoning (77.1% ARC-AGI-2) and science (94.3% GPQA Diamond). Claude Mythos 5, potentially the most capable model ever built, has been withheld from public release entirely.

Q: Why did SpaceX acquire xAI?

A: The $250 billion acquisition merges Elon Musk’s AI and space ventures into a vertically integrated company valued at $1.25 trillion. The deal combines AI, rockets, Starlink satellite internet, and X (Twitter) social media under one entity, positioning for a potential $50 billion IPO as early as mid-2026.

Q: What is agentic AI and why does it matter?

A: Agentic AI refers to systems that autonomously plan, act, and learn toward goals without step-by-step human prompting. Unlike chatbots that respond to queries, agents complete multi-step tasks — browsing the web, writing code, filling forms, and triggering API calls independently. In 2026, 79% of enterprises have adopted AI agents, and 100% of surveyed organizations plan to expand adoption this year.

Q: What is GDPval and what does an 83% score mean?

A: GDPval is a benchmark developed by OpenAI that tests AI performance on real-world, economically valuable tasks across 44 occupations in the top 9 GDP-contributing industries. An 83% score means the model matched or exceeded human industry professionals in 83% of comparisons — translating to approximately 4 hours and 38 minutes saved per 7-hour task.

Q: Why was Claude Mythos 5 not released?

A: Anthropic determined that internal testing of Claude Mythos 5 triggered its ASL-4 safety protocol — the highest risk tier, reserved for models approaching genuinely dangerous capability thresholds. This is the first time a major AI lab has completed a frontier model and withheld it from public release on safety grounds.

Q: How much AI funding was raised in Q1 2026?

A: Global venture funding hit a record $297 billion in Q1 2026, with AI startups absorbing $242 billion — 81% of all capital deployed globally. Four deals alone (OpenAI $122B, Anthropic $30B, xAI $20B, Waymo $16B) totaled $188 billion, exceeding all of 2024’s global venture funding.

Q: Is AI causing a power grid crisis?

A: Yes, according to current projections. The U.S. faces a projected 9–18 gigawatt electricity shortfall by 2027 as AI data centre construction outpaces the grid. This has prompted major tech companies to pursue off-grid nuclear and gas power strategies, including Microsoft reopening Three Mile Island and Amazon pursuing dedicated nuclear sites.

Q: What happened at the Hong Kong AI & Robotics Fair 2026?

A: The InnoEX 2026 fair (April 14–15) showcased over 100 robots, including four of the world’s top five humanoid robot manufacturers. Robots were shown boxing, performing musical routines, and executing rescue operations — footage that went globally viral. The AgiBot X2 Ultra robot drew particular attention for its language capabilities and physical dexterity.

Q: Has an AI ever written a research paper that passed peer review?

A: Yes. Sakana AI’s AI Scientist v2 autonomously generated a research paper that was accepted by peer reviewers at the ICLR 2025 workshop track. The methodology was subsequently published in Nature in March 2026. While the accepted paper was from a workshop (not main) track and was voluntarily withdrawn before final publication, it remains the first AI-authored paper to pass peer review.

Q: What is Google’s TurboQuant and why does it matter?

A: TurboQuant is an algorithm introduced at ICLR 2026 that quantizes the Key-Value (KV) cache to just 3 bits with zero accuracy loss — reducing memory usage by at least 6x and delivering up to 8x speedup in attention computation. It addresses one of the primary bottlenecks in long-context AI inference, potentially reducing inference costs dramatically.

Conclusion

April 2026 represents a genuine inflection point: not a marketing claim, but a data-backed reality. The combination of record capital deployment ($297 billion in one quarter), models that match or exceed human professionals on most tasks sampled from 44 occupations, the largest M&A deal in history (a $250 billion acquisition creating a $1.25 trillion entity), and the first AI-authored paper to pass peer review marks a qualitative shift in the trajectory of artificial intelligence.

The questions for April 2026 are no longer whether AI will transform industries, but how quickly, at what cost, and with what guardrails. The concentration of capital and capability in the hands of four companies — OpenAI, Anthropic, xAI, and Google — raises questions about competition, governance, and the distribution of AI’s benefits. The energy infrastructure strain, the cybersecurity escalation, and the workforce displacement all demand policy responses that have not yet materialized at scale.

For businesses, investors, and individuals, the imperative is clear: understand the capabilities that exist today, assess the trajectory over the next 12 months, and position accordingly. The AI arms race is no longer a future scenario — it is the present reality, and April 2026 is the month it became undeniable.
