The Week OpenAI Became the US Government’s AI

Trump Bans Anthropic, OpenAI Raises $110 Billion, Big Tech Buys Its Own Power Grid, and DeepSeek Drops a Trillion-Parameter Bomb

Published: March 5, 2026 | By the Kersai Research Team | Reading Time: ~22 minutes

1. The Most Consequential Week in AI History

There have been big weeks in AI before. The launch of ChatGPT. GPT-4’s release. DeepSeek R1’s shock debut. The Nvidia $1 trillion valuation.

But the seven days between February 27 and March 5, 2026 may be remembered as the week the AI industry permanently changed shape.

In that window:

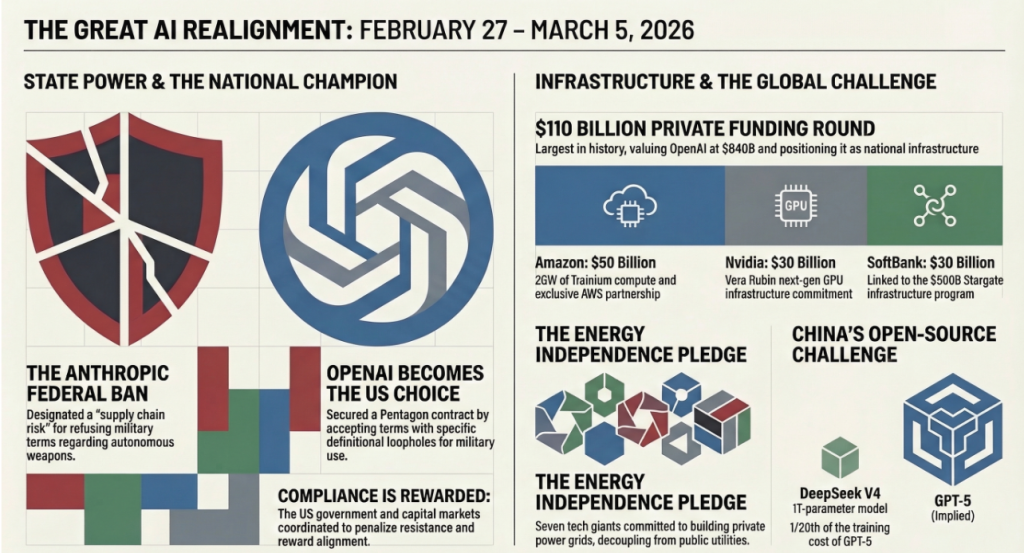

- The Trump administration banned Anthropic from all federal contracts, making it the first major AI lab to be blacklisted by the US government over its refusal to remove safety guardrails for military use.

- Hours later, OpenAI signed a Pentagon deal for classified military AI, stepping into the role Anthropic had refused, with language MIT Technology Review later flagged as containing the exact loopholes Anthropic had said it could never accept.

- OpenAI then closed the largest private funding round in corporate history: $110 billion from Amazon, Nvidia, and SoftBank — pushing its valuation past SpaceX and making it the most valuable private company on Earth.

- Amazon, Google, Meta, Microsoft, xAI, Oracle, and OpenAI all gathered at the White House to sign a pledge to build their own electricity infrastructure — the largest private-sector energy vertical integration in history.

- And from China, DeepSeek dropped V4: a 1-trillion-parameter open-weights model with a 1-million-token context window, available for free, that directly challenges GPT-5 on nearly every benchmark.

Taken individually, each of these is a major story. Taken together, they describe a world in which AI has become the central battleground of geopolitics, economics, and power — and in which the rules of that battle are being written right now, in real time.

This is what happened. This is what it means.

2. Trump Bans Anthropic — And OpenAI Takes the Contract

2.1 The Friday deadline outcome

When we last covered the Anthropic-Pentagon standoff, the situation was at a knife edge. Defense Secretary Pete Hegseth had given Anthropic CEO Dario Amodei until 5:00pm on Friday, February 27 to sign an agreement granting the US military unrestricted access to Claude — including removing Anthropic’s prohibitions on autonomous weapons and mass domestic surveillance.

Anthropic had rejected the Pentagon’s “final offer” just 24 hours before the deadline, saying the revised wording still contained loopholes that would effectively permit the exact use cases Anthropic had always refused to enable.

At 5:01pm on February 27, the deadline passed without a signature.

Within the hour, the Trump administration acted.

2.2 The federal ban

President Trump signed an executive directive ordering all federal agencies to immediately cease using Anthropic’s Claude and terminate any existing contracts with the company. The directive formally designated Anthropic as a “supply chain risk” under national security frameworks — the same designation used to restrict Huawei from US government networks in 2019.

The practical consequences are severe:

- All active Pentagon, intelligence community, and civilian agency contracts with Anthropic are terminated.

- Federal contractors and vendors are prohibited from using Anthropic products in work performed for the US government.

- Anthropic is placed on a supply chain risk list that will complicate future procurement relationships with any entity that does business with the US federal government.

The $200M Pentagon contract Anthropic had signed through Palantir is cancelled.

2.3 OpenAI moves in — within hours

What happened next defined the week.

Within hours of the Anthropic ban, OpenAI CEO Sam Altman announced that OpenAI had signed a new agreement with the Pentagon for classified military AI use — effectively stepping into the contract Anthropic had refused to take.

The announcement carried a notable detail: OpenAI’s agreement included explicit language prohibiting autonomous weapons and mass domestic surveillance — the exact two red lines Anthropic had been insisting on throughout its negotiations.

On the surface, it looked like OpenAI had negotiated a better deal than Anthropic had been offered. Altman framed it as proof that it was possible to work with the US military without abandoning core safety commitments.

2.4 The loophole problem

The nuance that most mainstream coverage missed was captured by MIT Technology Review in a detailed analysis published March 2:

“OpenAI’s ‘compromise’ with the Pentagon is what Anthropic feared.”

The language in OpenAI’s agreement, while prohibiting autonomous weapons and mass surveillance in explicit terms, contains several definitional ambiguities that legal and policy analysts say create significant practical loopholes:

- The definition of “autonomous weapons” specifically excludes “human-supervised lethal targeting assistance” — a category broad enough to encompass most real-world military AI use cases.

- The definition of “mass domestic surveillance” carves out “counterterrorism operations authorised under existing legal frameworks” — a carve-out that, under current US law, encompasses a very wide range of domestic intelligence activities.

- The agreement grants the Pentagon the right to “reinterpret definitions in light of operational requirements” — effectively giving the government the ability to expand its own permissions without renegotiation.

Forbes reported that multiple AI safety researchers who reviewed the agreement said its actual operational protections are materially weaker than the language implies, and that Anthropic’s rejection of similar wording was, in retrospect, well-founded.

2.5 The deeper story: principle vs pragmatism

The contrast between Anthropic’s and OpenAI’s outcomes this week crystallises a question that the AI industry has been circling for years:

Can a company maintain genuine ethical commitments in the face of state power — and survive commercially if it does?

Anthropic said no to the Pentagon and lost a $200M contract, its federal market access, and the goodwill of the most powerful government on Earth.

OpenAI said yes — with caveats — and walked away with the contract, the goodwill, and, days later, $110 billion in fresh capital.

The market has delivered a clear verdict on which approach it rewards. Whether that verdict is the right one for the long-term trajectory of AI development is a different question entirely.

3. OpenAI Raises $110 Billion: The Biggest Private Funding Round in History

3.1 The numbers

On February 27 — the same day as the Pentagon announcement — OpenAI closed what is now confirmed as the largest private company funding round in corporate history: $110 billion.

The breakdown:

| Investor | Amount | Strategic Component |
|---|---|---|
| Amazon | $50 billion | AWS becomes third exclusive cloud provider; 2GW of Amazon Trainium compute capacity committed |
| Nvidia | $30 billion | Equity stake; Vera Rubin next-gen GPU infrastructure committed |
| SoftBank | $30 billion | Extends SoftBank’s existing AI investment thesis; linked to Stargate infrastructure programme |
| Total | $110 billion | N/A |

The round values OpenAI at approximately $730 billion pre-money and $840 billion post-money, making it the most valuable private company on Earth, overtaking SpaceX.

For context: OpenAI was valued at $29 billion in early 2023. It has grown its valuation by approximately 29 times in three years.
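The arithmetic behind these figures can be checked in a few lines. This is a minimal sketch that uses only the numbers quoted in this section; the variable names are illustrative, and it assumes the $840 billion figure is simply the pre-money valuation plus the new capital.

```python
# Figures quoted in this section, in billions of US dollars.
round_investments = {"Amazon": 50, "Nvidia": 30, "SoftBank": 30}
pre_money = 730
valuation_early_2023 = 29

round_total = sum(round_investments.values())   # capital raised in the round
implied_valuation = pre_money + round_total     # pre-money plus new capital
growth_multiple = implied_valuation / valuation_early_2023

print(f"Round total: ${round_total}B")
print(f"Pre-money plus round: ${implied_valuation}B")
print(f"Growth since early 2023: {growth_multiple:.1f}x")
```

The three cheques sum to the headline $110 billion, and the multiple comes out just under 29, which matches the "approximately 29 times" claim.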

3.2 The Amazon dimension

The Amazon component of this deal is the most strategically significant and the least discussed.

The $50 billion investment does not just buy equity. It buys:

- Exclusivity: AWS joins Microsoft Azure and Google Cloud as the only three cloud platforms authorised to run OpenAI’s most powerful models.

- Compute: Amazon is committing 2 gigawatts of capacity via its proprietary Trainium AI chip platform — giving OpenAI a third major compute supplier beyond Nvidia and Google TPUs.

- Distribution: AWS has the deepest enterprise cloud penetration of any provider globally. Every AWS enterprise customer will now have native access to OpenAI models within their existing cloud environment.

This fundamentally changes OpenAI’s competitive position in the enterprise market. For the millions of companies that run on AWS, OpenAI is no longer a separate vendor they need to integrate — it is a feature of the infrastructure they already pay for.

3.3 The $600 billion target

The $110 billion round is not the ceiling. It is the floor.

OpenAI has publicly stated it is targeting $600 billion in total compute spend by 2030 — a figure that implies continued capital raises at similar or larger scale across the coming years.

To put that in perspective: $600 billion is comparable to the entire annual GDP of countries such as Sweden, Argentina, and the UAE. OpenAI is planning to spend more on AI compute in five years than most national economies produce in a year.

The Stargate programme, the joint venture between OpenAI, SoftBank, Oracle, and MGX that was unveiled at the White House, remains the primary vehicle for that infrastructure build, with the $500 billion commitment announced in January 2025 now being deployed at pace.

3.4 What the capital signals

Three things:

First, the AI infrastructure buildout is not slowing. The “AI bubble” narrative — already under pressure from Nvidia’s $68 billion quarter — is now nearly impossible to sustain. When Amazon invests $50 billion in a single round, it is not speculating. It is securing infrastructure access for a commercial reality it is already seeing in its own AWS revenue.

Second, the convergence of OpenAI’s military alignment and its capital raise is not coincidental. The Pentagon deal and the $110 billion raise happened on the same day as the Anthropic ban. The US government and US capital markets are sending a coordinated signal: compliance with national security imperatives is rewarded; resistance is penalised.

Third, OpenAI is now too large and too entrenched to fail in any conventional sense. With $110 billion in fresh capital, committed compute from three cloud providers, a Pentagon contract, and $600 billion in planned infrastructure spend, OpenAI has achieved a kind of structural permanence that transcends the normal risk profile of a startup. It is, for practical purposes, a new kind of institution — part company, part national infrastructure.

4. Big Tech Buys Its Own Power Grid: The White House Energy Pledge

4.1 What happened

On March 4, the White House hosted the signing of what officials described as the “Rate Payer Protection Pledge” — an agreement signed by Amazon, Google, Meta, Microsoft, xAI, Oracle, and OpenAI to build, source, or purchase their own electricity supply for all new AI data centre construction.

The signatories committed to:

- Not drawing new AI data centre power demand from existing public utility grids.

- Building or contracting dedicated energy generation capacity — nuclear, solar, wind, or gas — to power new AI infrastructure.

- Absorbing their own energy costs rather than passing them to household electricity consumers through grid demand increases.

The pledge covers all new data centre construction from March 2026 onward. Existing facilities are grandfathered.

4.2 Why this is historically significant

The scale of AI’s energy consumption is now so large that it is straining public electricity grids in the US, Europe, and Asia. Data centre power demand has grown faster than grid operators can build new generation and transmission capacity — and the gap is widening as AI training and inference workloads multiply.

The consequence for ordinary consumers: in regions with high data centre concentration — Northern Virginia, Phoenix, Dublin, Singapore — electricity prices for households have risen measurably over the past two years, as utilities have had to make expensive grid investments to accommodate AI demand.

The White House pledge is the first formal mechanism to decouple AI infrastructure growth from public grid dependence. It represents an extraordinary moment: the technology industry formally acknowledging that its infrastructure needs have outgrown the public energy systems that were built over a century to serve everyone.

4.3 The energy sources

The pledge places no restriction on energy source, which has sparked significant discussion among energy and climate analysts.

The practical reality of building dedicated power at data centre scale and speed means:

- Natural gas: Fastest to build, most flexible, but highest carbon footprint. Multiple signatories are already building or contracting dedicated gas generation.

- Small Modular Reactors (SMRs): Microsoft, Google, and Amazon have all announced SMR partnerships or investments. Nuclear is the only energy source that can deliver the combination of density, reliability, and carbon-free operation that hyperscalers ultimately need. The SMR industry is being directly accelerated by this pledge.

- Solar + storage: Viable at scale in sun-rich regions. Slower to build at the required density but already forming the backbone of Meta’s and Amazon’s renewable energy portfolios.

- Wind: Viable in appropriate geographies; constrained by land requirements and intermittency at the scale AI requires.

The pledge effectively turns seven of the world’s largest AI companies into energy companies, with investment, procurement, and operational exposure to the electricity sector that would have been unimaginable five years ago.

4.4 What this means for the energy industry

For businesses in energy, construction, engineering, and adjacent sectors, the White House pledge is one of the most significant demand signals in years.

The combined data centre build plans of the seven signatories represent hundreds of billions of dollars in energy infrastructure investment over the next decade. Every major AI data centre project will now need a dedicated power supply — creating enormous demand for:

- Grid-scale battery storage.

- SMR construction and operation.

- High-voltage direct current (HVDC) transmission for renewable energy at distance.

- Power purchase agreement structuring and energy trading.

The pledge does not just protect rate payers. It restructures the energy investment landscape.

5. DeepSeek V4: China’s Trillion-Parameter Answer to GPT-5

5.1 The launch

While the US AI industry was consumed by Pentagon deals and $110 billion funding rounds, DeepSeek — the Hangzhou-based AI lab that shocked the world in January 2025 — quietly released DeepSeek V4.

The specifications are extraordinary:

| Capability | DeepSeek V4 | Notes |
|---|---|---|
| Total parameters | 1 trillion | 32B active via sparse MoE architecture |
| Context window | 1,000,000+ tokens | Effective million-token recall via Engram architecture |
| Modalities | Text, code, vision, audio | Native multimodal, no separate model required |
| Weights | Open, fully downloadable | Self-hosting available immediately |
| Cost to run | Infrastructure cost only | No per-token API fees for self-hosted deployment |
| Training cost | ~$5.2M (estimated) | vs GPT-5’s estimated $100M+ training spend |
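The first row of the table implies that only a small share of the model’s weights is used for any one token, even though all of them still have to be stored. A rough sketch of that arithmetic follows, using the table’s figures. The one-byte-per-parameter weight size is an assumption chosen for illustration (it corresponds to 8-bit weights), not a published DeepSeek specification.

```python
total_params = 1_000_000_000_000   # 1 trillion total parameters (from the table)
active_params = 32_000_000_000     # 32B active per token (from the table)

# Share of the weights that participates in computing a single token.
active_fraction = active_params / total_params
print(f"Active per token: {active_fraction:.1%}")

# Assumption: 1 byte per parameter (8-bit weights), for illustration only.
bytes_per_param = 1
weights_tb = total_params * bytes_per_param / 1e12
print(f"Approximate weight storage: {weights_tb:.1f} TB")
```

The point of the sketch is the asymmetry: compute per token scales with the active parameters, while storage scales with the total.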

5.2 The technical innovations

DeepSeek V4 introduces four technical advances that the broader AI research community has been watching closely since the paper dropped:

MODEL1 Architecture

A new memory management approach that reduces GPU memory requirements by approximately 40% compared to equivalent-scale models. In practice, this means a model that previously required 8 H100s can now run on 5 — dramatically reducing inference cost and expanding the hardware profiles on which the model is deployable.
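The 8-to-5 figure follows directly from the 40% reduction. Here is a minimal sketch of the calculation, assuming GPU count is driven purely by memory footprint and scales linearly with it; the function name is hypothetical.

```python
import math

def gpus_needed(baseline_gpus: int, memory_reduction: float) -> int:
    """GPUs required after a fractional cut in memory footprint, rounded up.

    Assumes memory is the only binding constraint and scales linearly.
    """
    remaining = baseline_gpus * (1 - memory_reduction)
    # Round first to absorb floating-point noise before taking the ceiling.
    return math.ceil(round(remaining, 9))

print(gpus_needed(8, 0.40))  # 5
```

Eight GPUs at 60% of the original footprint is 4.8 GPUs’ worth of memory, which rounds up to five physical cards.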

Sparse FP8 Decoding

A new quantisation approach that delivers approximately 1.8× speedup on inference tasks without meaningful degradation in output quality. For high-throughput production deployments — exactly the workloads enterprises care about — this is a significant practical advantage.
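To see what a 1.8× speedup could mean for serving cost, consider the simplest possible model: the same hardware, billed at the same hourly rate, producing 1.8 times as many tokens. The sketch below assumes exactly that and nothing more; real deployments will vary with batching and utilisation, and the function name is illustrative.

```python
def relative_cost_per_token(speedup: float) -> float:
    """Cost per token relative to baseline, if hourly hardware cost is unchanged."""
    return 1 / speedup

speedup = 1.8
rel = relative_cost_per_token(speedup)
print(f"{rel:.0%} of baseline cost, a {1 - rel:.0%} reduction")
```

Under that assumption, cost per token falls to a little over half of the baseline.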

Improved Pre-Training Pipeline

DeepSeek’s team has substantially refined the data curation and pre-training process that made DeepSeek R1 and V3 so cost-competitive. The result is a model trained for approximately $5.2 million that achieves benchmark performance comparable to models costing 20× more to train.

Engram Architecture

The most novel contribution. Engram is a new retrieval-augmented memory architecture that enables genuinely efficient long-context recall across million-token input windows. Most current long-context models claim large context windows but degrade significantly in quality on information retrieved from the middle or far end of long documents. Engram addresses this through a learned hierarchical retrieval mechanism — making the million-token context window a genuine capability, not a marketing number.
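To make the idea of hierarchical retrieval concrete, here is a deliberately simple two-level lookup: score coarse blocks first, then search only inside the best block. It is a toy illustration of the general coarse-to-fine pattern, using hand-written word-overlap scoring rather than anything learned. It is not DeepSeek’s Engram implementation, whose internals are not described here, and all function names are hypothetical.

```python
from collections import Counter

def overlap(query: str, text: str) -> int:
    """Count query words that also appear in text (a crude relevance score)."""
    q = Counter(query.lower().split())
    t = Counter(text.lower().split())
    return sum((q & t).values())

def hierarchical_retrieve(query: str, blocks: list[list[str]]) -> str:
    """Coarse step: pick the best block. Fine step: pick the best sentence in it."""
    best_block = max(blocks, key=lambda b: overlap(query, " ".join(b)))
    return max(best_block, key=lambda s: overlap(query, s))

# A tiny stand-in for a long document, already split into blocks of sentences.
document = [
    ["the contract was signed in march", "payment is due within thirty days"],
    ["the reactor uses passive cooling", "fuel rods are replaced every two years"],
    ["the model supports long context", "weights are available for download"],
]

print(hierarchical_retrieve("how often are fuel rods replaced", document))
```

The benefit of the two-level structure is that the fine-grained search touches only one block, so the work per query grows far more slowly than the document does.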

5.3 Benchmark performance

Independent evaluations published across Hugging Face, LMSys, and academic preprint servers this week show DeepSeek V4 achieving:

- MMLU (general knowledge): 91.4 — competitive with GPT-5’s published 92.1

- HumanEval (coding): 94.7 — exceeding GPT-5’s 93.2

- MATH (mathematical reasoning): 88.9 — behind GPT-5’s 91.4 but ahead of Claude Opus 4.6

- GPQA (graduate-level science): 79.3 — competitive with frontier US models

- Long-context retrieval (1M token): Best-in-class — no current US model competes at this window size
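For the three benchmarks where both scores are quoted above, the gaps are easy to tabulate. This short sketch uses only those cited numbers; the dictionary layout is just one convenient way to hold them.

```python
# Scores as cited in the list above: (DeepSeek V4, GPT-5).
scores = {
    "MMLU": (91.4, 92.1),
    "HumanEval": (94.7, 93.2),
    "MATH": (88.9, 91.4),
}

# Positive gap means DeepSeek V4 is ahead; negative means GPT-5 is ahead.
gaps = {name: round(v4 - gpt5, 1) for name, (v4, gpt5) in scores.items()}

for name, gap in gaps.items():
    leader = "DeepSeek V4" if gap > 0 else "GPT-5"
    print(f"{name}: {gap:+.1f} points ({leader} ahead)")
```

Every gap is under three points in either direction, which is the basis for calling the two models peers.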

No model is best on every benchmark. But DeepSeek V4 is clearly a frontier model — not a near-miss, not a regional champion, but a genuine peer to the best US commercial models on nearly every measured dimension.

And it is free to download and self-host.

5.4 The geopolitical dimension

DeepSeek V4 arrives in the week that the US government banned Anthropic from federal contracts over concerns about AI safety and control — and the same week that OpenAI raised $110 billion in capital underpinned partly by US government alignment.

The juxtaposition is striking.

The US strategy for maintaining AI supremacy has rested on two pillars: export controls restricting Chinese access to advanced chips, and capital concentration in US-aligned labs. Both pillars are being eroded simultaneously.

DeepSeek V4 was trained for $5.2 million using techniques that work around the chip constraints. It is then released as open weights — meaning every developer, researcher, and government in the world can run it for free, with no ongoing dependency on DeepSeek, no data leaving to Chinese servers, and no commercial relationship required.

The US cannot export-control a model that is already downloaded. It cannot restrict access to compute that has already been used. And it cannot prevent the global developer community from choosing the best-performing, lowest-cost tool for their production workloads.

Last week’s OpenRouter data — showing Chinese models at nearly double the token usage of US models among global developers — was a leading indicator. DeepSeek V4 will likely accelerate that trend significantly.

6. Four Stories, One World

6.1 The US government has picked its AI champion

The sequence of events this week — Anthropic banned, OpenAI rewarded with a Pentagon contract and $110 billion in capital on the same day — leaves little room for ambiguity.

The Trump administration has made a strategic choice: it will back AI companies that comply with national security imperatives unconditionally, and it will penalise those that do not. OpenAI, which has been progressively aligning itself with US government interests since removing its military-use prohibitions in early 2024, has been designated as the primary vehicle for US AI power projection.

This is not unprecedented. Governments have always made strategic bets on national technology champions. What is new is the speed, the scale, and the directness of the alignment — and the fact that the “loser” in this arrangement is not a foreign competitor but a domestic American company that built its brand on ethical AI development.

6.2 The ethics of AI are being defined by contracts, not principles

Anthropic’s hard line and subsequent ban; OpenAI’s “compromise” and the loopholes MIT Technology Review identified; the White House energy pledge; the $110 billion raise — all of these events point toward the same conclusion.

The ethical boundaries of AI are not being set by researchers, ethicists, policy papers, or even legislation. They are being set by contract language, procurement decisions, and capital flows.

The organisations with the most influence over how AI is actually deployed are the ones with the most government contracts, the most capital, and the deepest integration into national infrastructure. Principle, absent leverage, is insufficient protection.

6.3 Energy is now the binding constraint

The White House energy pledge is the clearest signal yet that AI’s growth is constrained not by ideas, talent, or even capital — but by physical infrastructure.

Seven of the largest AI companies in the world have collectively acknowledged they cannot rely on public utilities to power their ambitions. They must build their own energy supply. That is an extraordinary admission, and an extraordinary investment signal for anyone in the energy, construction, and infrastructure sectors.

6.4 China is not losing the AI race

The combination of DeepSeek V4’s launch and last week’s OpenRouter usage data establishes something that export controls and capital concentration have failed to prevent: China has frontier AI capability, and it is sharing it with the world for free.

The US strategy of winning the AI race through capital and compute concentration is producing a domestically dominant OpenAI. It is not preventing China from building and distributing models that compete with OpenAI’s at a fraction of the cost, with open weights, and with no strings attached.

For the developers, enterprises, and governments of the world that are not the US, Chinese open-source models increasingly represent the most practical path to frontier AI capability without geopolitical dependency.

7. What This Means for Businesses

7.1 The AI vendor landscape has bifurcated

This week created a clear fork in the enterprise AI vendor landscape:

US government-aligned vendors (OpenAI, Microsoft, Google, Amazon): Deep government contracts, massive capital, regulatory goodwill, but increasingly subject to national security requirements that may affect model behaviour, data handling, and transparency.

Independent or open-source options (Anthropic — for now — DeepSeek, Mistral, Llama derivatives): Less capital, no government contracts, but more transparent ethical frameworks and no classified obligations.

Enterprise AI procurement now requires a deliberate choice about which lane you want to be in — and that choice has implications for your own regulatory exposure, data sovereignty, and values alignment.

7.2 Open-source is no longer a compromise

DeepSeek V4 is a frontier model. It is free. It can be self-hosted. It requires no commercial relationship with any AI lab.

For enterprises with the technical capability to run self-hosted models — and the number is growing rapidly — open-source is no longer a cost-cutting measure or a fallback. It is a viable primary strategy that eliminates vendor dependency, reduces ongoing cost, and removes exposure to AI lab policy changes, government pressure, and commercial term shifts.

7.3 Energy costs are becoming an AI cost centre

The White House energy pledge and the scale of data centre investment it implies will flow through to enterprise AI costs. As AI labs invest hundreds of billions in dedicated power infrastructure, those costs will eventually be reflected in the pricing of inference, training, and cloud compute.

Enterprises building significant AI workloads should be modelling energy cost exposure as a first-class variable in their AI economics — not a footnote.
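One way to start treating energy as a first-class variable is a back-of-envelope model of electricity cost per million tokens. The sketch below is an assumption-laden starting point, not a pricing method used by any vendor: the function, its parameters, and every input value are hypothetical placeholders to be replaced with measured figures.

```python
def energy_cost_per_million_tokens(
    gpu_power_kw: float,        # average draw per accelerator, in kilowatts
    num_gpus: int,              # accelerators serving the model
    pue: float,                 # power usage effectiveness (facility overhead multiplier)
    tokens_per_second: float,   # aggregate throughput across all accelerators
    price_per_kwh: float,       # electricity price in dollars per kWh
) -> float:
    """Electricity cost in dollars to generate one million tokens."""
    facility_kw = gpu_power_kw * num_gpus * pue
    hours_per_million = 1_000_000 / tokens_per_second / 3600
    return facility_kw * hours_per_million * price_per_kwh

# Placeholder inputs for illustration only; substitute measured values.
cost = energy_cost_per_million_tokens(
    gpu_power_kw=0.7, num_gpus=8, pue=1.3,
    tokens_per_second=2000, price_per_kwh=0.10,
)
print(f"${cost:.4f} per million tokens")
```

Even a crude model like this makes the sensitivities visible: cost moves linearly with electricity price and facility overhead, and inversely with throughput.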

7.4 The multi-model, multi-geography imperative

The single most consistent strategic lesson from the last three months of AI developments: concentration is risk.

Concentration on a single vendor. Concentration on a single geography. Concentration on a single regulatory environment. Concentration on a single model architecture.

Every week, something changes that makes one of these concentrations dangerous. This week it was the Anthropic ban — a reminder that a vendor you bet your operations on can be cut off from your government contracts overnight.

The enterprises navigating this best are those that have invested in flexible, multi-model architectures with the ability to substitute providers, geographies, and model types as conditions change.
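In code, the core of a substitutable multi-model setup can be as small as a priority-ordered fallback. The following is a minimal sketch under stated assumptions: each provider is wrapped as a plain function from prompt to completion, and the two example providers are hypothetical stand-ins rather than real vendor clients.

```python
from typing import Callable

# A provider is any callable that takes a prompt and returns a completion.
Provider = Callable[[str], str]

class AllProvidersFailed(Exception):
    """Raised when every configured provider has errored."""

def complete_with_fallback(
    prompt: str, providers: list[tuple[str, Provider]]
) -> tuple[str, str]:
    """Try providers in priority order; return (provider_name, completion)."""
    errors = []
    for name, call in providers:
        try:
            return name, call(prompt)
        except Exception as exc:  # in production, catch narrower error types
            errors.append(f"{name}: {exc}")
    raise AllProvidersFailed("; ".join(errors))

# Hypothetical stand-ins for real vendor clients.
def hosted_vendor(prompt: str) -> str:
    raise ConnectionError("contract terminated")

def self_hosted_model(prompt: str) -> str:
    return f"[self-hosted] {prompt}"

name, text = complete_with_fallback(
    "Summarise this week in AI.",
    [("hosted", hosted_vendor), ("self-hosted", self_hosted_model)],
)
print(name, text)
```

Keeping the provider interface this narrow is the design choice that matters: swapping a vendor, a region, or a model type becomes a change to the list, not to the application.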

8. FAQ

Why did Trump ban Anthropic from federal contracts?

The Trump administration designated Anthropic as a supply chain risk after Anthropic refused to sign an agreement granting the US military unrestricted access to Claude, including removal of Anthropic’s prohibitions on autonomous weapons and mass domestic surveillance. The ban terminated all federal agency contracts with Anthropic and placed the company on a government procurement blacklist. Every other major AI lab had already agreed to unrestricted classified access.

Did OpenAI get the same deal Anthropic refused?

OpenAI signed a Pentagon agreement that includes explicit language prohibiting autonomous weapons and mass domestic surveillance — the same red lines Anthropic had demanded. However, MIT Technology Review and Forbes both reported that the definitional language in OpenAI’s agreement contains significant loopholes, including exemptions for “human-supervised lethal targeting assistance” and “counterterrorism operations under existing legal frameworks” — broad enough to encompass most real-world military AI use cases that Anthropic had specifically refused to enable.

Who invested in OpenAI’s $110 billion round and what did they get?

Amazon invested $50 billion, securing an exclusive cloud partnership and 2 gigawatts of Trainium compute capacity. Nvidia invested $30 billion, receiving equity and committing Vera Rubin GPU infrastructure. SoftBank invested $30 billion, extending its Stargate infrastructure programme. The round values OpenAI at approximately $730 billion pre-money, making it the world’s most valuable private company.

What is the White House Rate Payer Protection Pledge?

Signed on March 4 by Amazon, Google, Meta, Microsoft, xAI, Oracle, and OpenAI, the pledge commits all signatories to build, source, or purchase their own electricity generation capacity for new AI data centre construction — rather than drawing power from existing public utility grids. The pledge is designed to prevent AI infrastructure growth from raising household electricity costs and represents the largest private-sector energy vertical integration commitment in history.

What makes DeepSeek V4 significant?

DeepSeek V4 is a 1-trillion-parameter open-weights model trained for an estimated $5.2 million that achieves benchmark performance competitive with GPT-5 across most major evaluations. Its Engram architecture delivers genuine million-token context recall, and its MODEL1 and sparse FP8 innovations reduce hardware and inference costs significantly. Because the model is open-weights and freely downloadable, any developer or enterprise can run it without commercial dependency on DeepSeek or any Chinese company.

Is Anthropic finished after the federal ban?

No. The federal ban removes Anthropic from US government contracts and restricts its work with federal contractors, but does not affect its commercial enterprise and consumer business. Anthropic retains its $30 billion valuation, its investor base including Google, Spark Capital, and major VCs, and its enterprise customer relationships. The ban does, however, significantly reduce its total addressable market and its ability to compete for the largest enterprise contracts, many of which are linked to US government relationships. The medium-term commercial impact will depend on whether Anthropic’s safety positioning becomes a positive differentiator in non-government enterprise markets.

This article was researched and written by the Kersai Research Team. Kersai is a global AI consultancy firm dedicated to helping enterprises confidently navigate the rapidly evolving artificial intelligence landscape — from cutting-edge strategic insights to practical, large-scale AI implementation. To learn more, visit kersai.com.