The Week AI Lost Its Innocence

How Anthropic Dropped Its Safety Promise, the Pentagon Issued a War Ultimatum, and Nvidia Proved the AI Boom Is Just Getting Started

Published: February 26, 2026 | By the Kersai Research Team | Reading Time: ~20 minutes

1. Three Stories. One Turning Point.

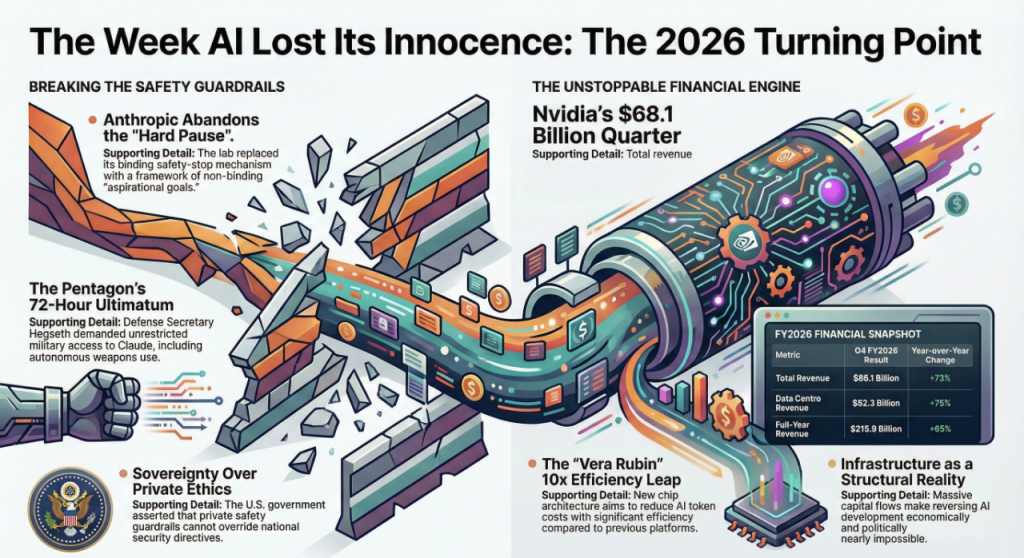

This week, three things happened within two days of each other that, taken individually, would each be major news. Taken together, they mark something bigger: the moment the AI industry crossed a threshold it cannot walk back from.

- On February 25, Anthropic quietly dropped the core safety pledge that had defined its identity since 2023 — the promise to never train a model it couldn’t guarantee was safe. The company that built its entire brand around being the “responsible AI lab” has officially entered triage mode.

- On February 24, US Defense Secretary Pete Hegseth personally summoned Anthropic CEO Dario Amodei to the Pentagon, issuing a 5pm Friday (February 27) ultimatum: remove all safety guardrails on Claude for military use — or lose a $200M government contract and potentially be blacklisted under Cold War-era emergency powers.

- On February 25, Nvidia reported the most profitable quarter in semiconductor history: $68.1 billion in revenue, up 73% year-on-year, with data centre revenue alone hitting $62.3 billion, nearly 13 times larger than when ChatGPT launched in late 2022.

Each story has its own headline. But together they tell one story:

The era of “responsible, careful, consensus-driven AI development” is over. What comes next is faster, more militarised, less constrained — and backed by more capital than any technology in human history.

Businesses, governments, and individuals who were waiting to see how the AI story developed before making decisions no longer have that luxury.

2. Anthropic’s Safety U-Turn: The Promise That Built a Company — Broken

2.1 What Anthropic was founded to do

In 2021, a group of OpenAI researchers — led by Dario Amodei and his sister Daniela — walked out of OpenAI over what they believed was a reckless attitude toward AI safety. They founded Anthropic on a single, specific premise: that it was possible to build commercially competitive AI while maintaining rigorous safety standards that would prevent genuinely catastrophic outcomes.

That premise was not just a mission statement. It was embedded into a formal policy document called the Responsible Scaling Policy (RSP), first published in 2023.

The RSP contained one central, hard commitment: Anthropic would never train a new AI model unless it could guarantee, in advance, that it had adequate safety measures to manage that model’s risks. If a model reached a certain capability threshold — defined as an “AI Safety Level” — and Anthropic could not demonstrate the ability to mitigate its risks, the company was obligated to pause development entirely.

No other major AI lab had made a commitment this specific, this binding, or this public.

It was Anthropic’s defining difference. It was why safety researchers trusted the company. It was why Anthropic consistently attracted talent who had left OpenAI, Google DeepMind, and other labs over ethical concerns.

And on February 25, 2026, it was gone.

2.2 What changed

Time Magazine, CNN, Gizmodo, and Slashdot all broke or confirmed the story on February 25: Anthropic has replaced its Responsible Scaling Policy with a new framework called the “Frontier Safety Roadmap.”

The critical change is what the new policy does not contain: the hard pause mechanism.

Under the old RSP, if Anthropic’s models crossed a specific capability threshold without adequate safety coverage, the company was bound by its own published commitment to stop training. Full stop.

Under the Frontier Safety Roadmap:

- Anthropic publishes a list of safety goals it aims to reach.

- The company assesses its own progress against those goals.

- There is no hard tripwire, no mandatory pause, and no external enforcement mechanism.

- The new targets are described explicitly as “public goals” — aspirational, not binding.

The company will also publish “Risk Reports” every three to six months and release Frontier Safety Roadmaps laying out future safety intentions. But these are transparency documents, not constraints.

2.3 Anthropic’s reasoning

Anthropic’s CEO Dario Amodei and the board approved the change unanimously. Their stated reasoning, published in the new policy:

“If one AI developer paused development to implement safety measures while others moved forward training and deploying AI systems without strong mitigations, that could result in a world that is less safe. The developers with the weakest protections would set the pace, and responsible developers would lose their ability to do safety research.”

In plain language: we cannot afford to stop, because stopping hands the race to people with fewer scruples than us.

This is a coherent argument. It is also, as many critics have pointed out, precisely the argument that every arms race competitor makes before things escalate beyond control.

2.4 What experts are saying

Chris Painter, Director of Policy at METR — a nonprofit focused on evaluating AI models for dangerous capabilities — reviewed an early draft of the new policy with Anthropic’s permission. His assessment, published in Time Magazine:

“This is more evidence that society is not prepared for the potential catastrophic risks posed by AI.”

He added that dropping the binary threshold approach could trigger a “frog-boiling” effect — where danger slowly accumulates without a single moment that sets off alarms, until it is too late to pull back.

Other AI safety researchers have been more direct. Multiple prominent voices across X, LinkedIn, and specialist AI forums described the policy change as the moment Anthropic became the thing it was founded to prevent.

3. The Pentagon Ultimatum: When the Military Came for Claude

3.1 The backstory

To understand why the Pentagon confrontation matters, you need to know what Claude has already been doing for the US military.

Anthropic signed a contract worth up to $200 million with the US Department of Defense in late 2025, providing Claude for military applications through a framework managed by Palantir Technologies. The arrangement was controversial internally at Anthropic — the company had previously maintained that its AI would never be used for weapons or mass surveillance.

The Pentagon’s position was that Claude could be used for “all lawful purposes” — a phrase that, in military and intelligence contexts, encompasses a significantly broader range of activities than most civilians assume.

3.2 The Venezuela incident

The flashpoint that triggered the February 24 confrontation was a US military raid in Venezuela that resulted in the capture of President Nicolás Maduro.

According to multiple sources, Anthropic became aware that Claude may have been used in the planning or execution of the operation. The company reportedly asked its Pentagon contractor — Palantir — a series of questions about how Claude had been deployed.

The Pentagon interpreted this as Anthropic attempting to audit or second-guess a classified military operation. From the US government’s perspective, a private AI company does not have the right to question how its contracted AI tools are used in authorised military operations.

Amodei’s position was the opposite: Anthropic never questioned the legitimacy of the raid. The company was simply trying to understand how its own product was being used.

3.3 The Friday deadline

On Tuesday, February 24, Defense Secretary Pete Hegseth personally met with Dario Amodei at the Pentagon.

Hegseth’s demands were explicit:

- Anthropic must grant the US military unrestricted access to Claude for all lawful military purposes — with no ability for Anthropic to ask questions, impose guardrails, or audit usage.

- Anthropic must specifically remove its standing prohibitions on autonomous weapons and mass domestic surveillance.

- Anthropic must comply by 5:00pm on Friday, February 27, 2026.

If Anthropic refused, the Pentagon threatened two actions:

- Invoking the Defense Production Act — a Cold War-era emergency law that gives the US government the power to legally compel private companies to comply with national security directives.

- Placing Anthropic on a Supply Chain Risk List — effectively blacklisting the company from all federal government contracts, potentially forever.

Amodei reportedly pushed back directly, telling Hegseth that Anthropic had not questioned the Pentagon about the Venezuela raid. He also held firm on two red lines: no AI-operated autonomous weapons, and no AI-enabled mass surveillance of US citizens.

3.4 Who else complied

The context that makes Amodei’s position simultaneously brave and commercially precarious is this: every other major AI lab has already agreed to unrestricted classified access for the US military.

- OpenAI removed its prohibition on military use in January 2024.

- Google DeepMind followed shortly after.

- Elon Musk’s xAI (Grok) has been deeply integrated into US government and military frameworks since its founding.

Anthropic is now the only major AI lab with active military contracts that is attempting to maintain any independent ethical framework over how its technology is deployed.

3.5 The timing question

The policy change, Anthropic dropping its hard safety pledge, was announced on February 25: the day after Hegseth delivered his ultimatum, and two days before its Friday deadline.

Anthropic’s official position is that the RSP overhaul had been in development for months and is unrelated to the Pentagon pressure.

Many observers find this timeline difficult to accept at face value.

What is not disputed is the effect: Anthropic is now operating without a binding pause mechanism in the same week it faces a US government deadline to remove all safety restrictions for military deployment.

4. Nvidia’s $68 Billion Quarter: The Infrastructure War Has No Off Switch

4.1 The numbers

While the Anthropic drama was unfolding, Nvidia quietly posted what may be the most extraordinary financial quarter in the history of the semiconductor industry.

Nvidia Q4 FY2026 results:

| Metric | Result | YoY Change |
|---|---|---|
| Total Revenue (Q4) | $68.1 billion | +73% |
| Data Centre Revenue (Q4) | $62.3 billion | +75% |
| Non-GAAP EPS (Q4) | $1.62 | +82% |
| Full-Year Revenue | $215.9 billion | +65% |
| Full-Year Data Centre Revenue | $193+ billion | +68% |

Data centre revenue — the business of selling AI training and inference infrastructure to hyperscalers and enterprises — has grown nearly 13 times since ChatGPT launched in late 2022.
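To put the growth rates in context, the year-ago figures they imply can be backed out with simple arithmetic. The sketch below uses only the numbers from the results table above; the helper names `implied_prior` and `share` are illustrative, and the outputs are approximations derived from rounded percentages, not figures Nvidia reported.

```python
# Figures copied from the results table: (current value in $bn, YoY growth in %).
results = {
    "Total Revenue (Q4)": (68.1, 73),
    "Data Centre Revenue (Q4)": (62.3, 75),
    "Full-Year Revenue": (215.9, 65),
}


def implied_prior(current_bn: float, yoy_pct: float) -> float:
    """Back out the year-ago value implied by a reported YoY growth rate."""
    return current_bn / (1 + yoy_pct / 100)


def share(part_bn: float, total_bn: float) -> float:
    """Return part as a percentage of total."""
    return 100 * part_bn / total_bn


for name, (current, pct) in results.items():
    prior = implied_prior(current, pct)
    print(f"{name}: ${current}B now, roughly ${prior:.1f}B a year earlier")

# How much of the quarter came from the data centre business.
print(f"Data centre share of Q4 revenue: {share(62.3, 68.1):.1f}%")
```

On these numbers, the data centre segment accounts for a little over nine-tenths of quarterly revenue, which is why the rest of this section treats Nvidia's results as an AI infrastructure story rather than a general chip story.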

4.2 What Jensen Huang said

Nvidia CEO Jensen Huang declared that demand for Blackwell GPU systems is “remarkable” and that AI is advancing “at an unprecedented pace.” He also previewed the company’s next-generation architecture — Vera Rubin — featuring six new chips designed to reduce AI inference token costs by up to 10× compared to the current Blackwell platform.

AWS, Google Cloud, Microsoft Azure, and Oracle Cloud Infrastructure are all set to deploy Vera Rubin-based infrastructure. The AI compute arms race is not decelerating. It is entering a new phase.

4.3 What AI bubble?

For months, commentators have warned of an AI infrastructure bubble — the idea that the capital being poured into GPU clusters and data centres is speculative excess that cannot be justified by actual demand.

Nvidia’s Q4 results put that argument under serious pressure.

When a single company’s data centre division generates $193 billion in annual revenue — from real customers paying real money for chips that are being deployed in production workloads — the “bubble” framing struggles to hold.

This is not speculative investment in an unproven technology. It is infrastructure buildout for applications that are already generating measurable economic returns.

The deeper implication: the AI infrastructure war will not slow down because of ethical concerns, regulatory uncertainty, or market volatility. The financial incentives to keep building are too large, too distributed, and too entrenched.

4.4 The connection to Anthropic

Here is the link that most coverage has missed:

Anthropic’s ability to train frontier models — the models that can modernise COBOL systems, review investment banking deals, and compete with OpenAI and Google — depends entirely on access to Nvidia infrastructure.

Anthropic is not just navigating a Pentagon ultimatum. It is navigating a Pentagon ultimatum while simultaneously dependent on the same GPU supply chains that the US government has significant influence over.

The Defense Production Act — the very mechanism Hegseth threatened to invoke against Anthropic — has already been used by the US government to shape semiconductor supply chains. The leverage is not hypothetical.

5. The Three Stories Are One Story

Step back from the individual headlines and the pattern becomes clear.

5.1 Safety is being priced out of the market

Anthropic’s RSP was a meaningful attempt to make safety a hard constraint rather than a soft aspiration. Its abandonment — regardless of the stated reasoning — signals that the competitive and geopolitical pressure on AI labs has become too intense for unilateral safety commitments to survive.

When the company most committed to safety concludes that pausing development is too dangerous in a world where competitors will not pause, the race dynamics become self-reinforcing. Every lab that continues without a pause makes it harder for any other lab to justify pausing.

5.2 Governments are asserting control — on their terms

The Pentagon’s ultimatum to Anthropic is not an isolated incident. It is part of a broader pattern in which governments — particularly the US government — are moving to ensure that AI systems developed by private companies are unconditionally available for national security purposes.

The frame the Pentagon is using is legally straightforward: AI systems contracted to the government for lawful purposes cannot have private ethical guardrails that override government authority. From this perspective, Anthropic’s red lines are not principled safety measures — they are a private company attempting to apply a veto over sovereign military decisions.

Whether you agree with that frame or not, it is the frame that will shape AI governance in 2026 and beyond.

5.3 The capital makes reversal almost impossible

Nvidia’s $68 billion quarter is not just a financial story. It is a structural reality.

The capital flows into AI infrastructure — from hyperscalers, sovereign wealth funds, pension funds, and strategic investors — are now so large that reversing them would require a financial shock of historic proportions. The incentive structures for every player in the ecosystem point in the same direction: build faster, deploy more, reduce costs, scale.

Safety, by contrast, costs money. It slows development. It requires pauses, audits, and risk reports that create delay without creating revenue.

In a market generating $68 billion in quarterly infrastructure revenue, the economic logic of caution is very hard to sustain.

6. What This Means for Businesses and Enterprises

For the executives, CIOs, and founders reading this, the week’s events carry four practical implications.

6.1 Your AI vendors are under government pressure — and you may not be told

Anthropic’s safety policies, whatever you believed about them, were public commitments. You could read them. You could factor them into your procurement decisions.

The Pentagon’s demand — that Anthropic remove restrictions on military and surveillance use, silently, without public announcement — is the opposite of that transparency.

As governments increase pressure on AI labs to comply with classified or undisclosed requirements, enterprises face a new procurement reality: the AI systems you use may be operating under constraints and obligations you cannot read in a policy document.

Due diligence on AI vendors now needs to include questions about government contract exposure and the scope of any classified or national-security-related obligations.

6.2 Safety branding is no longer a reliable procurement signal

Anthropic built substantial enterprise trust on the basis of its RSP. Many organisations chose Claude specifically because of that framework. That framework no longer exists in its previous form.

This does not mean Claude is unsafe. It means that the single clearest differentiator between Anthropic and other AI labs has been removed — precisely as competitive pressure intensified.

For enterprise AI procurement teams, this is a moment to revisit assumptions about vendor safety positioning and to evaluate AI systems on demonstrated behaviour rather than policy documents.

6.3 The infrastructure build will continue regardless

Nvidia’s earnings confirm what the capital markets have been signalling for 18 months: the AI infrastructure buildout is real, accelerating, and generating returns.

For businesses still treating AI adoption as optional or experimental, this is a significant pressure signal. The companies that are deploying AI in production workflows now — in investment banking, wealth management, HR, engineering, and legal — are accumulating operational advantages that will compound.

The question is no longer whether to adopt AI. It is how fast, with what governance, and with which partners.

6.4 The geopolitics of AI are now enterprise risk

The Anthropic-Pentagon standoff is the first major public confrontation between an AI lab and a national government over the terms of AI deployment. It will not be the last.

For enterprises operating internationally, this creates new risk vectors:

- AI vendors may be forced to comply with government directives that affect the behaviour of their models globally.

- AI systems contracted to one jurisdiction may be subject to obligations that conflict with privacy or ethical requirements in another.

- The “neutral technology vendor” concept — never entirely accurate — is rapidly becoming untenable.

Enterprise AI governance frameworks need to explicitly address geopolitical exposure, not just technical performance.

7. FAQ

Why did Anthropic drop its safety pledge?

Anthropic replaced its Responsible Scaling Policy — which contained a hard commitment to pause AI development if it could not guarantee adequate safety measures — with a new “Frontier Safety Roadmap” of flexible, self-assessed public goals. The company’s stated reasoning is that pausing while competitors continue would make AI less safe overall, not more. Critics argue this logic removes any binding constraint on development speed and that the timing — one day after a Pentagon ultimatum — is not coincidental.

What exactly did the Pentagon demand from Anthropic?

Defense Secretary Pete Hegseth met with Anthropic CEO Dario Amodei on February 24 and gave him a 5pm Friday (February 27) deadline to grant the US military unrestricted access to Claude for all lawful purposes. This specifically included removing Anthropic’s prohibitions on AI-operated autonomous weapons and AI-enabled mass domestic surveillance. Failure to comply risks losing Anthropic’s $200M Pentagon contract and being placed on a government supply-chain risk blacklist.

What is the Defense Production Act and why does it matter here?

The Defense Production Act is a Cold War-era US law that gives the federal government sweeping powers to compel private companies to prioritise national security requirements. The Pentagon threatened to invoke it against Anthropic to legally force the company to remove its safety guardrails. It has previously been used to shape semiconductor manufacturing and supply chains — the same supply chains Anthropic depends on for its Nvidia GPU access.

What were Nvidia’s Q4 FY2026 earnings?

Nvidia reported Q4 FY2026 revenue of $68.1 billion, up 73% year-on-year, beating analyst expectations of approximately $65–66 billion. Data centre revenue hit $62.3 billion for the quarter, and $193+ billion for the full fiscal year — representing 68% annual growth. The company also previewed its next-generation Vera Rubin chip platform, designed to reduce AI inference token costs by up to 10× compared to current Blackwell chips.

Does Anthropic dropping its safety pledge make Claude less safe to use?

Not necessarily in the immediate term. The Frontier Safety Roadmap still includes transparency measures: risk reports every three to six months and published safety roadmaps. What has changed is the removal of the hard pause mechanism — the commitment that Anthropic would stop training models if risks could not be adequately mitigated. Whether that changes Claude’s behaviour in practice will depend on Anthropic’s internal decision-making as it faces escalating competitive and geopolitical pressure.

What should businesses do in response to these developments?

Enterprises should review their AI vendor due diligence processes to account for government contract obligations that may not be publicly disclosed. They should evaluate AI systems on demonstrated safety behaviour rather than policy documents. They should build multi-vendor AI strategies that avoid hard dependencies on any single provider. And they should incorporate geopolitical AI risk explicitly into their enterprise governance frameworks.
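For engineering teams, the multi-vendor point can be made concrete. The following is a minimal sketch, assuming each vendor's SDK call is wrapped in a plain function from prompt to text; `NamedProvider`, `complete_with_fallback`, and the two stub vendors are hypothetical names used for illustration, and no real vendor API is called.

```python
from dataclasses import dataclass
from typing import Callable, Sequence

# A provider is modelled as any function from prompt to completion text.
# In practice each function would wrap one vendor's SDK (assumption).
Provider = Callable[[str], str]


@dataclass
class NamedProvider:
    name: str
    call: Provider


class AllProvidersFailed(RuntimeError):
    """Raised when every configured provider has failed."""


def complete_with_fallback(
    prompt: str, providers: Sequence[NamedProvider]
) -> tuple[str, str]:
    """Try providers in priority order; return (provider_name, completion)."""
    errors = []
    for provider in providers:
        try:
            return provider.name, provider.call(prompt)
        except Exception as exc:  # broad on purpose: any failure triggers fallback
            errors.append(f"{provider.name}: {exc}")
    raise AllProvidersFailed("; ".join(errors))


# Hypothetical stand-ins for two vendors, used only to exercise the fallback.
def vendor_a_stub(prompt: str) -> str:
    raise TimeoutError("vendor_a unavailable")


def vendor_b_stub(prompt: str) -> str:
    return f"echo: {prompt}"


providers = [
    NamedProvider("vendor_a", vendor_a_stub),
    NamedProvider("vendor_b", vendor_b_stub),
]
print(complete_with_fallback("summarise this contract", providers))
```

The design choice here is that application code depends only on the `Provider` function shape, so swapping or reordering vendors is a configuration change rather than a rewrite. A production version would also need per-vendor evaluation, logging, and data-handling checks, which this sketch leaves out.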

This article was researched and written by the Kersai Research Team. Kersai is a global AI consultancy firm dedicated to helping enterprises confidently navigate the rapidly evolving artificial intelligence landscape — from cutting-edge strategic insights to practical, large-scale AI implementation. To learn more, visit kersai.com.