The AI Industry Is Eating Itself

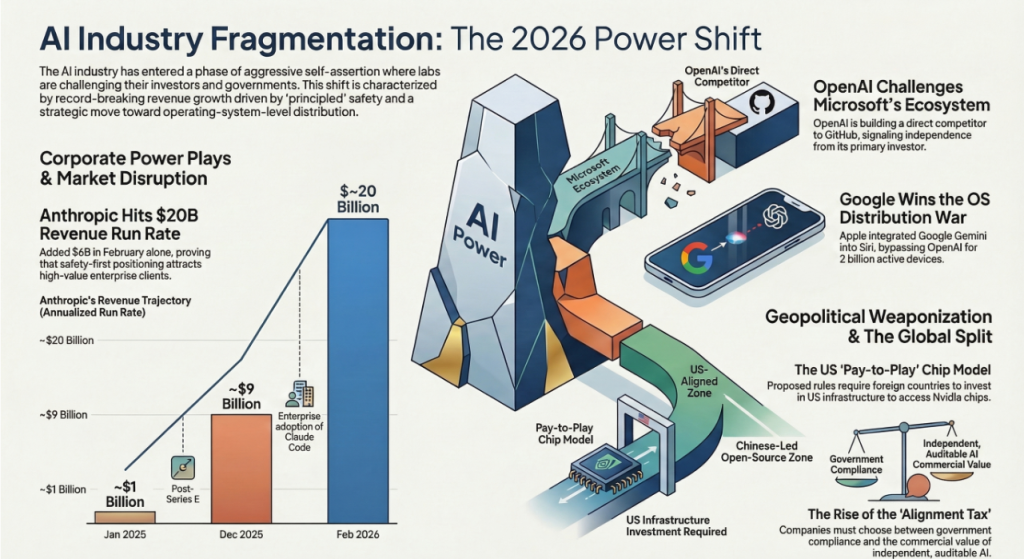

Anthropic Hits $20B After Pentagon Ban, OpenAI Turns on Microsoft, the US Weaponises Chip Exports, and Google Takes Over Siri

Published: March 6, 2026 | By the Kersai Research Team | Reading Time: ~22 minutes

1. The Week the AI Industry Turned on Itself

Last week, the story was about governments asserting control over AI companies.

This week, the AI companies are asserting control over each other — and over the governments trying to manage them.

In the last seven days:

- Anthropic, declared a national security risk and banned from all US federal contracts, responded by posting its fastest revenue growth month in history — adding $6 billion in annualised run rate in February alone to hit nearly $20 billion, making a mockery of the idea that the Pentagon ban would hurt it.

- OpenAI, fresh off raising $110 billion partly thanks to Microsoft, began quietly building a direct competitor to GitHub — Microsoft’s most profitable developer product — apparently deciding that the hand feeding it was also worth biting.

- The US government unveiled plans to turn Nvidia chip exports into a geopolitical payment system, requiring foreign countries to invest in American AI infrastructure as a condition of accessing advanced semiconductors.

- And Apple confirmed that the next version of Siri — running on 2 billion devices — will be powered not by OpenAI, not by Anthropic, but by Google’s Gemini model, handing Google the single largest AI distribution win in history through the front door of its biggest competitor.

Each story is explosive on its own. Together, they describe an industry that has grown too fast, too powerful, and too entangled — and is now beginning to pull apart at the seams.

2. Anthropic Hits $20 Billion — The Month After Being Banned

2.1 The number that broke the internet

On March 3, Bloomberg reported a figure that stopped the AI industry cold:

Anthropic has surpassed $19 billion in annualised revenue run rate.

For context:

- In January 2025, Anthropic’s run rate was approximately $1 billion.

- By December 2025, it had reached $9 billion — already extraordinary growth.

- In February 2026 alone, it added $6 billion in run rate — the same month it was fighting the Pentagon, dropping its Responsible Scaling Policy, and being publicly declared a supply chain risk by the Trump administration.

The trajectory in full:

| Period | Annualised Run Rate | Notes |
|---|---|---|
| January 2025 | ~$1 billion | Pre-Series E |
| May 2025 | ~$3 billion | Claude 3 enterprise adoption accelerating |
| August 2025 | ~$5 billion | Claude Code generally available since May |
| December 2025 | ~$9 billion | Confirmed by Bloomberg |
| February 2026 | ~$20 billion | Post-Pentagon standoff; Claude Code drives surge |

Anthropic could reach $25–30 billion in annualised revenue by mid-2026 even if its growth slows well below the recent pace, potentially crossing OpenAI’s run rate, exactly as Epoch AI’s models predicted in February.
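The table makes it possible to check the mid-2026 projection with simple arithmetic. Below is a minimal sketch that computes the December-to-February pace both as a straight-line monthly increase and as a compounded month-on-month rate, then asks how fast the run rate would need to grow to land at $25 billion or $30 billion. Two assumptions are not in the reporting: February 2026 uses Bloomberg’s “surpassed $19 billion” figure rather than the rounded ~$20 billion, and “mid-2026” is taken to mean end of June, four months after end of February.

```python
# Run-rate figures in US$ billions, taken from the table above.
# Assumption: February 2026 uses Bloomberg's "surpassed $19 billion" figure.
DEC_2025 = 9.0
FEB_2026 = 19.0
MONTHS_DEC_TO_FEB = 2
# Assumption: "mid-2026" means end of June, four months after end of February.
MONTHS_TO_MID_2026 = 4


def linear_monthly_increase(start: float, end: float, months: int) -> float:
    """Average run rate added per month between two readings."""
    return (end - start) / months


def compound_monthly_rate(start: float, end: float, months: int) -> float:
    """Constant month-on-month growth rate that turns `start` into `end`."""
    return (end / start) ** (1 / months) - 1


def required_monthly_increase(current: float, target: float, months: int) -> float:
    """Straight-line monthly increase needed to move from `current` to `target`."""
    return (target - current) / months


if __name__ == "__main__":
    step = linear_monthly_increase(DEC_2025, FEB_2026, MONTHS_DEC_TO_FEB)
    rate = compound_monthly_rate(DEC_2025, FEB_2026, MONTHS_DEC_TO_FEB)
    print(f"Dec->Feb pace: +${step:.1f}B/month, or {rate:.0%} month-on-month")
    print(f"Straight-line to mid-2026: ${FEB_2026 + step * MONTHS_TO_MID_2026:.0f}B")
    print(f"Compounded to mid-2026: ${FEB_2026 * (1 + rate) ** MONTHS_TO_MID_2026:.0f}B")
    for target in (25.0, 30.0):
        need = required_monthly_increase(FEB_2026, target, MONTHS_TO_MID_2026)
        print(f"To reach ${target:.0f}B: +${need:.2f}B/month")
```

On these assumptions the December-to-February pace is about $5 billion a month (roughly 45% month-on-month if compounded). Holding that pace would put the run rate near $39 billion by end of June on a straight line, and far higher if compounded, while a $25–30 billion figure corresponds to adding only about $1.50–2.75 billion a month. The same numbers imply that, if $6 billion was added in February, roughly $4–5 billion was added in January.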

2.2 What is driving the growth: Claude Code

The engine behind Anthropic’s revenue explosion is not Claude’s general assistant capabilities. It is Claude Code — the enterprise coding and legacy modernisation tool that Anthropic has been pushing aggressively since late 2025.

Claude Code is doing something no AI product has managed at scale before: it is replacing entire categories of expensive professional services that enterprises have been paying for on multi-year contracts.

Specifically:

- Legacy code modernisation: Translating COBOL, FORTRAN, and other legacy languages into modern equivalents. This work previously required teams of expensive specialist consultants working for months or years. Claude Code does it in days, at a fraction of the cost — which is why IBM’s stock fell off a cliff the week Anthropic demonstrated the capability.

- Enterprise codebase documentation: Automatically generating and maintaining documentation for large, complex codebases — work that engineering teams universally hate and perpetually defer.

- Security audit automation: Identifying vulnerabilities in production code at scale, replacing manual code review processes that were both slow and expensive.

- DevOps pipeline optimisation: Analysing CI/CD configurations, infrastructure-as-code, and deployment pipelines to identify efficiency gains.

The common thread: every one of these use cases targets work that large enterprises are currently paying significant ongoing fees to professional services firms, SaaS vendors, or in-house specialist teams. Claude Code does not just compete with those products — it undercuts them so dramatically that the economic case for the incumbent is simply gone.

2.3 The Pentagon paradox: how being banned made Anthropic stronger

Here is the counterintuitive story that no one is writing clearly enough:

The Trump administration banned Anthropic from federal contracts to punish it for refusing to remove safety guardrails. In doing so, it accidentally made Anthropic more attractive to exactly the enterprise customers that drive its revenue.

The enterprises flooding to Claude Code are not the US government. They are:

- Global financial institutions in London, Singapore, Tokyo, and Frankfurt — who need AI that will not be subject to classified US military requirements they cannot audit.

- European enterprises operating under GDPR and the EU AI Act — who need AI partners they can verify are not operating under undisclosed government obligations.

- Healthcare, legal, and regulated-sector organisations globally — who need AI with the most transparent and verifiable safety commitments available.

- Technology companies building on top of AI APIs — who want infrastructure they can trust will not have its behaviour modified by government directive without notice.

For every one of these customers, Anthropic’s refusal to sign the Pentagon agreement — and the public, documented nature of its refusal — is a positive procurement signal. It is evidence that Anthropic’s ethical commitments are real, not just marketing.

Dario Amodei’s gamble — that principled refusal would be commercially survivable — has, at least in the short term, paid off spectacularly.

2.4 What this means for the safety vs commercialism debate

For years, the conventional wisdom in the AI industry was that safety commitments were a luxury that only well-funded labs could afford, and that they would eventually be sacrificed to commercial pressures.

Anthropic’s February revenue numbers challenge that framing fundamentally.

It is now at least plausible — based on real data, not theory — that:

- The market segment of enterprises that genuinely value auditable, transparent, non-government-compromised AI is large enough to sustain a frontier lab at full scale.

- Safety positioning, when backed by demonstrated action rather than policy documents, is a durable competitive differentiator — not a cost centre.

- The AI labs that capitulate most readily to government pressure may be optimising for short-term contract value while ceding a significant long-term enterprise market to the one lab that did not.

None of this is certain. The Pentagon ban creates real constraints — particularly in US government-adjacent enterprise markets. And Anthropic still faces the same regulatory and competitive pressures as every other lab. But the February revenue number has made a serious argument that principle and profit are not as incompatible as the industry assumed.

3. OpenAI Builds a GitHub Rival — And Bites the Hand That Feeds It

3.1 The story

On March 3 and 4, The Verge, Developer-Tech, and multiple other outlets reported the same story: OpenAI is secretly building a code repository and developer toolchain platform designed to compete directly with GitHub.

GitHub, for those unfamiliar, is the world’s dominant platform for code hosting, version control, and developer collaboration. It has approximately 100 million developers using it globally and generates billions of dollars in revenue annually.

It is owned by Microsoft.

The same Microsoft that has invested billions of dollars in OpenAI, provides OpenAI’s primary cloud infrastructure through Azure, and has integrated OpenAI’s models into nearly every product in its portfolio, from Office to Teams to Xbox.

3.2 Why OpenAI is doing this

The proximate trigger, according to developer sources cited by The Verge: GitHub’s repeated outages have disrupted OpenAI’s own internal development pipelines on multiple occasions. For a company building and deploying frontier AI models at OpenAI’s current pace, infrastructure reliability is existential, and depending on an external platform owned by a partner that also competes with it introduces structural risk.

But the strategic logic goes beyond operational resilience.

OpenAI has been systematically building out what can only be described as a full-stack technology company:

- Search: ChatGPT Search launched in late 2024, competing directly with Google.

- Browser: OpenAI has launched ChatGPT Atlas, its own AI-native browser, competing with Chrome and Edge.

- Productivity: Canvas and Operator compete with Microsoft 365 and other productivity suites.

- Developer platform: The planned GitHub rival would complete the stack by giving OpenAI its own code infrastructure layer.

Each of these moves puts OpenAI in direct competition with one of its major investors or partners. The GitHub rival specifically targets Microsoft’s developer ecosystem — the most strategically important of all, because developers are the distribution channel through which AI capabilities reach every other application.

3.3 The Microsoft problem

Microsoft has invested an estimated $13 billion in OpenAI. It has integrated OpenAI models across its entire product portfolio. It restructured its Azure sales organisation around OpenAI access. It has built Copilot — its AI assistant — as a direct wrapper around OpenAI capabilities.

In return, OpenAI has received:

- Enormous capital.

- Azure infrastructure at preferential rates.

- Global enterprise distribution through Microsoft’s sales channels.

- Regulatory goodwill from Microsoft’s government relationships.

And now OpenAI is building a product that, if successful, would migrate developers away from GitHub — reducing one of Microsoft’s most valuable developer lock-in mechanisms and potentially diverting developer revenue to an OpenAI-controlled platform.

The tension here is structural, not personal. OpenAI is a company with $110 billion in fresh capital, a $730 billion valuation, and ambitions to be the operating system of the AI era. Microsoft is a partner, but also an incumbent in nearly every market OpenAI wants to enter. At some point, the partnership mathematics stop working.

3.4 What developers should watch

For the 100 million developers currently on GitHub, the OpenAI platform — if it launches — will likely offer:

- Native AI integration at every point of the development workflow, not bolted on as a Copilot plugin.

- OpenAI API access built directly into the infrastructure, reducing the friction of integrating AI capabilities into code.

- Competitive pricing designed to accelerate migration from GitHub.

The risk, of course, is that a developer platform owned by OpenAI comes with its own lock-in — and that OpenAI’s government relationships and classified obligations raise the same questions for developer infrastructure that they raise for enterprise AI.

4. The US Weaponises Chip Exports: Pay to Play

4.1 The proposed rules

On March 5, Reuters broke a story that sent shockwaves through international technology and trade policy circles:

The US government is considering sweeping new export rules that would require foreign governments and companies to invest in US AI data centres as a condition of receiving access to advanced AI chips — primarily Nvidia’s H100, H200, and Blackwell GPUs.

The details, as reported by Reuters and US News:

- Even shipments of fewer than 1,000 chips may require a US export licence under the proposed rules.

- Foreign entities seeking large chip shipments must either:

  - Make qualifying investments in US AI infrastructure, essentially paying a toll into the American AI buildout; or

  - Provide formal national security assurances to the US government, including restrictions on how the chips can be used and who can access them.

- The rules are designed to ensure that countries buying US AI chips are simultaneously funding US AI infrastructure dominance — turning chip access into a geopolitical subscription service.

4.2 The strategic logic

The US government’s reasoning is coherent from a national security perspective:

- Advanced AI chips are the foundational resource of the AI era — the equivalent of oil in the 20th century.

- Controlling access to those chips gives the US leverage over the AI development trajectories of every country on Earth.

- Requiring investment in US infrastructure as a condition of chip access ensures that AI buildout spending — regardless of where it originates — flows back into American data centres, strengthening the US position.

What this creates, in effect, is a global AI tax paid to American infrastructure, with the US government as the gatekeeper and Nvidia as the dominant supplier.

4.3 The unintended consequences

The proposed rules have three major unintended consequences that analysts are already flagging:

First, they massively accelerate Chinese open-source adoption globally.

Countries that are unwilling to pay the investment toll, or unwilling to provide national security assurances to the US government, need an alternative. DeepSeek V4, Kimi K2.5, MiniMax M2.5, and their successors are already competitive with US frontier models. Their open weights can be self-hosted, including on non-US hardware. They require no export licence, no investment, and no political commitment. If US chip access requires geopolitical alignment, a large portion of the world’s countries will choose the free, open-source Chinese alternative.

Second, they incentivise non-US chip development.

China’s SMIC and Huawei’s Ascend chip programmes have been growing precisely because of US export controls. New restrictions will increase the economic incentive for every country currently dependent on US chips to accelerate domestic semiconductor development. The long-term effect may be to reduce US chip market share — the opposite of the intended outcome.

Third, they create a two-tier AI world.

Countries that can afford the investment toll and are willing to provide security assurances get access to the frontier. Countries that cannot or will not are locked out of the US-aligned AI ecosystem and pushed toward Chinese open-source alternatives. This bifurcation — already visible in last week’s OpenRouter data — will become structural.

4.4 The Australia angle

For Australian businesses and policymakers, the chip export rules carry a specific implication. Australia is a close US security partner under AUKUS and the Five Eyes arrangement, which likely means Australian entities would qualify for chip access without the investment requirement.

But the rules create a precedent: AI chip access is a foreign policy instrument, not a commercial transaction. For Australian enterprises building AI strategy, this means the supply chain for AI infrastructure is geopolitical — and needs to be managed with the same risk frameworks applied to other strategic dependencies.

5. Google Takes Over Siri: The Biggest AI Distribution Win in History

5.1 The announcement

Apple has confirmed that the next major Siri update — launching with iOS 26.4 in March 2026 — will be powered by Google’s Gemini model.

Specifically, the 1.2-trillion-parameter Gemini Ultra variant will handle complex, multi-step Siri requests that exceed the capability of Apple’s on-device models. Simpler tasks (setting timers, sending messages, playing music) will continue to be handled locally by the Neural Engine in Apple’s own chips. But for anything requiring genuine reasoning, knowledge retrieval, or multi-turn dialogue, Siri will route to Gemini.

5.2 The privacy architecture

Apple’s approach to the Google integration is architecturally significant. Rather than sending user queries directly to Google’s servers — which would represent an unprecedented privacy compromise for a company whose entire brand rests on “what happens on your iPhone stays on your iPhone” — Apple has built the integration through its Private Cloud Compute (PCC) system.

Under PCC:

- User queries are sent to Apple’s own server infrastructure, not Google’s.

- Apple’s servers run the Gemini model under an Apple-controlled environment.

- Google provides the model weights and receives licence fees, but has no access to user query data.

- Apple maintains the privacy guarantee: no user data leaves Apple’s infrastructure.

This architecture represents a significant technical achievement — and a business model that other companies will likely attempt to replicate. It decouples model capability (Google’s strength) from data access (Apple’s moat) in a way that makes both parties better off without either compromising their core proposition.
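The split described in this section, with simple requests staying on-device and everything else going to Gemini on Apple-run servers, can be modelled as a small routing function. This is a toy sketch for illustration only: the intent names, `Route`, `Deployment`, `route()` and `query_handler()` are hypothetical, are not Apple APIs, and Siri’s real routing logic is not public. What the sketch makes explicit is that “who supplied the model” and “who sees the query” are separate fields.

```python
from dataclasses import dataclass
from enum import Enum, auto


class Route(Enum):
    ON_DEVICE = auto()      # handled by Apple's local model on the device
    PRIVATE_CLOUD = auto()  # sent to Apple-operated Private Cloud Compute servers


@dataclass(frozen=True)
class Deployment:
    model_provider: str  # who supplied the model weights
    operator: str        # who runs the servers and therefore sees the query


@dataclass(frozen=True)
class Request:
    intent: str
    text: str


# Hypothetical intent names for the article's examples of simple on-device tasks.
SIMPLE_INTENTS = frozenset({"set_timer", "send_message", "play_music"})

# As described in the article: Google licenses the weights, Apple operates the servers.
PCC_GEMINI = Deployment(model_provider="Google", operator="Apple")


def route(request: Request) -> Route:
    """Keep simple intents local; send everything else to Private Cloud Compute."""
    if request.intent in SIMPLE_INTENTS:
        return Route.ON_DEVICE
    return Route.PRIVATE_CLOUD


def query_handler(r: Route) -> str:
    """Return who processes the query text for a given route.

    In this model the provider's role ends at supplying weights, so the
    query only ever reaches the device or the server operator (Apple).
    """
    if r is Route.ON_DEVICE:
        return "device"
    return PCC_GEMINI.operator


if __name__ == "__main__":
    for req in (Request("set_timer", "Set a timer for ten minutes"),
                Request("plan_trip", "Plan a weekend away around my calendar")):
        r = route(req)
        print(req.intent, r.name, query_handler(r))
```

Under this model, the article’s privacy claim amounts to saying that `query_handler` never returns the model provider, whichever model sits behind `PRIVATE_CLOUD`.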

5.3 Why Apple chose Google over OpenAI

The question everyone is asking: why Google and not OpenAI, with which Apple had a widely reported partnership in 2024?

Multiple industry sources point to several factors:

- Model quality: Google’s Gemini Ultra has pulled ahead of GPT-5 on several benchmarks relevant to Siri’s use cases — particularly long-context comprehension, factual accuracy, and multimodal reasoning (combining text, image, and audio inputs).

- Privacy architecture compatibility: Google’s infrastructure was more readily adapted to Apple’s PCC model than OpenAI’s, which is more tightly integrated with Microsoft Azure’s architecture.

- Commercial terms: Google was willing to accept a licensing model in which it receives no user data — a condition OpenAI was reportedly less comfortable with given the value it places on training data.

- The Pentagon factor: OpenAI’s classified military obligations, following its Pentagon deal, created concerns within Apple’s legal team about the disclosures and audits they would need to make to regulators — particularly in the EU — about the nature of the AI powering Siri.

That last point is significant. Apple’s decision to go with Google over OpenAI may be, in part, a direct consequence of OpenAI’s Pentagon agreement and the ambiguities MIT Technology Review identified in its surveillance-related language.

5.4 What this means competitively

The distribution implications of this deal are staggering.

For Google: Gemini will now sit behind Siri on approximately 2 billion active Apple devices (iPhones, iPads, and Macs). Every Siri request that requires real reasoning will go to Gemini. This puts Google’s AI in the pockets of iPhone users worldwide, without requiring any of them to download a Google app or visit a Google product.

For OpenAI: Losing the Apple partnership — or failing to win it back — is a significant blow to consumer distribution. ChatGPT remains the dominant consumer AI brand, but Siri’s reach exceeds ChatGPT’s installed base by approximately 4:1. The consumer AI battle in 2026 increasingly runs through the operating system layer, not the app layer.

For Microsoft: The Gemini-Siri deal strengthens Google’s position in the enterprise and consumer AI market at Microsoft’s expense — and does so through Apple, a company Microsoft has historically had limited influence over.

For Anthropic: The deal is largely neutral — Anthropic was never a realistic candidate for the Siri partnership given its enterprise-first focus. But the continued growth of Google’s consumer AI distribution creates competitive pressure on the B2C layer where Anthropic’s consumer Claude products operate.

6. The Four Stories Are One Story

Step back from the individual headlines, and a single coherent narrative emerges.

6.1 The alignment tax

Every AI company this week is navigating a version of the same trade-off: how much alignment with powerful institutions — governments, investors, partners — are you willing to trade for commercial access?

- OpenAI aligned with the Pentagon and got $110 billion. Then it started building a GitHub rival, suggesting the alignment doesn’t extend to protecting its investors’ competitive positions.

- Anthropic refused to align with the Pentagon and got banned. Then it posted a run rate of nearly $20 billion, suggesting the non-alignment is itself commercially valuable to a different set of customers.

- Google aligned with Apple’s privacy requirements and got 2 billion devices. OpenAI’s Pentagon alignment may have cost it the same deal.

- The US government is attempting to use chip exports to force alignment from every country on Earth — and may accelerate the very Chinese open-source adoption it is trying to prevent.

The “alignment tax” cuts in every direction at once. No alignment strategy maximises value for every stakeholder. Every major AI player this week has made a bet on which alignment matters most, and the outcomes are still unfolding.

6.2 The distribution wars have moved to the OS layer

The Gemini-Siri deal is the clearest signal yet that the consumer AI battle has shifted from apps to operating systems.

The question is no longer “which AI app do users choose?” It is “which AI model is embedded in the operating system that users already have?” Android users get Google’s AI by default. iPhone users will now also get Google’s AI by default, via Siri. Windows users get Microsoft Copilot, powered by OpenAI, by default.

The consumer AI market is consolidating around the operating system layer — and the distribution advantages of the incumbents in that layer are enormous.

6.3 Principles are a viable product — but only with receipts

Anthropic’s $20 billion run rate makes a strong case for something important: in a world where AI companies are increasingly subject to government pressure, classified obligations, and opaque policy changes, demonstrably principled behaviour is commercially valuable.

The key word is “demonstrably.” Anthropic’s safety commitment is valuable to its enterprise customers not because Anthropic says it is committed to safety, but because Anthropic proved it — by refusing a $200 million government contract in public, on the record, with the specific objections documented.

Policy documents and mission statements are not receipts. Public refusal of a government ultimatum is a receipt.

6.4 The chip export rules will accelerate the split

The proposed US chip export rules — if implemented — will formalise the bifurcation of the global AI ecosystem into two zones:

- The US-aligned zone: Access to Nvidia frontier chips, US cloud infrastructure, US AI models, and US regulatory frameworks — in exchange for investment commitments and security assurances.

- The open-source zone: Access to Chinese open-weights models, self-hosted infrastructure, no geopolitical strings — but also no US government support, no classified capabilities, and increasing uncertainty about long-term chip supply.

Most countries and enterprises will try to operate in both zones simultaneously. The ones that will struggle are those that have built deep dependencies on one zone without hedging into the other.

7. What This Means for Businesses

7.1 Anthropic’s growth validates the “safety premium” thesis

If you are an enterprise AI procurement lead, Anthropic’s February revenue numbers should make you reconsider any assumption that safety-focused AI vendors are niche, fragile, or commercially unsustainable.

The market has demonstrated — with $20 billion in annualised revenue — that there is a very large, very well-funded set of enterprise customers who will pay for AI from vendors they trust not to be operating under undisclosed government obligations.

If your organisation has regulatory exposure, international operations, or significant data privacy requirements, Anthropic’s demonstrated behaviour — not its policy documents, its actual refusal of the Pentagon ultimatum — is material to your procurement decision.

7.2 Developer infrastructure is the next battleground

OpenAI’s GitHub rival, if it launches, will be the most significant developer platform disruption since Microsoft acquired GitHub in 2018.

For developers building on the current GitHub ecosystem, the implications are:

- A credible, AI-native alternative will emerge, likely with significant pricing pressure on Microsoft.

- The developer platform market will bifurcate between Microsoft-aligned and OpenAI-aligned infrastructure.

- Building deep dependencies on either platform before the competitive dynamics settle carries lock-in risk.

The prudent strategy: maintain portability. Use infrastructure-as-code, standard protocols, and abstraction layers that allow migration between platforms as the market evolves.

7.3 The chip export rules require immediate supply chain assessment

If you are an enterprise or government operating outside the US, the proposed chip export rules require an immediate review of your AI infrastructure supply chain.

Specifically:

- What is your current dependency on US-origin AI chips (Nvidia, AMD)?

- Do you have an alternative compute pathway — whether through existing on-premise hardware, cloud provider agreements, or open-source model deployment?

- Is your AI vendor strategy sufficiently diversified to absorb a disruption in US chip access?

Australian enterprises with AUKUS-adjacent government work are likely insulated from the worst effects. Commercial enterprises with no government relationship may need to assess their exposure more carefully.

7.4 The Gemini-Siri deal changes the consumer AI calculus

For businesses building consumer-facing AI products or marketing through AI-integrated platforms, the Gemini-Siri deal fundamentally changes the distribution landscape.

What changes:

- iPhone users will increasingly interact with Gemini-powered intelligence through native Siri, without needing to open a separate AI app.

- Marketing, advertising, and consumer engagement strategies built around ChatGPT’s consumer reach need to account for Google’s new distribution advantage on iOS.

- App-layer AI products face intensifying pressure from OS-layer AI that does not require a download, a login, or a separate subscription.

What to do:

- Evaluate your consumer AI touchpoints against the OS-layer alternatives your users now have by default.

- Consider building integrations with Siri Shortcuts and the Google Gemini API — rather than competing with the OS layer directly.

- Prioritise use cases where app-layer depth and specialisation still create genuine value over the general-purpose OS-layer AI.

8. FAQ

How did Anthropic grow to $20 billion in revenue run rate after being banned by the Pentagon?

Anthropic’s revenue growth is primarily driven by Claude Code — its enterprise coding and legacy modernisation tool — which is displacing expensive professional services contracts at large enterprises globally. The Pentagon ban, counterintuitively, strengthened Anthropic’s appeal to non-US enterprise customers who specifically value AI vendors not subject to classified US government obligations. International financial institutions, European enterprises under GDPR, and regulated-sector organisations have been flooding to Anthropic as a demonstrably independent AI partner.

Why is OpenAI building a competitor to GitHub when Microsoft owns GitHub and funds OpenAI?

The proximate cause is GitHub’s repeated outages disrupting OpenAI’s development pipelines. The strategic cause is that OpenAI is building a full-stack technology company and needs developer infrastructure it controls. The commercial logic is that controlling the developer platform gives OpenAI a distribution channel for its AI capabilities that does not depend on a partner that also competes with it in multiple markets. The risk is significant damage to the Microsoft relationship, but OpenAI’s $730 billion valuation and $110 billion in fresh capital have reduced its dependency on any single partner.

What are the new US AI chip export rules and who do they affect?

The US government is considering rules requiring foreign governments and companies to invest in US AI data centre infrastructure, or provide national security assurances, as a condition of accessing advanced AI chips from Nvidia and other US manufacturers. Even shipments of fewer than 1,000 chips may require export licences. The rules are designed to channel AI infrastructure spending into American data centres, but analysts warn they will accelerate Chinese open-source model adoption in countries unwilling or unable to pay the investment toll.

Why is Siri now powered by Google Gemini instead of OpenAI?

Apple chose Google Gemini for the updated Siri based on a combination of model quality advantages on Siri-relevant benchmarks, better compatibility with Apple’s Private Cloud Compute privacy architecture, commercial terms that allow Google no access to user data, and — according to industry sources — concerns within Apple’s legal team about OpenAI’s classified Pentagon obligations and the surveillance-related ambiguities in OpenAI’s military contract language. The deal gives Google AI presence on approximately 2 billion Apple devices without requiring users to install any Google product.

What is Apple’s Private Cloud Compute and how does it protect user privacy in the Gemini-Siri integration?

Private Cloud Compute is Apple’s server infrastructure designed to run AI models while maintaining Apple’s privacy guarantees. User queries are sent to Apple’s own servers, which run the Gemini model in an Apple-controlled environment. Google provides the model weights and receives licence fees, but has no access to user query data. The architecture decouples model capability — Google’s strength — from data access — Apple’s moat — allowing Apple to use frontier AI without compromising its privacy proposition.

Is the global AI ecosystem splitting into US-aligned and Chinese open-source zones?

Evidence from multiple angles this week supports this conclusion. The proposed chip export rules formalise access to US frontier AI as contingent on geopolitical alignment. Chinese open-source models — DeepSeek V4, MiniMax M2.5, Kimi K2.5 — are already competitive with US frontier models on most benchmarks, available for free, and run without US infrastructure dependency. OpenRouter data from last week showed Chinese model usage at nearly double US model usage among global developers. Most enterprises and governments will attempt to operate in both zones simultaneously, but the bifurcation is structural and accelerating.

This article was researched and written by the Kersai Research Team. Kersai is a global AI consultancy firm dedicated to helping enterprises confidently navigate the rapidly evolving artificial intelligence landscape — from cutting-edge strategic insights to practical, large-scale AI implementation. To learn more, visit kersai.com.