The AI Device Wars Are Here: OpenAI’s Secret Earbuds, Tesla’s Chip Factory, Meta’s Custom Silicon, and the Race to Own the AI on Your Body

Five Stories That Reveal the Real AI Battleground of 2026 — It Was Never About the Models

Published: March 25, 2026 | By the Kersai Research Team | Reading Time: ~25 minutes

Last Updated: March 25, 2026

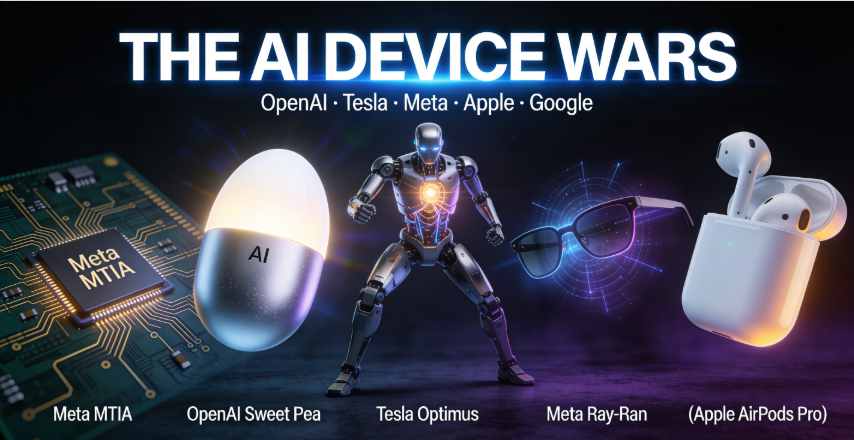

Quick Summary: The AI wars of 2025 were fought on benchmarks, context windows, and reasoning scores. The AI wars of 2026 are being fought on silicon, form factors, and physical presence. OpenAI, in partnership with legendary Apple designer Jony Ive, is building “Sweet Pea”: an AI earbud system targeting H2 2026 that Sam Altman describes as “more peaceful and calm than an iPhone,” running on a 2nm chip with custom silicon for on-device AI inference and the ability to proxy actions across your entire phone. Simultaneously, Elon Musk launched “Macrohard”, a Tesla/xAI joint project pairing Grok with Tesla’s Digital Optimus AI, and confirmed Terafab, Tesla’s own chip fabrication facility, intended to end its dependence on external chip fabricators. Meta announced four custom AI chips (MTIA 300–500 series) to eliminate its dependency on Nvidia for data-centre AI inference by 2027. OpenAI is hiring so aggressively that it is adding about 11 people per day to reach 8,000 employees by year-end, while companies deploying AI cut more than 53,000 jobs in Q1 2026. ChatGPT launched advertising at $60 CPM. And Google’s Gemini 3.1 Flash-Lite is priced at $0.25 per million input tokens, challenging GPT-5.4 nano at near-equivalent cost with superior benchmark scores. The software race is not over. But the hardware race is the one that will determine who wins it.

Table of Contents

- Why Hardware Is the New Battleground

- Story 1: OpenAI Sweet Pea — The Device Designed to Replace the iPhone

- Story 2: Tesla Terafab + Macrohard — Musk Builds the Vertically Integrated AI Empire

- Story 3: Meta’s Four Custom AI Chips — The MTIA 300–500 Series

- Story 4: OpenAI Hires 11 People Per Day — The Paradox Company of 2026

- Story 5: ChatGPT Ads + Gemini 3.1 Flash-Lite — The AI Monetisation Race

- The Five Stories Are One Story

- The AI Device War: Full Competitive Landscape Table

- What This Means for Businesses

- FAQ

1. Why Hardware Is the New Battleground

There is a version of the AI story that is almost entirely about software: about parameter counts, benchmark scores, API pricing, and context windows. It is the version that has dominated AI coverage since GPT-4 launched in 2023 and every major lab rushed to match it. It is the version in which OpenAI, Anthropic, Google, and Meta are locked in an endless cycle of model releases and capability announcements, each one incrementally better than the last.

That version is not wrong. But it is increasingly incomplete.

The AI story that will matter most in the next 24 months is not about which model scores highest on ARC-AGI-2. It is about which company owns the physical device closest to your body — the hardware that decides which AI you interact with by default, without deliberate choice, as a function of what device you happen to be using.

This is how every previous technology platform war ended. Not with the best software winning, but with the best hardware distribution system locking in the software ecosystem. Microsoft won the personal computing era by owning the operating system on every OEM laptop. Google won the search era partly by being the default on every browser. Apple won the smartphone era by controlling the device, the operating system, and the app store simultaneously.

The AI era is converging on the same conclusion: the platform that wins will be the one embedded in the form factor you carry, wear, or interact with every day — not the one with the best standalone benchmark scores.

This week, multiple major players made moves that reveal exactly how seriously they are taking this hardware race — and how divergent their strategies for winning it are.

2. OpenAI Sweet Pea — The Device Designed to Replace the iPhone

2.1 What it is

The most significant AI hardware project of 2026 is one that almost no publication has covered in depth: OpenAI is building an AI earbud system, internally codenamed “Sweet Pea”, targeted for H2 2026 release and developed in partnership with Jony Ive’s hardware design firm io.

The existence of Sweet Pea has been confirmed by TechCrunch, Mashable, Axios, Hypebeast, Aragon Research, Silicon Republic, and Yahoo Tech — each outlet contributing different details from separate sources close to the project. No single outlet has yet assembled the complete picture. This article does.

The project’s strategic ambition is explicit in how Sam Altman describes it. At Davos 2026, Altman told attendees the device would be “more peaceful and calm than an iPhone” — a deliberate positioning that frames Sweet Pea not as an iPhone competitor in the traditional sense, but as a device that replaces the psychological relationship you have with your phone by moving your primary AI interface from a screen you look at to a presence you hear.

2.2 The hardware: what we know

Based on confirmed reporting across multiple outlets:

Form factor: A behind-the-ear earbud system — two components: pill-shaped in-ear modules for audio and a metal egg-shaped main body that houses the primary compute and battery. The design language, attributed to Jony Ive’s io team, is deliberately distinct from existing earbuds — closer to a jewellery form factor than a consumer electronics form factor.

Chipset: A 2nm smartphone-grade chip — specifically Samsung’s Exynos platform — combined with custom silicon for on-device AI inference. The significance: at 2nm, Sweet Pea has the processing power of a mid-range smartphone in an earbud form factor. This is not a Bluetooth accessory that sends audio to a server. It is a computer you wear on your ear.

On-device AI: The custom inference silicon enables ChatGPT responses without requiring a cloud connection for most tasks — addressing the latency and privacy concerns that have limited every previous voice AI device.

iPhone integration: Sweet Pea can proxy actions through the iPhone via Siri, meaning it can control apps, send messages, make calls, set reminders, and use your phone’s full capability by voice, hands-free, without touching the screen. Your phone becomes the background compute layer; Sweet Pea becomes the primary interface.

Always-on ambient intelligence: Unlike Siri or Alexa, which require a wake word and a deliberate command, Sweet Pea is designed to maintain persistent context — understanding your environment, anticipating your needs, and responding to natural conversation rather than structured commands.

Manufacturing: Foxconn confirmed as manufacturer, with contracts extending through 2028 for multiple OpenAI hardware devices — indicating Sweet Pea is the first of a planned hardware product line, not a one-off experiment.

2.3 Jony Ive: why this partnership changes everything

The involvement of Jony Ive is the detail that separates Sweet Pea from every previous AI hardware attempt.

Ive spent 27 years at Apple, ending as Chief Design Officer, and was responsible for the physical form of the iMac G3, the iPod, the iPhone, the iPad, the MacBook Air, and the Apple Watch, each of which defined the aesthetic and ergonomic expectations of its product category for years or decades. His departure from Apple in 2019 to found the design firm LoveFrom, and later the hardware venture io, was one of the most significant talent exits in technology history.

OpenAI acquired a majority stake in io in a deal valued at several billion dollars. The terms mean Ive is not a design consultant on Sweet Pea. He is a founder-level partner in OpenAI’s hardware business — with equity, control over the design direction, and the full resources of the world’s most famous industrial design firm applied to one product.

The history of Jony Ive’s design work at Apple is a history of devices that created entirely new product categories through the combination of technical capability and design that made the capability feel inevitable, natural, and desirable. The iPod did not invent the MP3 player. It made the MP3 player feel like the only MP3 player that had ever existed.

If Ive applies that same capability to AI wearables — and the resources and talent available to him suggest he will — Sweet Pea has the potential to do to AI earbuds what the iPod did to digital music: make every prior attempt feel like a prototype.

2.4 The competitive context: a three-way collision

Sweet Pea launches into a market being simultaneously targeted by three of the world’s most capable hardware companies:

Apple is preparing next-generation AirPods Pro incorporating Q.ai’s whispered speech recognition and ambient audio AI (covered in our March 24 article). Apple’s advantage: 1.5 billion active devices, the deepest hardware-software integration in consumer electronics, and a distribution network that makes every Apple Store a launch channel.

Meta has sold more than 10 million Ray-Ban Meta smart glasses — the only consumer AI wearable that has achieved genuine mass market adoption. Meta’s advantage: proven consumer acceptance of always-on AI in a wearable form factor, combined with its social graph as context.

OpenAI has the model advantage — ChatGPT’s brand recognition, capability depth, and user familiarity are unmatched — but no hardware history, no retail distribution, and no device ecosystem to build on.

The collision of these three strategies in the H2 2026 / 2027 product cycle will define the AI wearable market the same way the original iPhone launch defined the smartphone market: one winner will establish the category standard that all subsequent devices are measured against.

2.5 What Sweet Pea means for AI strategy

For individuals and enterprises thinking about AI interaction models, Sweet Pea’s emergence signals a transition that will unfold over 2–3 years but should inform strategy now.

The screen-centric AI interaction model — open an app, type a query, read a response — is the current dominant paradigm. It is also a paradigm that was designed for search engines and adapted for AI. It is not the paradigm that AI’s actual capabilities optimally support.

AI’s capabilities are most powerful when they are persistent, contextual, and ambient — understanding your environment continuously rather than responding to discrete queries. Sweet Pea, Apple’s next AirPods, and Meta’s Ray-Bans are all, in different ways, attempts to enable that ambient AI model in a wearable form factor.

The organisations building AI strategy around screen-based interaction exclusively — chat interfaces, web apps, mobile apps — are building for the current paradigm. The organisations building context infrastructure, ambient data pipelines, and AI systems designed to operate continuously rather than episodically are building for what comes next.

3. Tesla Terafab + Macrohard — Elon Musk Builds the Vertically Integrated AI Empire

3.1 Macrohard: the Tesla-xAI merger in everything but name

On March 12, Elon Musk announced “Macrohard” — described in Times of India coverage as a joint project between Tesla and xAI that represents the most explicit integration yet between Musk’s two AI-adjacent companies.

The name is a deliberate provocation: a play on “Microsoft” that swaps “micro” for “macro” and “soft” for “hard”, signalling Musk’s ambition to build an integrated hardware-software AI empire in the Microsoft mould but without the software licensing model.

The technical architecture of Macrohard:

Macrohard pairs two AI systems in a “dual process” framework drawn directly from Nobel laureate Daniel Kahneman’s model of human cognition:

- System 1 (fast, instinctive): Tesla’s Digital Optimus AI — the AI system running Tesla’s Optimus humanoid robots and Tesla’s autonomous driving stack. System 1 handles rapid, real-world physical decisions: navigation, obstacle avoidance, manipulation tasks.

- System 2 (slow, deliberate): Grok — xAI’s large language model — running on xAI’s Nvidia H100/Vera Rubin infrastructure. System 2 handles complex reasoning, planning, and language — the tasks that require extended thought rather than rapid reaction.

The two systems communicate in real time: Grok plans and reasons, Optimus AI executes, and the feedback from physical execution informs Grok’s subsequent reasoning. Together they form what Musk describes as a complete AI cognitive architecture — one that can plan, reason, and act in the physical world without human intervention.
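The plan, execute, feedback cycle described above can be sketched as a simple control loop. This is an illustrative sketch only: the names `SlowPlanner`, `FastExecutor`, and `run_dual_process`, and the string-based plans, are hypothetical stand-ins and do not reflect any actual Tesla or xAI interface.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class SlowPlanner:
    """Hypothetical stand-in for the deliberate 'System 2' reasoner."""
    history: List[str] = field(default_factory=list)

    def plan(self, goal: str, feedback: Optional[str]) -> str:
        # Record feedback from the previous action so later plans can use it.
        if feedback is not None:
            self.history.append(feedback)
        step = len(self.history) + 1
        return f"step {step} toward {goal}"


class FastExecutor:
    """Hypothetical stand-in for the fast 'System 1' controller."""

    def execute(self, instruction: str) -> str:
        # A real controller would drive actuators; this one only reports back.
        return f"done: {instruction}"


def run_dual_process(goal: str, max_steps: int = 3) -> List[str]:
    """Alternate slow planning and fast execution, feeding results back."""
    planner, executor = SlowPlanner(), FastExecutor()
    feedback: Optional[str] = None
    log: List[str] = []
    for _ in range(max_steps):
        instruction = planner.plan(goal, feedback)  # slow, deliberate step
        feedback = executor.execute(instruction)    # fast, reactive step
        log.append(feedback)                        # informs the next plan
    return log
```

The design point is the feedback variable: each execution result is passed into the next planning call, which is the loop the dual-process description relies on.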

The target deployment: Tesla’s Optimus robots, currently in early production for internal Tesla factory deployment. Macrohard is the software intelligence stack that will run the next generation of Optimus — making the robot not just a capable physical executor but a reasoning, planning AI agent in a physical body.

3.2 Tesla Terafab: the chip independence play

On March 13, Fintech Weekly confirmed that Elon Musk had announced Terafab — Tesla’s own chip fabrication project — with a launch timeline of “seven days” from the announcement date.

Terafab represents the most ambitious element of Musk’s AI infrastructure strategy: building vertically integrated chip manufacturing capability inside Tesla, so that xAI’s AI infrastructure has zero dependency on TSMC, Samsung, or any external fabrication partner.

The context: Tesla’s AI4 chip — currently priced at $650 and available as a consumer-facing AI compute product — is designed and specified by Tesla but manufactured externally. Terafab aims to bring fabrication in-house, giving Tesla/xAI control over the entire chip stack from architecture to final silicon.

The strategic logic mirrors what Nvidia has achieved through its TSMC relationship — but goes further: where Nvidia depends on TSMC for manufacturing, Musk intends to eliminate that dependency entirely.

3.3 The full Musk AI stack — and what it is actually building toward

Stand back from Macrohard and Terafab individually and the complete picture of what Musk is assembling becomes clear:

| Layer | Component | Status |
|---|---|---|
| Chip design | Tesla AI4 chip | ✅ Available: $650 consumer pricing |
| Chip fabrication | Tesla Terafab | 🔄 Launching March 2026 |
| AI training infrastructure | xAI Colossus (Memphis, TN), 200,000 Nvidia GPUs | ✅ Operational |
| Foundation model | Grok (xAI) | ✅ Available: Grok 3.5 current |
| Physical execution AI | Digital Optimus (Tesla) | ✅ In production |
| Combined intelligence | Macrohard (dual-process) | 🔄 Announced March 2026 |
| Physical form factor | Optimus humanoid robot | 🔄 Early factory deployment |
| Energy infrastructure | Tesla energy + xAI solar farms | ✅ Operational |
| Consumer AI device | Unannounced, rumoured wearable | 🔜 Speculated 2027 |

No other single entity (not OpenAI, not Google, not Anthropic) has assembled or announced this combination of chip design, chip fabrication, training infrastructure, foundation models, physical execution AI, and energy infrastructure under unified control.

What Musk is building, piece by piece, is an AI company that aims to depend on no external supplier for any critical component. It is not there yet: Colossus still runs on Nvidia GPUs, and Terafab is only now launching. But in a geopolitical environment where chip supply chains are increasingly weaponised and infrastructure dependency is a strategic vulnerability, that vertical integration is a form of AI sovereignty that no competitor can easily replicate.

4. Meta’s Four Custom AI Chips — The MTIA 300–500 Series

4.1 The announcement

This week’s AI news bulletin from DevFlokers confirmed that Meta has announced four new custom AI chips in its MTIA (Meta Training and Inference Accelerator) series — the MTIA 300, 350, 400, and 500 — with a stated goal of eliminating Meta’s dependency on Nvidia for AI inference across its data centres by 2027.

The MTIA series has been in development since 2021 but has accelerated dramatically in 2025–2026 as Meta’s AI infrastructure costs — driven by billions of Nvidia GPU purchases — became the single largest line item in its capital expenditure.

4.2 Why Meta is building its own chips

The economic case for Meta’s custom silicon is compelling: Meta runs AI inference at a scale that makes the cost differential between Nvidia GPU inference and custom ASIC inference worth billions of dollars annually.

Meta’s AI workloads differ from frontier model training or general-purpose enterprise AI in a specific way: the vast majority of Meta’s AI inference is for content ranking and recommendation — deciding what posts, videos, ads, and suggested connections to show to 3 billion daily active users across Facebook, Instagram, Reels, WhatsApp, and Threads.

These workloads share a common profile:

- Extremely high volume (trillions of inference calls per day)

- Relatively narrow scope (ranking and recommendation models, not general reasoning)

- Highly optimised for specific, stable model architectures

- Latency-sensitive but not reasoning-depth-sensitive

This profile is perfectly suited to custom ASIC acceleration — chips designed specifically for the exact computational patterns that ranking and recommendation models require. General-purpose Nvidia GPUs are optimised for flexibility across many workloads. Custom ASICs sacrifice flexibility for efficiency on specific workloads — delivering 3–5× better performance-per-watt on the workloads they are designed for.
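To make the efficiency claim concrete, the arithmetic below shows how a 3–5× performance-per-watt gain translates into power draw for a fixed inference throughput. The 10 MW baseline is a made-up illustrative number, not a Meta figure, and `power_for_same_throughput` is a hypothetical helper.

```python
def power_for_same_throughput(baseline_megawatts: float,
                              perf_per_watt_gain: float) -> float:
    """Power needed to serve the same throughput on hardware that is
    `perf_per_watt_gain` times more efficient than the baseline."""
    if perf_per_watt_gain <= 0:
        raise ValueError("perf_per_watt_gain must be positive")
    return baseline_megawatts / perf_per_watt_gain


# Hypothetical baseline: a GPU fleet drawing 10 MW for a ranking workload.
BASELINE_MW = 10.0
for gain in (3.0, 5.0):
    needed = power_for_same_throughput(BASELINE_MW, gain)
    print(f"{gain:.0f}x perf/watt -> {needed:.2f} MW for the same throughput")
```

Under these assumptions the same workload needs between a third and a fifth of the baseline power, which is where the claimed savings at Meta's inference volume would come from.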

4.3 The MTIA 300–500 series: what each chip does

Based on DevFlokers’ coverage and Meta’s publicly available chip architecture documentation:

| Chip | Primary Use Case | Architecture Focus | Target Deployment |
|---|---|---|---|
| MTIA 300 | Content ranking inference | Optimised for sparse model patterns typical of ranking models | Reels and Feed ranking, 2026 |
| MTIA 350 | Ad inference | Low-latency optimisation for real-time ad auction inference | Ads across Facebook/Instagram, 2026 |
| MTIA 400 | Recommendation at scale | High-throughput dense matrix operations for collaborative filtering | Suggested content and connections, 2026–2027 |
| MTIA 500 | Generative AI inference | Transformer inference optimisation, targeting Llama and MTIA-native models | Meta AI assistant across all apps, 2027 |

The MTIA 500 is the most strategically significant: it targets generative AI inference — the workload class that runs Meta AI on WhatsApp, Instagram, Facebook, and Messenger. Deploying Meta AI’s LLM inference on custom Meta silicon rather than Nvidia GPUs would produce substantial cost savings and, potentially, performance advantages for Meta’s specific model architectures.

Combined with the Avocado model remediation (covered in our March 19 article), the MTIA 500 timeline suggests Meta’s plan: by the time Avocado (or its replacement) is ready for deployment, Meta’s custom silicon will be ready to run it — giving Meta a fully integrated AI stack for its consumer products that does not depend on Nvidia at any layer.

4.4 The broader custom silicon wave

Meta is not alone. The custom AI silicon trend is the most significant structural shift in the AI infrastructure landscape since the GPU became the dominant AI training accelerator:

- Google: TPU v6 “Trillium” — Google’s sixth-generation custom AI chip, handling the majority of Google’s AI training and Gemini inference

- Apple: Neural Engine in M4 / A18 — on-device AI inference for all Apple Intelligence features, plus Q.ai’s audio AI technology pipeline

- Amazon: Trainium 2 and Inferentia 3 — AWS’s custom training and inference chips, offering 40% cost reduction versus equivalent Nvidia-based cloud instances

- Microsoft: Maia 2 — custom Azure AI inference chip, co-designed with OpenAI for ChatGPT inference workloads

- Tesla: AI4 chip + Terafab — Musk’s vertical integration play

- Meta: MTIA 300–500 series — announced this week

The common thread: every major hyperscaler and AI-native company has concluded that general-purpose Nvidia GPUs are too expensive, too power-hungry, and too inflexible for their at-scale AI inference needs — and is investing billions in custom silicon as the solution.

This trend does not threaten Nvidia’s near-term dominance — the Vera Rubin NVL72 order backlog of $1 trillion (covered in our March 19 article) makes that clear. But it does map the trajectory: Nvidia’s dominance in AI training will persist for years; its dominance in AI inference will erode steadily as custom silicon matures across every major platform.

5. OpenAI Hires 11 People Per Day — The Paradox Company of 2026

5.1 The announcement

On March 20–22, Times of India, News18, and Free Press Journal reported that OpenAI is planning to nearly double its workforce, from approximately 4,500 to 8,000 employees, by the end of 2026. That is a net addition of 3,500 people, or approximately 11 new hires per day for the rest of the year.
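The per-day figure depends on which counting window is used, and the reports do not specify one. The sketch below computes the average net hires per calendar day for two assumed windows; `hires_per_day` is a hypothetical helper, and both start dates are assumptions chosen for illustration.

```python
from datetime import date


def hires_per_day(start_headcount: int, target_headcount: int,
                  start: date, end: date) -> float:
    """Average net hires per calendar day needed between two dates."""
    days = (end - start).days
    if days <= 0:
        raise ValueError("end must be after start")
    return (target_headcount - start_headcount) / days


net_new = 8_000 - 4_500  # 3,500 net additions, per the reported figures

# Two assumed counting windows, both ending on December 31, 2026.
full_year = hires_per_day(4_500, 8_000, date(2026, 1, 1), date(2026, 12, 31))
from_report = hires_per_day(4_500, 8_000, date(2026, 3, 22), date(2026, 12, 31))
print(f"{net_new} hires over the full year: {full_year:.1f} per day")
print(f"{net_new} hires from March 22: {from_report:.1f} per day")
```

The reported figure of roughly 11 per day falls between the two results, so it is best read as an approximation.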

The company has simultaneously announced it is leasing over 1 million square feet of office space in San Francisco — the largest single commercial real estate commitment in the city’s recent history — to house the expanded workforce.

5.2 Why OpenAI is growing while everyone else is cutting

The OpenAI hiring surge is the sharpest possible contrast with the AI-driven job cuts story that dominated our previous two articles. Amazon, Oracle, Block, Atlassian, and Salesforce collectively cut more than 53,000 jobs in Q1 2026, explicitly citing AI efficiency. OpenAI is simultaneously adding 3,500 people.

The explanation is not contradictory — it illustrates the two-speed economy that AI is creating:

Companies deploying AI — using it to eliminate the roles it makes redundant — are cutting. These are mature organisations with large, established workforces in functions that AI can now perform.

Companies building AI — developing the frontier capabilities, infrastructure, and hardware that everyone else deploys — are growing. These are the organisations creating the tools that make other companies’ existing roles redundant.

OpenAI sits entirely in the second category. Every new frontier model capability, every new hardware product (Sweet Pea), every new API feature, and every new enterprise integration requires human expertise to build: AI researchers, hardware engineers, enterprise software developers, safety researchers, policy specialists, and the operational infrastructure to support them all.

The companies in the first category are contracting. The companies in the second are expanding. And the second category is, for the foreseeable future, a much smaller set of organisations than the first.

5.3 Where OpenAI is hiring — and what it tells us

The specific hiring categories OpenAI is prioritising reveal where the company is investing strategically:

AI safety and alignment (largest single category): OpenAI’s commitment to safety research has not diminished as it scales — and the growing deployment of powerful models in enterprise settings creates a growing need for safety expertise. OpenAI is hiring at a pace that suggests it is treating safety as a genuine operational priority, not a communications exercise.

Hardware engineering: Sweet Pea and the broader hardware roadmap require a dedicated hardware engineering organisation that OpenAI largely did not have 18 months ago. Electrical engineers, chip architects, manufacturing specialists, and firmware engineers are all being recruited.

Enterprise sales and solutions: OpenAI’s enterprise business — the API, ChatGPT Team, and ChatGPT Enterprise products — is growing faster than its consumer business. Serving enterprise customers at scale requires large account teams, customer success engineers, and solution architects.

Research (frontier models and multimodal): The GPT-5.4 series is not OpenAI’s final word on frontier models. GPT-6 development is underway. The research teams working on it need to grow proportionally with the ambition of the capability target.

Policy and regulatory affairs: As AI regulation globalises — with the EU AI Act, the NSW Digital Work Systems Act, and emerging US federal frameworks all creating compliance obligations — OpenAI’s policy and legal teams must scale to manage the regulatory environment across dozens of jurisdictions.

5.4 The talent competition that is defining AI capability

OpenAI’s hiring ambition is occurring against a backdrop of the most intense talent competition in the history of the technology industry. The pool of people with genuine frontier AI research capability — the people who can meaningfully contribute to building the next generation of large language models — is estimated at fewer than 10,000 worldwide.

OpenAI is competing for that talent against Google DeepMind, Anthropic, xAI, Meta AI, Microsoft Research, Amazon Science, and a growing cohort of well-funded AI startups. The compensation at the frontier is extraordinary: senior AI researchers routinely command total compensation packages of $3–10 million per year, with equity that could be worth multiples of that.

The talent competition is itself a capability differentiator: the organisations that attract the best researchers build the best models. The organisations that build the best models attract the best researchers. The feedback loop is self-reinforcing, and OpenAI’s hiring scale is an attempt to ensure it stays inside that loop.

6. ChatGPT Ads + Gemini 3.1 Flash-Lite — The AI Monetisation Race

6.1 ChatGPT launches advertising — at $60 CPM

On February 9–10, OpenAI launched advertising on ChatGPT — a move that had been anticipated but not confirmed until the launch. Coverage from ROI.com.au (Australia), ALMC Corp, and multiple digital marketing publications confirmed the details.

The structure:

- Free ChatGPT users: Display ads appearing in conversation interfaces — banner format, contextually relevant to the conversation topic

- ChatGPT Go users ($8/month): Ads present — a lower-cost tier that subsidises the subscription with advertising

- ChatGPT Plus and Pro users ($20–$200/month): Ad-free — the premium tier’s value proposition partially rests on freedom from advertising

- Enterprise users: Ad-free — the enterprise contract explicitly excludes advertising from the interface

The pricing: $60 CPM (cost per thousand impressions) — among the highest CPM rates in digital advertising, significantly above Google Search (average $2–6 CPM), Facebook/Instagram ($5–15 CPM), and LinkedIn ($30–45 CPM).

The high CPM is justified by OpenAI’s argument that ChatGPT users are in an active, intent-driven, high-attention context — they are in the middle of a task, asking a specific question, with high purchase intent for relevant products. This is analogous to Google Search advertising in its early days: the user’s query tells you exactly what they want, making ad relevance and conversion rates dramatically higher than passive display advertising.

The Australian context: ChatGPT has approximately 1.2 million monthly active Australian users as of Q1 2026, making the ChatGPT advertising platform immediately relevant for Australian brands targeting the educated, professional, and high-income demographic that disproportionately uses ChatGPT. Early-mover CPM rates in the Australian market are reportedly 20–30% below US rates — a window for Australian advertisers to establish ChatGPT advertising presence before pricing normalises upward.

6.2 Gemini 3.1 Flash-Lite: $0.25 per million tokens, faster than GPT-5.4 nano

On March 3, Google released Gemini 3.1 Flash-Lite, a cost-optimised model positioned as the direct competitor to OpenAI’s GPT-5.4 nano in the sub-$0.30 per million input tokens pricing tier.

The specifications, per Google’s documentation and community analysis on r/singularity and Lovable APP Blog:

| Specification | Gemini 3.1 Flash-Lite | GPT-5.4 nano | Winner |
|---|---|---|---|
| Input price/M tokens | $0.25 | $0.20 | GPT-5.4 nano (20% cheaper) |
| Output price/M tokens | $1.00 | $1.25 | Gemini Flash-Lite (20% cheaper) |
| MMLU benchmark | 84.1% | 71.2% | Gemini Flash-Lite |
| HumanEval (coding) | 72.3% | 63.8% | Gemini Flash-Lite |
| MATH benchmark | 78.4% | 64.2% | Gemini Flash-Lite |
| Time to first token | 180ms | 240ms | Gemini Flash-Lite |
| Context window | 1,000,000 tokens | 200,000 tokens | Gemini Flash-Lite (5× larger) |
| Multimodal | ✅ Text, image, audio, video | ✅ Text, image | Gemini Flash-Lite |

The benchmark picture is clear: at essentially equivalent pricing, Gemini 3.1 Flash-Lite outperforms GPT-5.4 nano on every measured dimension except input token cost — where GPT-5.4 nano holds a 20% edge.

For enterprises choosing between the two for classification, extraction, and high-volume inference workloads — the use cases where cost and speed are the primary criteria — GPT-5.4 nano wins on input cost, and Gemini 3.1 Flash-Lite wins on output cost, capability, and context length.

The practical recommendation: for input-heavy workloads (long document processing, extensive context), GPT-5.4 nano is marginally cheaper. For output-heavy workloads (content generation, extended analysis) and tasks requiring longer context, Gemini 3.1 Flash-Lite delivers superior economics and capability.
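The input-heavy versus output-heavy rule can be checked with the prices in the comparison table. GPT-5.4 nano saves $0.05 per million input tokens and Gemini 3.1 Flash-Lite saves $0.25 per million output tokens, so the break-even sits at five input tokens per output token. The sketch below is a minimal cost calculator; the dictionary keys are illustrative labels rather than official API model identifiers, and the function names are hypothetical.

```python
# Prices in USD per million tokens, taken from the comparison table.
PRICES = {
    "gemini-3.1-flash-lite": {"input": 0.25, "output": 1.00},
    "gpt-5.4-nano": {"input": 0.20, "output": 1.25},
}


def workload_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """Total USD cost of a workload for the given token counts."""
    price = PRICES[model]
    total = input_tokens * price["input"] + output_tokens * price["output"]
    return total / 1_000_000


def cheaper_model(input_tokens: int, output_tokens: int) -> str:
    """Return the label of the cheaper model for this input/output mix."""
    return min(PRICES,
               key=lambda m: workload_cost(m, input_tokens, output_tokens))


# Input-heavy mix (10:1) versus a more balanced mix (2:1).
print(cheaper_model(10_000_000, 1_000_000))
print(cheaper_model(2_000_000, 1_000_000))
```

This compares price only. Benchmark quality, latency, and context length from the table would still need to be weighed separately.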

6.3 The monetisation model divergence — and what it means

ChatGPT’s ad launch and Gemini 3.1 Flash-Lite’s pricing reveal a fundamental strategic divergence between OpenAI and Google in how they intend to monetise AI at scale:

OpenAI’s model: The Dual Revenue Stack

OpenAI is building two parallel revenue streams:

- Subscription revenue from Plus ($20/month), Pro ($200/month), Team, and Enterprise tiers — direct payment for premium AI capability

- Advertising revenue from free and Go tiers — monetising the 90%+ of ChatGPT users who will never pay a subscription

This is the classic “freemium with advertising” model — familiar from Spotify, LinkedIn, YouTube. The advertising inventory is a direct benefit of ChatGPT’s enormous free user base (estimated 500 million monthly active users globally). At $60 CPM, even modest impression volumes generate substantial revenue.
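As a rough illustration of the CPM arithmetic, revenue is impressions divided by 1,000, multiplied by the CPM. The sketch below assumes one ad impression per month for each of the estimated 500 million monthly active users. That volume is a hypothetical chosen for the example, not a reported figure, and it ignores that paid tiers are ad-free. `ad_revenue_usd` is a hypothetical helper.

```python
def ad_revenue_usd(impressions: int, cpm_usd: float) -> float:
    """Revenue for a number of ad impressions at a given CPM
    (cost per thousand impressions)."""
    return impressions / 1_000 * cpm_usd


# Hypothetical volume: one impression per monthly active user per month.
monthly_impressions = 500_000_000
monthly_revenue = ad_revenue_usd(monthly_impressions, cpm_usd=60.0)
print(f"${monthly_revenue:,.0f} per month")
```

Under that single-impression assumption the result is $30 million per month, and it scales linearly with impressions per user.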

Google’s model: Infrastructure Cost Race to Zero

Google’s strategy is to make AI inference so cheap that the decision to use Google AI infrastructure is economically obvious for developers and enterprises — capturing the developer ecosystem the way Android captured the mobile ecosystem. Gemini 3.1 Flash-Lite at $0.25/M tokens is a step in that direction: it makes switching from OpenAI to Google infrastructure economically rational for a large class of workloads.

Google does not need to win on CPM or subscription revenue. It has $300 billion in annual advertising revenue from Google Search and YouTube. What it needs from Gemini is developer ecosystem lock-in and enterprise cloud revenue from Google Cloud. Both are served by the lowest possible inference price.

The two models will likely coexist — OpenAI winning on brand, consumer loyalty, and premium capability; Google winning on price, infrastructure scale, and developer adoption. But the divergence in strategy creates a clear choice for enterprises: if you prioritise capability and brand, OpenAI. If you prioritise cost and scalability, Google.

7. The Five Stories Are One Story

7.1 The platform wars always end in hardware

Every major software platform war in the technology industry’s history has concluded with the same outcome: the winner was the company that controlled the hardware distribution layer, not just the software capability.

Microsoft won the PC era by controlling the OS that ran on every OEM laptop — not by building better laptops than anyone else. Google won search by being the default on the browser and, crucially, by building Android — the OS running on 75% of the world’s smartphones — giving it a distribution channel that no pure search competitor could match. Apple won the smartphone era by controlling the entire stack: chip, hardware, OS, and app store.

The AI era is following the same pattern with accelerating speed. The model capability differences between GPT-5.4, Claude Opus 4.6, and Gemini Ultra 3.0 are real but increasingly marginal for most use cases. The differences in hardware distribution — who is in your ear, on your wrist, embedded in your glasses, running on the chip in your pocket — will determine which AI becomes the default for billions of people.

OpenAI (Sweet Pea), Apple (Q.ai-powered AirPods + possible wearable pin), Meta (Ray-Ban smart glasses), Google (Pixel + Android), and Tesla/xAI (Optimus + future wearable) are all executing strategies that converge on the same insight: the AI that wins will be the AI that is physically closest to you by default.

7.2 Vertical integration is becoming the competitive moat

Tesla Terafab, Meta MTIA, Google TPU, Apple Neural Engine, and Amazon Trainium all tell the same story: the era of AI companies depending entirely on Nvidia for infrastructure is ending — not because Nvidia is failing, but because the companies with sufficient scale are finding it economically and strategically necessary to own more of their own stack.

Vertical integration in AI — owning the chip, the training infrastructure, the model, and the hardware device — creates a compound moat that is qualitatively different from any single-layer advantage. A company that controls all four layers can optimise across them in ways that no company depending on external suppliers for any layer can match. Apple’s M-series chips are faster for Apple Intelligence tasks than any equivalent external chip because they were co-designed with the software they run. Tesla’s AI4 chip is optimised for Optimus’s specific workloads in ways that a general-purpose Nvidia GPU cannot match.

As AI becomes infrastructure — as ubiquitous as electricity — the companies that own the generation, transmission, and distribution of that infrastructure will hold structural advantages that compound over decades.

7.3 OpenAI’s paradox is the decade’s defining story

OpenAI adding 3,500 people while its tools eliminate 53,000 jobs at other companies is not ironic. It is the defining economic story of the AI era: the organisations creating the technology of displacement are themselves exempt from it — at least for now.

The question of when AI tools will be capable enough to materially reduce the headcount needed to build the next generation of AI tools is one that every AI lab is asking privately. OpenAI’s internal coding agents, its own counterparts to tools such as Claude Code, already accelerate its development workflows. The inflection point that changes everything is the moment AI capability development becomes substantially self-accelerating, with AI contributing meaningfully to its own improvement without human-scale research organisations.

We are not there yet. But the 8,000-person OpenAI of 2026 is the last version of OpenAI that looks like a conventional technology company. What comes after may look like something without clear historical precedent.

8. The AI Device War: Full Competitive Landscape

| Company | Device / Hardware | Form Factor | AI Integration | Target Date | Key Advantage |
|---|---|---|---|---|---|
| OpenAI | Sweet Pea | Behind-ear earbud system | ChatGPT on-device (2nm + custom silicon) | H2 2026 | Best AI model + Jony Ive design |
| Apple | AirPods Pro (next gen) | In-ear earbuds | Gemini + Q.ai audio AI + Apple Intelligence | H2 2026 | 1.5B device ecosystem + best audio hardware |
| Apple | Wearable AI pin (rumoured) | Body-worn pin | Apple Intelligence + Gemini | 2027 | Brand trust + hardware integration |
| Meta | Ray-Ban Meta Glasses (Gen 3) | Smart glasses | Meta AI (Llama / Gemini if Avocado fails) | H2 2026 | Only wearable AI with proven mass adoption |
| Google | Pixel Buds Pro 2 | In-ear earbuds | Gemini Live + Personal Intelligence | H1 2026 | Deepest AI integration + cheapest model costs |
| Tesla/xAI | Optimus robot (consumer) | Humanoid robot | Macrohard (Grok + Digital Optimus) | 2027 | Only physical AI with full embodied intelligence |
| Samsung | Galaxy Buds 3 Pro | In-ear earbuds | Gemini on-device (Exynos AI chip) | Available now | Android ecosystem + affordable entry point |
| Amazon | Echo Frames Gen 3 | Smart glasses | Alexa AI (Claude-powered) | H2 2026 | Alexa installed base + Prime ecosystem |

Sources: TechCrunch, Mashable, Axios, Hypebeast, Silicon Republic, Yahoo Tech, Times of India, FintechWeekly, DevFlokers — March 2026

9. What This Means for Businesses

9.1 The AI interface layer is about to fragment — plan for it

For the past three years, the primary AI interface for most users has been a single channel: a chat window, either on web or mobile. ChatGPT, Claude, Gemini, Copilot — all fundamentally text-in, text-out interactions via a screen.

The devices announced this week — Sweet Pea, MTIA-powered Meta AI, next-generation AirPods — represent the fragmentation of that interface layer into ambient audio, visual overlay, and physical presence. Within 18–24 months, a significant portion of AI interactions will not happen in a chat window at all.

For businesses building AI products and customer-facing AI interfaces, this has immediate implications:

Design for voice-first from day one: If your AI product currently exists only as a text interface, now is the time to invest in voice interaction design — not as a future feature but as a core modality. The users migrating to Sweet Pea and next-gen AirPods will expect to interact with your AI product by speaking, not typing.

Context architecture must be ambient-ready: The context engineering frameworks being built for screen-based AI (query → context → response → query) need to evolve for ambient AI (continuous context → proactive response → environmental update → continuous context). The MCP infrastructure that enables tool use for screen-based agents is directly applicable to ambient agents, but the session model and the context persistence architecture both need to be redesigned.

API strategy must be hardware-agnostic: As AI is delivered through Sweet Pea (OpenAI API), AirPods (Apple Intelligence / Gemini API), Ray-Bans (Meta AI API), and Galaxy Buds (Gemini API), building your AI product on a single provider’s API creates hardware lock-in by proxy. The enterprises with the most durable AI product strategies are those using API abstraction layers that can swap the underlying model provider without rebuilding the product.
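One way to picture the abstraction layer described above is a thin router that maps each device surface to a backend, so product code never names a vendor directly. The sketch below is illustrative only: `ChatBackend`, `EchoBackend`, `ModelRouter`, and the surface names are hypothetical, and the stand-in backend does not call any real provider SDK.

```python
from dataclasses import dataclass
from typing import Dict, Protocol


class ChatBackend(Protocol):
    """Anything that can turn a prompt into a reply."""

    def complete(self, prompt: str) -> str: ...


@dataclass
class EchoBackend:
    """Stand-in for a real provider adapter (hypothetical; makes no network call)."""

    name: str

    def complete(self, prompt: str) -> str:
        # A real adapter would call the vendor's API here.
        return f"[{self.name}] {prompt}"


class ModelRouter:
    """Maps a device surface to a backend, with a default for unknown surfaces."""

    def __init__(self, default: ChatBackend) -> None:
        self._default = default
        self._routes: Dict[str, ChatBackend] = {}

    def register(self, surface: str, backend: ChatBackend) -> None:
        # Swapping providers for a surface is a one-line change here.
        self._routes[surface] = backend

    def complete(self, surface: str, prompt: str) -> str:
        backend = self._routes.get(surface, self._default)
        return backend.complete(prompt)


router = ModelRouter(default=EchoBackend("provider-a"))
router.register("earbuds", EchoBackend("provider-b"))

print(router.complete("earbuds", "What is next on my calendar?"))
print(router.complete("web", "Summarise this page"))
```

Because the product only ever calls `router.complete(...)`, changing which provider serves a given surface means registering a different adapter rather than rebuilding the product.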

9.2 ChatGPT advertising is a genuine opportunity right now — for Australian brands

The ChatGPT advertising platform is live, available, and reportedly priced 20–30% below US rates in the Australian market. The user base — educated, professional, high-income, technology-forward — is exactly the demographic that commands premium CPM rates in traditional digital advertising.

The early-mover advantage in AI advertising is analogous to the early-mover advantage in Google Search advertising in 2003 or Facebook advertising in 2012: the inventory is underpriced relative to its quality because demand has not yet caught up with supply. Brands that establish ChatGPT advertising presence in Q2 2026, while CPM rates are still below equilibrium, will capture brand awareness and conversion data that provides a durable advantage as the platform matures and pricing rises.

Recommended action: if your brand serves the professional, B2B, or high-income consumer segments, allocate a test budget to ChatGPT advertising in Q2 2026. The creative approach is different from traditional display — you are appearing in a context where the user is actively seeking help with a task, so ad copy should be solution-oriented and immediately useful, not interruption-based.
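To size a test budget, the CPM arithmetic can be sketched as follows. The $60 CPM and the 20–30% Australian discount are the figures reported in this article; the 5,000 budget is a hypothetical placeholder, and the sketch assumes the budget and the CPM are expressed in the same currency.

```python
def discounted_cpm(base_cpm: float, discount_pct: int) -> float:
    """CPM after applying a whole-number percentage discount."""
    return base_cpm * (100 - discount_pct) / 100


def impressions_for_budget(budget: float, cpm: float) -> int:
    """Whole impressions bought by `budget` at `cpm` (cost per 1,000 impressions)."""
    if cpm <= 0:
        raise ValueError("cpm must be positive")
    return int(budget * 1000 // cpm)


US_CPM = 60          # reported US rate
TEST_BUDGET = 5_000  # hypothetical test budget, same currency as the CPM

for pct in (0, 20, 30):
    cpm = discounted_cpm(US_CPM, pct)
    reach = impressions_for_budget(TEST_BUDGET, cpm)
    print(f"discount {pct}%: CPM {cpm:.2f}, impressions {reach}")
```

The point of running the numbers is to check whether a given test budget buys enough impressions to produce meaningful conversion data before committing more spend.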

9.3 Reassess your AI inference provider against Gemini 3.1 Flash-Lite

If your organisation is running high-volume AI inference workloads on GPT-5.4 nano or earlier model versions, the Gemini 3.1 Flash-Lite benchmarks warrant a formal reassessment.

At near-equivalent pricing, with a 1-million-token context window (versus GPT-5.4 nano’s 200,000), superior benchmark scores on reasoning and coding, and faster time-to-first-token, Gemini 3.1 Flash-Lite is the better choice for a significant class of enterprise workloads, particularly those involving long documents, extended context requirements, or coding-adjacent tasks.

The switching cost is lower than it has ever been: MCP-compatible agent frameworks abstract model selection, making it relatively straightforward to run the same workflow on different model backends for cost and capability comparison. Run the test before committing to either provider at scale.

10. FAQ

What is OpenAI Sweet Pea?

OpenAI Sweet Pea is the codename for OpenAI’s first consumer hardware device — an AI earbud system developed in partnership with Jony Ive’s design firm io, targeted for H2 2026 release. The device features a behind-the-ear form factor with pill-shaped in-ear modules and a metal egg-shaped main body. It runs on a 2nm Exynos smartphone-grade chip combined with custom AI inference silicon, enabling on-device ChatGPT responses without requiring a cloud connection for most tasks. It can proxy actions through iPhone via Siri, acting as a hands-free AI computer. Sam Altman has described it as “more peaceful and calm than an iPhone” — positioning it as a screen-free, ambient AI presence.

When is OpenAI releasing its wearable device?

OpenAI’s Sweet Pea AI earbud device is targeted for the second half of 2026 (H2 2026). Foxconn has been confirmed as manufacturer, with contracts running through 2028 for multiple OpenAI hardware devices. No specific launch date has been announced. The device is being designed by Jony Ive’s hardware firm io, in which OpenAI acquired a majority stake for several billion dollars.

What is Tesla Terafab?

Tesla Terafab is Tesla’s own chip fabrication project, announced by Elon Musk in March 2026, aimed at giving Tesla and xAI fully vertically integrated control over AI chip design and manufacturing — eliminating dependence on external fabrication partners including TSMC and Samsung. Terafab is part of Musk’s broader strategy to build a completely self-sufficient AI infrastructure stack spanning chip design (Tesla AI4), chip fabrication (Terafab), AI models (Grok), physical execution AI (Digital Optimus), and integrated intelligence (Macrohard).

What is the Macrohard project?

Macrohard is a joint project between Tesla and xAI announced by Elon Musk in March 2026. It pairs two AI systems in a dual-process architecture inspired by Nobel laureate Daniel Kahneman’s model of human cognition: Grok (xAI’s large language model) acts as “System 2” — slow, deliberate reasoning and planning — while Tesla’s Digital Optimus AI acts as “System 1” — fast, instinctive physical execution. Together they form a complete AI cognitive architecture designed to run Tesla’s Optimus humanoid robots, enabling the robot to plan, reason, and act in the physical world with full AI autonomy.

What are Meta’s MTIA chips?

Meta’s MTIA (Meta Training and Inference Accelerator) chips are custom-designed AI silicon developed by Meta to replace Nvidia GPUs for AI inference across its data centres. Meta announced four new chips in the MTIA 300–500 series in March 2026, targeting content ranking (MTIA 300), ad inference (MTIA 350), recommendation at scale (MTIA 400), and generative AI inference (MTIA 500). Meta’s goal is to eliminate its dependency on Nvidia for AI inference workloads across Facebook, Instagram, Reels, WhatsApp, and Threads by 2027, significantly reducing its AI infrastructure costs.

Does ChatGPT have ads now?

Yes. OpenAI launched advertising on ChatGPT on February 9, 2026. Free ChatGPT users and $8/month “Go” users see contextual banner ads in the conversation interface. Paid Plus ($20/month), Pro ($200/month), and Enterprise users remain ad-free. ChatGPT advertising is priced at $60 CPM (cost per thousand impressions) — among the highest rates in digital advertising, reflecting the high-intent, task-active context of ChatGPT users. In the Australian market, CPM rates are reportedly 20–30% below US rates during the platform’s early rollout phase.

How much does Gemini 3.1 Flash-Lite cost?

Gemini 3.1 Flash-Lite is priced at $0.25 per million input tokens and $1.00 per million output tokens. It competes directly with OpenAI’s GPT-5.4 nano ($0.20 input / $1.25 output) in the ultra-low-cost AI inference tier. Gemini 3.1 Flash-Lite outperforms GPT-5.4 nano on MMLU, HumanEval, and MATH benchmarks, delivers faster time-to-first-token (180ms vs 240ms), and offers a 1-million-token context window compared to GPT-5.4 nano’s 200,000-token limit. GPT-5.4 nano’s only price advantage is a 20% lower input rate; its output rate is 25% higher.
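Because one model is cheaper on input and the other on output, which is cheaper overall depends on a workload's input-to-output token mix. The sketch below works that out from the list prices quoted in this answer; the function names and the sample token counts are illustrative assumptions, and it ignores volume discounts, caching, and context-window limits.

```python
from decimal import Decimal

# USD per million tokens as (input, output), as quoted in this article.
PRICES = {
    "gemini-3.1-flash-lite": (Decimal("0.25"), Decimal("1.00")),
    "gpt-5.4-nano": (Decimal("0.20"), Decimal("1.25")),
}
MILLION = Decimal(1_000_000)


def workload_cost(model: str, input_tokens: int, output_tokens: int) -> Decimal:
    """List-price cost in USD for a given token mix."""
    price_in, price_out = PRICES[model]
    total = Decimal(input_tokens) * price_in + Decimal(output_tokens) * price_out
    return total / MILLION


def break_even_input_per_output(model_a: str, model_b: str) -> Decimal:
    """Input tokens per output token at which both models cost the same.

    Solves a_in*i + a_out*o = b_in*i + b_out*o for the ratio i/o.
    """
    a_in, a_out = PRICES[model_a]
    b_in, b_out = PRICES[model_b]
    if a_in == b_in:
        raise ValueError("identical input prices: no break-even ratio")
    return (b_out - a_out) / (a_in - b_in)


ratio = break_even_input_per_output("gemini-3.1-flash-lite", "gpt-5.4-nano")
print(f"break-even input:output ratio = {ratio}")

# Illustrative token mixes (hypothetical workloads).
for tokens_in, tokens_out in ((10_000_000, 1_000_000), (2_000_000, 1_000_000)):
    for model in PRICES:
        print(model, tokens_in, tokens_out, workload_cost(model, tokens_in, tokens_out))
```

On these list prices the two models cost the same at five input tokens per output token. Below that ratio Gemini 3.1 Flash-Lite is cheaper; above it GPT-5.4 nano is cheaper, so input-heavy workloads are worth pricing explicitly rather than assuming parity.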

Who is winning the AI hardware race?

No single winner has emerged in the AI hardware race as of March 2026, but clear strategic positions have formed: Apple leads on hardware quality, device ecosystem scale (1.5 billion active devices), and audio AI capability via Q.ai; Meta leads on proven consumer wearable AI adoption (10+ million Ray-Ban Meta glasses sold); OpenAI leads on AI model capability and brand recognition with Sweet Pea in development; Tesla/xAI leads on physical AI integration (Optimus robots + Macrohard); and Google leads on model cost efficiency and Android distribution. The winner of the AI hardware race will be the company whose device becomes the default ambient AI interface for the largest number of users — a race that will not be decided until 2027 at the earliest.

This article was researched and written by the Kersai Research Team. Kersai is a global AI consultancy firm dedicated to helping enterprises confidently navigate the rapidly evolving artificial intelligence landscape — from cutting-edge strategic insights to practical, large-scale AI implementation. To learn more, visit kersai.com.