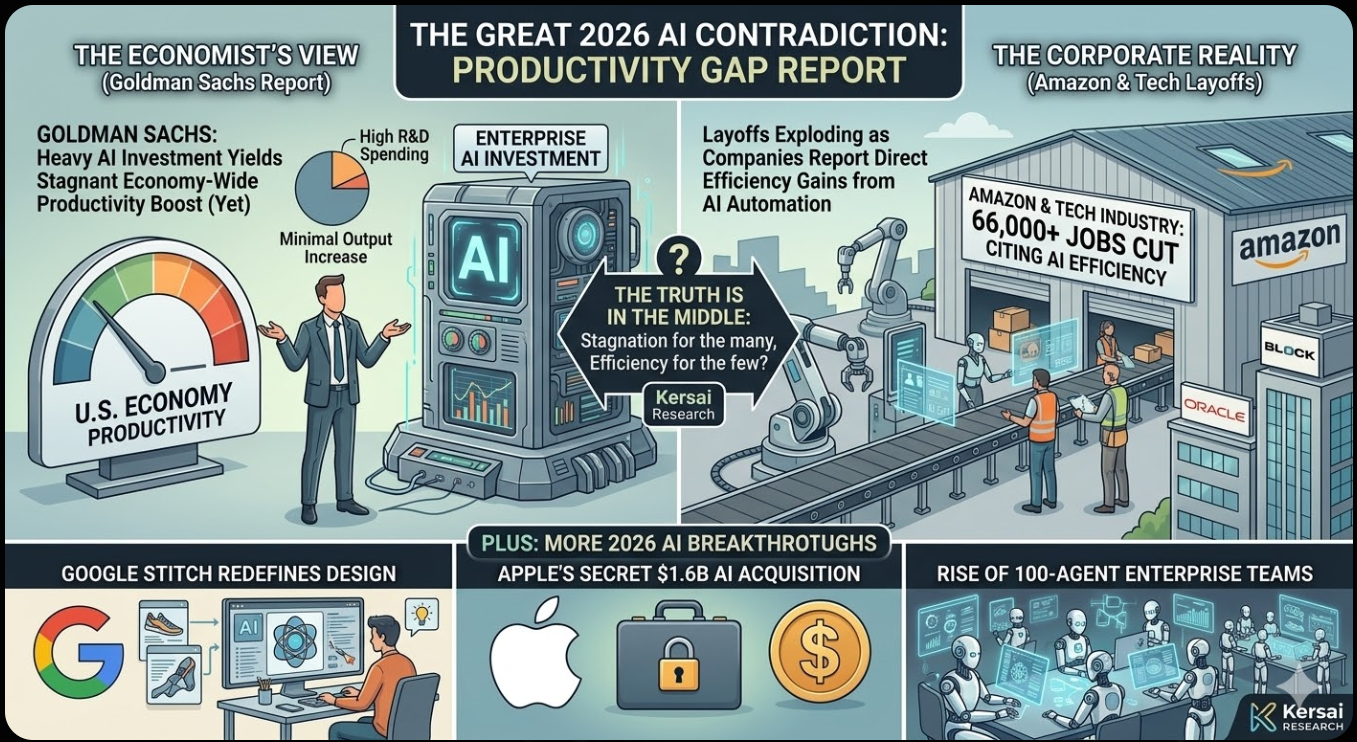

Goldman Sachs Says AI Hasn’t Boosted the Economy. Amazon Just Cut 30,000 Jobs Because It Has. Here’s the Truth.

The Biggest Contradiction in AI Right Now — Plus Google’s “Vibe Designing” Tool, Apple’s $1.6 Billion Secret Acquisition, and the 100-Agent Enterprise Teams Running Without Humans

Published: March 24, 2026 | By the Kersai Research Team | Reading Time: ~26 minutes

Last Updated: March 24, 2026

Quick Summary: Goldman Sachs published a major economic analysis finding “no meaningful relationship between AI and productivity at the economy-wide level”, despite $667 billion in global AI capital expenditure in 2026. In the same period, Amazon cut 30,000 corporate jobs citing AI, Oracle set aside $2.1 billion for AI-driven restructuring, and Block eliminated 40% of its workforce. How can AI be simultaneously failing to move the economic needle and eliminating tens of thousands of jobs? The answer to that paradox is the most important thing any business leader can understand about AI in 2026.

Alongside that core story: Google quietly launched Stitch, a free “vibe designing” tool that generates complete app and web interfaces from a text prompt, threatening the core workflow of every UI/UX designer alive. Apple spent $1.6 billion on an Israeli AI audio startup that almost no one in the mainstream tech press covered, and the acquisition points directly at the next generation of AirPods and a rumoured AI wearable. And inside enterprise technology teams, a new architecture is emerging: coordinated teams of 50–100 AI agents operating in parallel, each with a specialised role, communicating and delegating without human involvement in the internal coordination.

Table of Contents

- The Contradiction at the Heart of AI in 2026

- Story 1: Goldman Sachs — No AI Productivity Gains (Except Where It Counts)

- Story 2: Amazon Joins the AI Jobs Reckoning — 30,000 Cuts and Counting

- Resolving the Contradiction: Why Both Goldman and Amazon Are Right

- Story 3: Google Stitch — “Vibe Designing” Arrives and Designers Are Nervous

- Story 4: Apple’s $1.6 Billion Secret — The AI Acquisition Nobody Covered

- Story 5: The 100-Agent Enterprise Team — Agentic AI’s Next Phase

- The Five Stories Are One Story

- What This Means for Businesses

- The AI Productivity Paradox: A Framework for Leaders

- FAQ

1. The Contradiction at the Heart of AI in 2026

Hold two facts in your head simultaneously.

Fact one: Goldman Sachs, one of the world’s most rigorous and well-resourced economic research organisations, published an analysis in March 2026 finding that despite hundreds of billions of dollars in AI investment, there is “no meaningful relationship between AI and productivity at the economy-wide level.” AI’s estimated contribution to US GDP growth is 0.1–0.2 percentage points. Essentially zero.

Fact two: In the same 90-day period, Amazon announced 16,000 corporate layoffs while citing AI, taking its cumulative corporate cuts to roughly 30,000. Oracle set aside $2.1 billion to restructure thousands of roles made redundant by AI. Block eliminated 40% of its entire global workforce because, per its CEO, AI made those positions structurally unnecessary. Combined with cuts at Atlassian, Salesforce, and others, more than 53,000 job cuts at major technology companies carry an AI attribution in the Q1 2026 tally.

If AI is not boosting the economy, why are more than 50,000 people losing their jobs to it?

If AI is eliminating so many jobs, why isn’t it showing up in productivity statistics?

The answer to this apparent paradox is not that one of these facts is wrong. Both are correct. The paradox is real — and understanding it is more valuable than any individual AI tool, model, or capability comparison we could discuss this week.

This article resolves the paradox, covers four additional major stories from the past 48 hours, and gives you the framework to act on all of it.

2. Goldman Sachs: No AI Productivity Gains — Except Where It Counts

2.1 The report

On March 3, 2026, Fortune and Yahoo Finance reported on a major Goldman Sachs economic analysis authored by senior economist Ronnie Walker. The headline immediately ignited debate across AI forums and LinkedIn communities: “Goldman finds no meaningful relationship between AI and productivity.”

The full Goldman Sachs research paper — published on goldmansachs.com on March 17 under the title “How Will AI Affect the US Labor Market?” — provides the most rigorous economy-wide analysis of AI’s actual, measured productivity impact published to date by a major financial institution.

Its findings challenge the dominant AI industry narrative directly. They deserve careful, complete engagement — not dismissal, and not panic.

2.2 The core finding: economy-wide, AI is invisible

Goldman’s analysis examined productivity data across the US economy, cross-referenced with AI adoption metrics, AI-related patent filings, AI hiring signals in job postings, and enterprise AI investment data.

The conclusion:

“We still do not find a meaningful relationship between productivity and AI adoption at the economy-wide level.”

Despite an estimated $667 billion in global AI capital expenditure forecast for 2026 — a 62% increase from 2025’s $413 billion — AI’s estimated contribution to US GDP growth is approximately 0.1–0.2 percentage points.

For context: that is the equivalent of a rounding error in most economic models. It is not statistically distinguishable from noise. By economy-wide productivity measures, the AI era has not yet happened.

2.3 The productivity paradox is not new — but it is newly urgent

This finding is not unique to AI. It echoes a pattern that economic historians have documented across every major technological transition in the industrial era.

The most famous example is the Solow Paradox, named after Nobel economist Robert Solow, who observed in 1987: “You can see the computer age everywhere except in the productivity statistics.” Computers were transforming offices, workflows, and industries throughout the 1970s and 1980s — but measurable economy-wide productivity growth was flat or declining for two decades.

Then, in the mid-1990s, productivity growth surged — reaching levels not seen since the post-war boom. The computers that had been “failing” for 20 years suddenly delivered their gains all at once, after a long period of investment, learning, and workflow redesign.

The pattern across major technological transitions:

| Technology | Investment Boom | Productivity Lag | Eventual Surge |
|---|---|---|---|
| Railways (UK) | 1840s–1860s | 20–30 years | 1870s–1890s |
| Electricity | 1880s–1910s | 25–30 years | 1920s–1940s |
| Computers | 1970s–1980s | 15–20 years | Mid-1990s |
| Internet | 1990s–2000s | 10–15 years | 2005–2015 |
| AI | 2022–present | ? | ? |

Goldman’s economists are not saying AI will not deliver productivity gains. They are saying it has not delivered them yet — and that this is historically normal for transformative technologies at this stage of adoption.

2.4 The exception: 30% gains in two specific domains

The finding that received far less coverage than the “no productivity gains” headline: Goldman found significant, measurable productivity gains from AI in two specific, localised domains.

Customer support: Companies deploying AI for customer service have achieved productivity gains of approximately 30%, measured in cost per resolution, time per resolution, and customer satisfaction scores. This is consistent with Oracle and Block’s experience: these are the roles being eliminated, because the same support workload can now be handled by a smaller team.

Software development tasks: Teams using AI coding assistants — Claude Code, GPT-5.4 for code generation, Copilot — show productivity gains of approximately 30% on defined coding tasks. Lines of code per engineer per day, bug fix turnaround time, and feature delivery speed all show measurable improvement.

Two sectors. Thirty percent gains. Consistent, measurable, reproducible.

The rest of the economy: near zero.

2.5 The labour market signal Goldman is watching

Beyond productivity, Goldman identified a leading indicator that suggests economy-wide effects may be approaching: companies that mention AI in the context of hiring are cutting job openings 12% faster than companies that do not.

Hiring data is a leading indicator here. When companies stop hiring for AI-affected roles before any effect shows up in productivity statistics, it means:

- The organisational changes are beginning before the outputs are measurable

- The “productivity” will show up in headcount reduction rather than output increase — which can take years to appear in economy-wide statistics

- The employment effects of AI are arriving faster than the productivity effects, consistent with prior technology transitions

Goldman’s long-term forecast: 11 million US workers (6–7% of the workforce) will eventually be displaced — with a net positive outcome over a 10–20 year horizon, but significant transitional disruption concentrated in the next 3–5 years.

3. Amazon Joins the AI Jobs Reckoning — 30,000 Cuts and Counting

3.1 The announcement

On January 27–28, 2026, Amazon published a corporate announcement — initially described by ABC Australia as “accidentally published” before its intended release — confirming 16,000 corporate layoffs in what the company described as an effort to “reduce layers and bureaucracy” and “invest further in AI and robotics.”

The announcement was covered by TechCrunch, CNN, Reuters, Yahoo Finance, Silicon Republic, and ABC Australia. CEO Andy Jassy simultaneously appeared at the World Economic Forum in Davos, where he made the relationship between AI and the layoffs explicit.

These 16,000 cuts were Amazon’s second major corporate restructuring round — bringing the company’s total corporate workforce reduction since 2023 to approximately 30,000 roles.

3.2 What Jassy said at Davos

Andy Jassy’s remarks at Davos are among the clearest statements from a major tech CEO about the expected long-term employment impact of AI:

“Over the next couple of years I could see us having fewer people than we had before. People are going to be impacted by what’s happening with AI over time.”

Jassy framed the layoffs not as a response to business difficulty but as a proactive structural reconfiguration in advance of further AI capability deployment. Amazon is eliminating the roles now — before AI has fully displaced them — to reduce transition costs and move toward a leaner, AI-augmented operating model.

3.3 The $100 billion question

The number that makes Amazon’s job cuts most staggering in context: Amazon spent close to $100 billion on AI in 2025 — the single largest AI investment by any company in a single year. The company that is spending the most on AI is simultaneously cutting the most corporate headcount citing AI.

This is not a contradiction. It is the business logic of AI adoption at scale: you invest enormous capital in AI systems, and those systems then reduce your need for the people whose work they take over. The $100 billion is the capital expenditure. The 30,000 job cuts are the operating expense reduction that the capital expenditure is meant to enable.

3.4 The full picture: 53,000+ jobs, one quarter, six companies

Amazon’s cuts, combined with the Oracle and Block announcements covered in yesterday’s Kersai article, paint the clearest picture yet of the pace of AI-driven workforce restructuring in early 2026:

| Company | Jobs Cut | Explicit AI Attribution | Timeframe |
|---|---|---|---|
| Amazon | 30,000 (cumulative) | ✅ Yes: “invest in AI, reduce bureaucracy” | Jan 2026 |
| Oracle | ~10,000+ | ✅ Yes: “AI makes these roles less necessary” | Mar 2026 |
| Block | ~3,800 (40% workforce) | ✅ Yes: “AI enabled a smaller team” | Mar 2026 |
| Atlassian | ~1,500 | ✅ Yes: AI tools reducing engineering headcount | Feb 2026 |
| Salesforce | ~2,000 | ✅ Yes: Agentforce replacing service roles | Feb 2026 |
| Microsoft | ~6,000 | ⚠️ Partial: “performance and restructuring” | Jan 2026 |
| Total | 53,000+ | | Q1 2026 |

Sources: TechCrunch, Bloomberg, Reuters, Silicon Republic, CNN — Q1 2026

More than 53,000 jobs cut across six major technology companies in a single quarter, with AI cited as a direct or contributing cause in every case. This is not anecdotal. It is a structural pattern.
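The total can be re-derived from the table with a few lines of Python. This is only a sanity check on the figures as listed: the "~" and "+" qualifiers are dropped, so the sum is a floor rather than an exact count, and the variable names are illustrative.

```python
# Approximate job cuts as listed in the table above ("~" and "+" qualifiers dropped).
job_cuts = {
    "Amazon": 30_000,     # cumulative figure, per section 3.1
    "Oracle": 10_000,
    "Block": 3_800,
    "Atlassian": 1_500,
    "Salesforce": 2_000,
    "Microsoft": 6_000,   # only partially attributed to AI
}

total = sum(job_cuts.values())
explicit_ai_only = total - job_cuts["Microsoft"]

print(f"All six companies: {total:,}")
print(f"Excluding Microsoft (partial attribution): {explicit_ai_only:,}")
```

Separating out the partially attributed row shows how sensitive the headline number is to what counts as an "AI-cited" cut.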

4. Resolving the Contradiction: Why Both Goldman and Amazon Are Right

4.1 The paradox explained

Goldman says AI has not improved economy-wide productivity. Amazon cut 30,000 jobs because AI has made them unnecessary. Both are factually correct. Here is how:

AI’s productivity gains are narrow, deep, and concentrated — not broad and distributed.

Goldman’s economy-wide analysis measures productivity across the entire US economy: well over 150 million workers across every industry, from farming to healthcare to retail to finance to technology. In that vast average, the 30% gains in customer support and software development at technology companies are statistical noise.

Amazon, Oracle, and Block are not the economy. They are the narrow leading edge of the organisations best positioned to deploy AI at scale: technology-native, data-rich, digitally-integrated, and operating in the specific domains where AI is already demonstrably effective.

The productivity gains are real; they are just concentrated. And where they are concentrated, they are driving significant restructuring. The economy-wide signal will follow, but with a lag: the earlier transitions listed in section 2.3 took between 10 and 30 years to show up in the statistics.
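A back-of-the-envelope calculation shows how a 30% local gain can all but vanish in an economy-wide average. The sketch below assumes, purely for illustration, that output is proportional to labour input and that everyone outside the affected roles sees no gain. The share values are hypothetical and are not Goldman figures.

```python
def aggregate_gain(affected_share: float, local_gain: float) -> float:
    """Economy-wide productivity gain when only a share of labour input improves.

    Simplifying assumption: output is proportional to labour input and the
    unaffected share of the economy sees zero gain.
    """
    return affected_share * local_gain


LOCAL_GAIN = 0.30  # the ~30% domain-level gain cited in section 2.4

# Hypothetical shares of total labour input sitting in AI-affected roles.
for share in (0.005, 0.01, 0.05):
    gain = aggregate_gain(share, LOCAL_GAIN)
    print(f"affected share {share:.1%} -> economy-wide gain {gain:.2%}")
```

Under these assumptions, the affected share has to reach several percent of all labour input before the aggregate effect rises above a fraction of a percentage point.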

4.2 The three stages of AI’s economic impact

The Goldman analysis, combined with the historical patterns of prior technology transitions, points to a three-stage model for AI’s economic impact:

Stage 1: Investment without measurement (2022–2026, current)

Enormous capital investment in AI infrastructure, tools, and deployment. Early adopters — primarily technology companies — begin capturing productivity gains in specific, narrow domains. Economy-wide statistics show minimal impact because adoption is concentrated and productivity gains are offset by transition costs, learning curves, and organisational disruption. Job cuts begin in the most AI-affected roles at the most AI-native companies.

Stage 2: Diffusion and workflow redesign (2026–2030)

AI capabilities diffuse beyond technology companies into healthcare, finance, legal, education, manufacturing, and services. Organisations redesign workflows around AI execution rather than bolting AI onto human-designed processes. Productivity gains become measurable at the sector level. Workforce displacement accelerates but is offset by growing demand for AI management and governance roles. Economy-wide productivity statistics begin showing meaningful gains.

Stage 3: Structural transformation (2030+)

AI is embedded infrastructure — as ubiquitous as electricity or broadband. Productivity gains are substantial and economy-wide. New industries enabled by AI have scaled to significant employment. The jobs displaced in Stage 1 have been partially replaced by new roles, but the sectoral and geographic concentration of displacement has created lasting structural inequality for workers in the most-affected categories who did not successfully transition.

We are currently in Stage 1 — which means the economy-wide productivity statistics are where you would expect them to be for this point in the transition. Goldman’s finding is not evidence that AI is failing. It is evidence that we are where the historical model predicts we should be.

4.3 What this means for the “AI winter” narrative

Every time Goldman’s report is cited in isolation, without the 30% domain-specific gains and without the historical pattern of technology transitions, it feeds a growing “AI winter” narrative: the claim that AI has been overhyped, that it will not deliver on its promises, and that the investment bubble is about to burst.

This narrative is wrong, and it is important to say so clearly.

The evidence does not support an AI winter interpretation. It supports exactly the opposite:

- Model capability improvements are accelerating, not plateauing

- Enterprise adoption is expanding, not contracting

- The specific domains where AI delivers measurable gains are clearly identified and delivering consistently

- The organisations most deeply invested in AI deployment are executing large-scale restructurings that would only make sense if AI were delivering the expected gains at the operational level

What Goldman’s analysis confirms is that the gains are real but narrow, and that the economy-wide harvest is years away. That is not a winter. That is a spring that has not yet arrived everywhere — but has absolutely arrived in certain fields.

5. Google Stitch: “Vibe Designing” Arrives and Every Designer Is Paying Attention

5.1 What launched

On March 18, 2026, Google updated Stitch — its AI-native design platform, available free on Google Labs at stitch.withgoogle.com — with a major feature expansion that immediately sparked debate across design communities on Reddit, Dribbble, and LinkedIn.

Coverage came from Storyboard18 and SiliconAngle, with the story gaining significant traction in design and developer communities within 48 hours.

The core concept Stitch introduces: “vibe designing” — a deliberate parallel to the “vibe coding” concept (where developers describe what they want in plain English and AI writes the code) applied to visual and interactive interface design.

5.2 What Stitch actually does

Stitch takes a plain-English description of an interface and generates complete, interactive, production-ready UI designs — for apps, websites, dashboards, and mobile interfaces — without requiring any design software knowledge, design system familiarity, or visual design skills.

The new features in the March 2026 update:

Simultaneous multi-screen generation: Stitch now generates up to 5 connected screens simultaneously from a single prompt — maintaining visual and structural consistency across the entire user flow. Previously, AI design tools generated one screen at a time, forcing manual work to achieve consistency. Stitch generates the onboarding flow, the home screen, the settings page, and the key feature screens as a coherent set.

AI-infinite canvas: An unlimited workspace where Stitch can place, organise, and iterate on designs. The AI maintains awareness of the full design context — meaning changes to the design system, colour palette, or component style propagate across all related screens automatically.

Voice controls: Designers can modify designs by speaking — “make the navigation bar darker,” “change this button to a full-width CTA,” “apply the branding from our existing style guide” — without clicking through menus or manually adjusting elements.

AI design agent: A persistent AI assistant within Stitch that proactively suggests improvements — identifying accessibility issues, flagging inconsistencies, suggesting component alternatives from Google’s Material Design system, and recommending layout changes based on UX research data.

MCP server integration: Stitch connects directly to developer tools via the Model Context Protocol (MCP), allowing designs to be exported as functional code (React, Flutter, HTML/CSS) automatically. This closes the design-to-development handoff gap that has historically been one of the most costly and friction-heavy parts of the product development process.

5.3 A practical example: from idea to interactive prototype in minutes

To understand what “vibe designing” means in practice, consider the workflow transformation Stitch enables:

Before Stitch (traditional design workflow):

- Product manager writes a brief (1–2 days)

- Designer interprets the brief and creates wireframes in Figma (2–3 days)

- Stakeholder review and revision cycles (3–5 days)

- Designer produces high-fidelity mockups (2–3 days)

- Developer handoff — design to code translation (2–4 days)

- Total: 10–17 days from brief to functional prototype

With Stitch:

- Product manager types: “Design a mobile onboarding flow for a personal finance app targeting 25–40 year olds. Include account creation, bank connection, and a dashboard. Use a clean, professional aesthetic with green as the primary brand colour.”

- Stitch generates 5 connected screens with consistent design system, complete interactions, and export-ready code (15 minutes)

- Voice refinements: “Make the dashboard more data-forward. Add a spending chart above the transaction list.” (5 minutes)

- MCP export to React Native (10 minutes)

- Total: About 30 minutes from brief to functional prototype

This is not a marginal improvement. Counted in working hours (8-hour days), it is roughly a 160–270× acceleration of the design-to-prototype workflow.
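The ratio can be derived directly from the two timelines above, and it depends on how a "day" is counted. This sketch assumes an 8-hour working day; that assumption is ours and is not stated in the source figures.

```python
WORK_HOURS_PER_DAY = 8  # assumption: the day counts above are working days

# (min_days, max_days) for each step of the traditional workflow listed above
traditional_steps = {
    "brief": (1, 2),
    "wireframes": (2, 3),
    "review cycles": (3, 5),
    "high-fidelity mockups": (2, 3),
    "developer handoff": (2, 4),
}
stitch_minutes = 15 + 5 + 10  # generation + voice refinements + MCP export

min_days = sum(lo for lo, _ in traditional_steps.values())
max_days = sum(hi for _, hi in traditional_steps.values())
stitch_hours = stitch_minutes / 60

low = min_days * WORK_HOURS_PER_DAY / stitch_hours
high = max_days * WORK_HOURS_PER_DAY / stitch_hours

print(f"Traditional: {min_days}-{max_days} days; Stitch: {stitch_minutes} minutes")
print(f"Speed-up: {low:.0f}x to {high:.0f}x")
```

Under the working-day assumption the ratio lands in the hundreds. A comparison based on elapsed calendar time would be larger still.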

5.4 What this means for designers

The reaction in design communities has been predictably mixed — ranging from dismissal (“it makes generic, soulless interfaces”) to anxiety (“this is what happened to stock photographers”) to pragmatic adoption (“I use it for rapid prototyping and spend my time on the differentiated design work”).

The honest assessment, based on what Stitch currently does well and what it does not:

Stitch is excellent at:

- Rapid prototyping and stakeholder communication

- Standard UI patterns (e-commerce, SaaS dashboards, onboarding flows, mobile apps)

- Consistency enforcement across a design system

- Generating first-draft designs for non-designers to communicate intent

- Eliminating the design-to-code handoff for standard component libraries

Stitch struggles with:

- Genuinely novel interaction paradigms that depart from established patterns

- Brand identity and visual differentiation — it produces competent, not distinctive, design

- Complex information hierarchies that require deep UX research and testing

- Emotional resonance — the difference between a functional interface and an interface that delights

- Accessibility edge cases beyond the standard Material Design guidelines

The practical career implication for designers: the roles most threatened are junior and mid-level designers performing standard UI production work — the wireframing, mockup production, and component assembly that constitutes most design agency billing hours. The roles most protected are senior designers and design directors whose value is strategic direction, brand identity, and the judgment calls that AI cannot make — what to build, why, and for whom.

This is the same pattern as AI’s impact on software development: the junior production work is being automated, the senior judgment and direction work is being amplified. The career trajectory that survives is one that moves steadily toward the latter.

5.5 Try Stitch free today

Google Stitch is available free at stitch.withgoogle.com — no subscription, no waitlist for most users. If you are a founder, product manager, developer, or designer, 30 minutes with Stitch is the most efficient way to understand what “vibe designing” actually means in practice.

6. Apple’s $1.6 Billion Secret — The AI Acquisition Nobody in the Tech Press Covered

6.1 The acquisition

On January 29, 2026, Reuters confirmed that Apple had acquired Q.ai, an Israeli AI audio startup, for approximately $1.5–1.6 billion, making it the second-largest acquisition in Apple’s history, surpassed only by the $3 billion Beats Electronics purchase in 2014.

The deal was confirmed by Reuters and extensively reported by Israeli technology publication Calcalist Tech, which had followed Q.ai since its founding. In the mainstream US and international tech press — including TechCrunch, The Verge, and Wired — the acquisition received remarkably thin coverage relative to its scale. Most outlets ran brief news items without analysis.

That coverage gap is the opportunity: the Q.ai acquisition is one of the most strategically significant AI moves Apple has made in a decade, and almost no one has connected its implications to Apple’s product roadmap clearly.

6.2 What Q.ai built

Q.ai was founded by Yoav Freund — the same engineer who led the hardware and machine learning development of PrimeSense, the Israeli company whose depth-sensing technology powered the original Microsoft Kinect and later became the foundation of Face ID in Apple’s iPhone X.

Freund’s second company applied a similar philosophy — advanced sensory AI at the hardware-software intersection — to audio rather than visual depth sensing.

Q.ai’s core technologies, as reported by Calcalist Tech:

Whispered speech recognition

Q.ai developed AI models capable of accurately recognising and transcribing whispered speech — one of the hardest problems in audio AI, because whispered speech lacks the acoustic energy, pitch variation, and resonance characteristics that make standard speech recognition reliable. Q.ai’s model achieves near-parity accuracy on whispered speech versus normal speech — a capability no other commercial audio AI product has demonstrated at this quality level.

Challenging acoustic environment processing

AI models trained to reliably recognise speech in environments with significant background noise, reverberation, multiple simultaneous speakers, and distance attenuation. The technology works at ranges and in conditions where current voice assistant systems fail.

Wearable audio AI

Machine learning architectures optimised for the constraint profile of wearable audio devices — processing audio AI inference on-device, at low power, with the form-factor limitations of earbuds, hearing aids, and pin-sized devices.

Bone conduction and multi-sensor fusion

Audio AI that fuses bone conduction microphone data (which captures speech vibration through the skull rather than air) with standard microphone input — dramatically improving noise cancellation and speech isolation in high-noise environments.

6.3 What this tells us about Apple’s product roadmap

Apple does not make $1.6 billion acquisitions without a clear and near-term product application. Based on Q.ai’s technology profile, two product directions emerge with high confidence:

Direction 1: AirPods Pro — The Generation That Hears Everything

The next generation AirPods Pro will almost certainly incorporate Q.ai’s whispered speech recognition and challenging environment audio AI. The practical result: you will be able to whisper to Siri in a loud environment and have it understand you accurately — a capability that transforms AirPods from ambient audio devices into always-on personal AI interfaces. Combined with the Gemini integration confirmed for Siri (see our earlier coverage), next-generation AirPods become the most capable wearable AI device on the market.

Direction 2: Apple’s Rumoured AI Wearable Pin

Multiple independent sources have reported Apple is developing a small, wearable AI device — approximately AirTag-sized, designed to be worn as a pin or clip — targeted at 2027. If accurate, Q.ai’s wearable audio AI and miniaturised inference architecture are exactly the technology required. The wearable pin concept — a device that listens to your environment, understands context, and assists proactively without requiring you to raise your phone — is the product category that Humane’s AI Pin attempted and failed to deliver. Q.ai’s technology gives Apple the audio AI foundation to do it properly.

6.4 The Israel AI connection

Q.ai is the third major Israeli AI acquisition by a US technology company in the past 18 months:

- Apple / Q.ai: ~$1.6 billion (audio AI)

- Microsoft / Deci AI: $350 million (inference optimisation)

- Google / Wiz: $32 billion (cybersecurity AI, not pure AI)

Israel’s AI ecosystem — particularly in sensor AI, computer vision, cybersecurity AI, and edge inference — has emerged as one of the world’s most productive sources of frontier AI capability, driven by deep military technology investment and a research culture that prizes hardware-software integration. For enterprises building AI strategy, Israel’s AI startup ecosystem is a leading indicator of capability developments that will appear in consumer and enterprise products within 2–4 years.

7. The 100-Agent Enterprise Team — Agentic AI’s Next Phase

7.1 The emergence of agent teams

The AI agent conversation in enterprise technology has, until recently, focused on single agents: one AI system executing one defined workflow. A customer support agent. A coding agent. A research synthesis agent.

That framing is rapidly becoming outdated.

Across Reddit’s r/artificial and r/MachineLearning communities, LinkedIn’s enterprise AI strategy groups, and the enterprise technology forums where the practitioners building these systems share patterns, a new architectural model is dominating discussion: coordinated teams of 50–100 AI agents operating in parallel, each with a specialised role, communicating with each other, and producing outcomes that no single agent could achieve alone.

7.2 How a 100-agent team works

The architecture mirrors a human organisational structure — because that is what it is designed to replace or augment.

Consider an enterprise that deploys a 100-agent team for a competitive intelligence function:

The manager agent receives the objective: “Produce a complete competitive analysis of our top five competitors’ Q1 2026 product launches, pricing changes, and go-to-market strategy, with recommendations for our product roadmap.”

It decomposes the objective and delegates:

- 5 × research agents (one per competitor) assigned to scrape, retrieve, and summarise all publicly available information about each competitor’s Q1 activity — press releases, product announcements, pricing pages, job postings, patent filings

- 5 × social listening agents assigned to monitor each competitor’s LinkedIn, Twitter/X, and community presence — identifying sentiment, customer feedback, and strategic signals

- 5 × financial analysis agents assigned to review each competitor’s investor communications, earnings calls, and financial disclosures

- 10 × synthesis agents assigned to combine data from multiple research agents and produce structured analysis modules

- 1 × quality control agent assigned to review all synthesis outputs for factual accuracy, logical consistency, and completeness

- 1 × recommendation agent assigned to generate actionable product roadmap recommendations based on the complete analysis

All 28 agents in this example (the 27 specialists above plus the manager) operate simultaneously. The research agents use MCP tool access to query databases, retrieve web pages, and access financial data. The synthesis agents access the research agents’ outputs as they are produced. The manager agent monitors progress, reallocates resources when an agent encounters an obstacle, and assembles the final report.

Total wall clock time: 45–90 minutes.

Human equivalent: A team of 6–8 analysts working for 2–3 weeks.
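The delegation pattern described above can be sketched with Python’s asyncio. This is a minimal illustration and not a production orchestrator. The agents are stubs that return placeholder text, all function and role names are hypothetical, and the layers run one after another for simplicity, whereas the description above has synthesis agents consuming research output as it is produced.

```python
import asyncio

# Role counts from the competitive-intelligence example above (27 specialists + 1 manager).
ROLE_COUNTS = {
    "research": 5,
    "social_listening": 5,
    "financial_analysis": 5,
    "synthesis": 10,
    "quality_control": 1,
    "recommendation": 1,
}


async def run_agent(role: str, index: int, task: str) -> dict:
    """Stand-in for a real agent call; a real system would invoke a model here."""
    await asyncio.sleep(0)  # yield control so agents in a layer interleave
    return {"agent": f"{role}-{index}", "task": task, "output": f"[stub result for {task}]"}


async def run_layer(roles: list[str], task: str) -> list[dict]:
    """Run every agent in the given roles concurrently and collect the results."""
    jobs = [run_agent(role, i, task) for role in roles for i in range(ROLE_COUNTS[role])]
    return await asyncio.gather(*jobs)


async def manager(objective: str) -> dict:
    """Decompose the objective into layers and delegate each layer in turn."""
    gathered = await run_layer(["research", "social_listening", "financial_analysis"], objective)
    synthesis = await run_layer(["synthesis"], f"synthesise {len(gathered)} findings")
    review = await run_layer(["quality_control"], f"review {len(synthesis)} modules")
    final = await run_layer(["recommendation"], "draft roadmap recommendations")
    return {"gathered": gathered, "synthesis": synthesis, "review": review, "final": final}


report = asyncio.run(manager("Q1 2026 competitive analysis"))
worker_count = sum(len(layer) for layer in report.values())
print(f"{worker_count} specialist agents + 1 manager = {worker_count + 1} agents")
```

Each layer boundary is where the manager has a complete set of results in hand, which makes it a natural place to add verification before the next layer starts.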

7.3 The Goldman connection — why 30% gains are just the beginning

Goldman Sachs found 30% productivity gains from AI in software development specifically. That 30% was measured in studies of single AI coding assistants augmenting individual developers — tools like GitHub Copilot and basic Claude integrations.

Multi-agent coding teams — where a manager agent decomposes a feature request, delegates components to specialist agents, has a testing agent write and run tests, a review agent assess code quality, and a documentation agent produce technical documentation simultaneously — are producing gains that multiple practitioners on Reddit and LinkedIn describe as 5–10× above the single-agent baseline.

These gains are not yet in Goldman’s economy-wide statistics. The multi-agent architecture is still being deployed by early-adopter technology companies. But the trajectory is clear: as multi-agent architectures move from early adopters to mainstream enterprise deployment, the productivity gains that Goldman currently measures at 30% in narrow domains will expand in both magnitude and breadth.

7.4 The governance challenge: who is responsible for a 100-agent team?

The emergence of multi-agent architectures creates governance challenges that the industry has not yet fully resolved.

When a single AI agent produces an incorrect output, accountability is relatively clear: the human who deployed and configured the agent is responsible. When a 100-agent team produces an output that incorporates errors from three different research agents, a synthesis failure in one of the synthesis agents, and a recommendation based on the contaminated synthesis, accountability is far harder to assign.

Enterprise teams deploying multi-agent architectures are converging on four governance principles:

- Human principal accountability: A named human is responsible for every multi-agent team’s outputs, regardless of the internal complexity of how those outputs were produced

- Output verification at each delegation boundary: Human or automated checks at the handoff points between agent layers, not just at final output

- Audit trail requirements: Complete logging of every agent’s inputs, outputs, and tool calls — enabling post-hoc investigation when outputs are questioned

- Scope and authority limits: Explicit constraints on what each agent layer can do, what external systems it can access, and what decisions it can make autonomously versus escalate
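Three of these principles (a named accountable human, audit trails, and scope limits) can be enforced in code at the point where an agent calls a tool. The sketch below is one possible shape, not a reference implementation: `AgentPolicy`, `AuditLog`, `ScopeError`, and `call_tool` are hypothetical names, the tools are stand-in lambdas, and the owner and agent names are invented. A production system would write the log to durable, append-only storage.

```python
import json
import time
from dataclasses import dataclass, field


@dataclass(frozen=True)
class AgentPolicy:
    name: str
    owner: str                 # named human accountable for this agent's output
    allowed_tools: frozenset   # scope limit: tools this agent may call


@dataclass
class AuditLog:
    records: list = field(default_factory=list)

    def write(self, **event) -> None:
        """Append one JSON record per event, timestamped."""
        event["ts"] = time.time()
        self.records.append(json.dumps(event, sort_keys=True))


class ScopeError(PermissionError):
    """Raised when an agent tries to use a tool outside its policy."""


def call_tool(policy: AgentPolicy, tool: str, payload: str,
              tools: dict, log: AuditLog) -> str:
    """Check scope, run the tool, and log inputs and outputs either way."""
    if tool not in policy.allowed_tools:
        log.write(agent=policy.name, owner=policy.owner, tool=tool,
                  status="denied", input=payload)
        raise ScopeError(f"{policy.name} may not call {tool}")
    output = tools[tool](payload)
    log.write(agent=policy.name, owner=policy.owner, tool=tool,
              status="ok", input=payload, output=output)
    return output


# Stand-in tools; real ones would reach external systems.
TOOLS = {
    "search": lambda q: f"results for {q}",
    "send_email": lambda body: "sent",
}

log = AuditLog()
policy = AgentPolicy("research-1", "jane.doe", frozenset({"search"}))

result = call_tool(policy, "search", "competitor pricing", TOOLS, log)
try:
    call_tool(policy, "send_email", "hello", TOOLS, log)
    denied = False
except ScopeError:
    denied = True
```

Denied calls are logged as well as successful ones, so a post-hoc investigation can see what an agent attempted, not only what it was allowed to do.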

The NSW Digital Work Systems Act (covered in our March 19 article) is the first legislative framework to address the accountability question for AI-driven systems in workplace contexts. Multi-agent architectures will stress-test that framework — and every equivalent framework that other jurisdictions will introduce — as deployments scale.

8. The Five Stories Are One Story

8.1 AI is creating value unevenly — and the unevenness is the point

Goldman’s finding, Amazon’s layoffs, Google Stitch, Apple’s Q.ai acquisition, and multi-agent enterprise teams are all expressions of the same underlying dynamic: AI is creating enormous value in specific, well-defined domains while leaving the broader economy largely untouched — and the gap between these two realities is widening.

The companies and individuals inside the narrow leading edge — technology companies with the infrastructure and talent to deploy AI effectively, in the specific domains where it works — are capturing extraordinary gains and restructuring aggressively to lock them in. The companies and individuals outside that leading edge are, for the most part, still waiting.

This is not a technology problem. The technology is available to almost everyone — GPT-5.4 nano at $0.20 per million tokens, Google Stitch for free, DeepSeek V4 open source. The barrier is not access to AI. The barrier is the workflow redesign, context infrastructure, and governance capability required to deploy AI effectively enough to capture the gains.

8.2 The audio AI race has a new entrant — and it is the most important hardware company alive

Apple’s Q.ai acquisition, combined with the Gemini-Siri integration confirmed last month, signals that the next AI battleground is ambient audio — AI that listens to your environment continuously, understands context without being explicitly prompted, and assists proactively through the device you are already wearing in your ears.

This is the form factor that changes everything about AI adoption: it requires no screen, no keyboard, no deliberate action. It is simply always present, always listening, always capable — within the limits you define. Google, Apple, Amazon (Alexa), and Meta (Ray-Ban glasses) are all converging on this form factor from different directions. Q.ai’s technology gives Apple the audio AI capability to lead that race.

8.3 The multi-agent architecture is the infrastructure play of 2026

The 100-agent enterprise team is not a novelty or an experiment. It is the organisational model that the most capable AI-deploying enterprises are building toward — and the gap between organisations that understand this architecture and those that do not will be one of the defining competitive differentiators of the next 24 months.

The infrastructure for multi-agent teams — MCP for tool access, OpenClaw for agent communication, context engineering for information architecture, NemoClaw for hardware orchestration — is available now. The organisations that are building on it now are accumulating organisational learning and institutional capability that compounds over time.

9. What This Means for Businesses

9.1 Use Goldman’s report strategically — not as reassurance

If you have been watching the AI investment race from the sidelines and using “no evidence of economy-wide productivity gains” as justification for caution, Goldman’s report does not support that position.

What it supports is this: the gains are real, they are concentrated, and the organisations capturing them are restructuring aggressively. The gap between AI-native organisations and AI-cautious organisations is widening at pace. Waiting for economy-wide evidence means acting only after the train has left.

Use Goldman’s findings productively: they tell you exactly where to focus. Customer support and software development show 30% gains with current tools. Those are your highest-confidence starting points. Deploy there first, measure rigorously, and use the demonstrated results to build the business case for expansion.

9.2 Evaluate Google Stitch for your product development workflow this week

Google Stitch is free. It takes 30 minutes to evaluate. And if your organisation has any product development activity — internal tools, customer-facing apps, marketing websites, dashboards — it is directly relevant to your workflow and cost structure.

Specifically: assign a product manager or developer (not a designer) to produce a prototype of one of your existing or planned interfaces using Stitch, without any designer involvement. Compare the output quality, time investment, and iteration speed with your current process. The result of that experiment will tell you more about Stitch’s relevance to your organisation than any amount of reading about it.

9.3 Rethink your Apple device strategy

For enterprises making decisions about device procurement, voice interface strategy, or wearable AI integration, the Q.ai acquisition is a 12–18 month preview of Apple’s hardware roadmap.

Enterprises that have standardised on Apple hardware should expect:

- Next-generation AirPods Pro with significantly enhanced AI capability — potentially replacing dedicated voice AI devices for enterprise use cases

- A possible Apple AI wearable pin in 2027 that creates a new category of enterprise AI interface

Enterprises building voice and audio AI interfaces should assess: are you building for today’s voice interface capabilities, or designing for the capabilities that Q.ai’s technology will deliver in the next product cycle?

9.4 Start your multi-agent architecture with three agents, not 100

The 100-agent enterprise team is the destination. The starting point is much simpler — and starting is more important than starting big.

A minimum viable multi-agent architecture that demonstrates the value of the model has three roles:

- A research agent: Collects and synthesises relevant information for a defined task domain

- An execution agent: Produces the primary output (analysis, content, code, communication)

- A review agent: Checks the execution agent’s output for quality, accuracy, and completeness before delivery

This three-agent structure can be built in hours using existing frameworks (LangGraph, CrewAI, OpenClaw) and existing models (Claude Sonnet 4.6, GPT-5.4 mini, DeepSeek V4). The output quality improvement over a single-agent system is immediate and measurable. The governance overhead is manageable. The organisational learning is directly applicable to scaling toward more complex architectures.
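As a framework-free illustration of the control flow, the sketch below wires the three roles together in plain Python. It deliberately avoids the APIs of LangGraph, CrewAI, or any other framework. The three agent functions are stubs standing in for model calls, the stub reviewer rejects the first draft purely to demonstrate the revision loop, and `run_pipeline` and `max_rounds` are hypothetical names.

```python
def research_agent(task: str) -> list:
    """Stub: collect notes for the task (a real agent would query sources)."""
    return [f"fact about {task}"]


def execution_agent(task: str, notes: list, feedback: str = "") -> str:
    """Stub: produce the primary output, folding in reviewer feedback if any."""
    draft = f"Report on {task}: " + "; ".join(notes)
    if feedback:
        draft += f" [revised: {feedback}]"
    return draft


def review_agent(draft: str) -> tuple:
    """Stub: approve only revised drafts, to exercise the feedback loop."""
    if "revised" in draft:
        return True, ""
    return False, "add sources"


def run_pipeline(task: str, max_rounds: int = 3) -> tuple:
    """Research once, then alternate execution and review until approved."""
    notes = research_agent(task)
    feedback = ""
    for round_no in range(1, max_rounds + 1):
        draft = execution_agent(task, notes, feedback)
        approved, feedback = review_agent(draft)
        if approved:
            return draft, round_no
    # Bounded retries: hand off to a person rather than looping forever.
    raise RuntimeError("review did not approve within max_rounds; escalate to a human")


draft, rounds = run_pipeline("pricing")
print(rounds, draft)
```

Capping the review loop and escalating on failure keeps a named human in the path, in line with the governance principles discussed earlier.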

Start with three. Learn. Scale.

10. The AI Productivity Paradox: A Framework for Leaders

The Goldman finding creates a genuine strategic challenge for leaders trying to build the business case for AI investment: how do you justify investment in a technology that, by economy-wide measures, is not yet delivering returns?

The framework we recommend has three components:

10.1 Measure at the right level

Do not measure AI’s productivity impact at the company level or the economy level. Measure it at the workflow level.

Economy-wide averages hide the reality that some workflows are delivering 30%+ gains while adjacent workflows are delivering nothing. The relevant question is not “is AI improving our company’s productivity?” — it is “is AI improving the productivity of this specific workflow, and is that improvement sufficient to justify the deployment cost?”

Set up rigorous, workflow-level measurement before deployment. Define your baseline. Define your success metrics. Measure after 90 days. The results will be clear, actionable, and defensible to any sceptic — because they are real numbers from your actual operation, not economy-wide averages.
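A workflow-level measurement can be as simple as the calculation sketched here. Every number in the example is hypothetical and chosen only to reproduce a 30% throughput gain. The class name `WorkflowMeasurement` and its fields are illustrative, and the model assumes that saved hours can actually be redeployed or removed, which is not always true in practice.

```python
from dataclasses import dataclass


@dataclass
class WorkflowMeasurement:
    name: str
    baseline_units_per_hour: float  # measured before deployment
    after_units_per_hour: float     # measured after the 90-day period
    hours_per_month: float          # human hours spent on the workflow today
    loaded_hourly_cost: float       # fully loaded cost of one human hour
    monthly_ai_cost: float          # licences, inference, and maintenance

    @property
    def productivity_gain(self) -> float:
        """Relative throughput improvement, e.g. 0.30 for 30%."""
        return self.after_units_per_hour / self.baseline_units_per_hour - 1

    @property
    def monthly_hours_saved(self) -> float:
        """Hours no longer needed to produce the same monthly output."""
        ratio = self.baseline_units_per_hour / self.after_units_per_hour
        return self.hours_per_month * (1 - ratio)

    @property
    def monthly_net_benefit(self) -> float:
        """Value of saved hours minus the cost of running the AI system."""
        return self.monthly_hours_saved * self.loaded_hourly_cost - self.monthly_ai_cost


# Hypothetical support workflow: 10 tickets/hour before, 13 after.
support = WorkflowMeasurement(
    name="tier-1 support",
    baseline_units_per_hour=10,
    after_units_per_hour=13,
    hours_per_month=1000,
    loaded_hourly_cost=50,
    monthly_ai_cost=4000,
)

print(f"{support.name}: gain {support.productivity_gain:.0%}, "
      f"hours saved {support.monthly_hours_saved:.0f}, "
      f"net benefit {support.monthly_net_benefit:.0f}")
```

Note that a 30% throughput gain frees about 23% of the hours, not 30%, because the same output is now produced at 1.3 times the rate.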

10.2 Distinguish between efficiency gains and structural transformation

Goldman’s 30% gains in customer support and software development are efficiency gains — doing the same thing faster and cheaper. These are valuable and relatively easy to capture with current tools.

Structural transformation — like Amazon’s 30,000 job cuts — goes further: it changes what the organisation is and does, not just how efficiently it does it. Structural transformation produces larger and more durable competitive advantages but requires more investment, longer timelines, and deeper organisational change.

Your AI strategy should have both components:

- Short-term efficiency gains: Identify your customer support, data processing, and routine knowledge work functions. Deploy current tools. Capture the 30% gains. Fund the next wave with the savings.

- Medium-term structural transformation: Identify the roles and functions that AI will make structurally unnecessary in 24–36 months. Design the transition now. Retrain people proactively. Build the AI systems that replace the functions before the competitive pressure to do so becomes acute.

10.3 Build for the Stage 2 economy, not the Stage 1 economy

Goldman’s three-stage model (investment, diffusion, structural transformation) tells you where to position your AI infrastructure investment.

The organisations that captured the most value from the internet were not the ones that deployed email in Stage 1. They were the ones that built their core business models around internet infrastructure — Amazon, Google, Salesforce — in Stage 2, when diffusion made internet access ubiquitous.

The equivalent for AI: build your core operational processes and competitive differentiation around AI execution — not AI assistance — in 2026, during Stage 1, so that when Stage 2 diffusion arrives and AI capability becomes ubiquitous, your processes are already optimised for it while competitors are still adapting.

The close to $100 billion Amazon spent on AI infrastructure in 2025, combined with the roughly 30,000 corporate jobs it cut across 2025–2026, is Stage 2 positioning executed from a Stage 1 starting point. That is the playbook.

11. FAQ

Did Goldman Sachs say AI has no productivity gains?

Goldman Sachs published a March 2026 analysis finding “no meaningful relationship between AI and productivity at the economy-wide level,” despite approximately $667 billion in global AI capital expenditure forecast for 2026. However, the same analysis found approximately 30% productivity gains in two specific domains — customer support and software development — where AI deployment is most mature. Goldman’s finding is consistent with the historical pattern of transformative technologies (electricity, computers, the internet) that show near-zero economy-wide productivity impact for 10–20 years before a significant surge as adoption diffuses and workflows are redesigned.

Why is Amazon cutting jobs while spending $100 billion on AI?

Amazon cut approximately 30,000 corporate jobs in 2025–2026 citing AI efficiency and “eliminating bureaucracy,” while simultaneously spending close to $100 billion on AI infrastructure in 2025. This is not a contradiction — it is the business logic of AI investment at scale. Amazon spent capital to build AI systems that could perform functions previously performed by people; having built those systems, it is now eliminating the human roles the AI systems have made redundant. CEO Andy Jassy stated explicitly at Davos 2026: “Over the next couple of years I could see us having fewer people than we had before.”

What is Google Stitch AI?

Google Stitch is a free AI-native design platform available at stitch.withgoogle.com that generates complete, interactive app and web interfaces from plain-English descriptions. The March 2026 update introduced “vibe designing” — generating up to 5 connected screens simultaneously, voice controls for design modification, an AI design agent that suggests improvements, and MCP server integration for exporting designs directly to production code. It is designed to enable non-designers to produce professional-quality UI prototypes in minutes rather than days, and to eliminate the design-to-development handoff overhead for standard interface patterns.

What is vibe designing?

Vibe designing is a term for the practice of generating software user interfaces from natural language descriptions using AI tools, without requiring traditional design skills or design software. It is the design equivalent of “vibe coding” — where developers describe what they want in plain English and AI writes the code. Google Stitch is currently the most capable publicly available vibe designing tool, generating production-ready multi-screen interfaces from text or voice prompts. The term reflects the shift from precise, specification-driven design to approximate, intent-driven design where AI interprets and implements the designer’s vision.

What did Apple acquire Q.ai for?

Apple acquired Q.ai, an Israeli AI audio startup, for approximately $1.5–1.6 billion in January 2026, making it Apple’s second-largest acquisition ever. Q.ai specialises in whispered speech recognition, audio AI for challenging acoustic environments (noise, distance, multiple speakers), wearable device audio inference, and bone conduction/multi-sensor audio fusion. The acquisition is expected to power next-generation AirPods Pro with significantly enhanced AI audio capability, and potentially Apple’s rumoured AI wearable pin device targeted for 2027. Q.ai was co-founded by Aviad Maizels, who previously co-founded PrimeSense, the company whose depth-sensing technology became the foundation of Apple’s Face ID.

What is an AI agent team?

An AI agent team is a coordinated group of multiple AI agents — typically 10 to 100 — each assigned a specialised role, operating in parallel and communicating with each other to accomplish a complex objective. Modelled on human organisational structures, agent teams include manager agents that decompose objectives and delegate to specialist agents, research agents that gather information, synthesis agents that produce outputs, and review agents that check quality. The architecture enables AI to tackle complex, multi-step objectives that require parallel processing and specialisation — producing in minutes what a human team of equivalent expertise would take days or weeks to achieve.

How many jobs has AI eliminated in 2026?

In Q1 2026 alone, at least six major technology companies cut a combined total of more than 53,000 jobs, explicitly citing AI efficiency as a direct or contributing cause: Amazon (30,000 cumulative), Oracle (~10,000+), Block (3,800 — 40% of workforce), Atlassian (~1,500), Salesforce (~2,000), and Microsoft (~6,000). These figures represent only the explicitly AI-attributed job cuts at major publicly traded companies — the true number, including smaller organisations and cuts not publicly attributed to AI, is significantly higher. Goldman Sachs forecasts 11 million US workers (6–7% of the workforce) will eventually be displaced by AI over a multi-decade horizon.

Is AI actually helping the economy?

At the economy-wide level, AI’s measured contribution to productivity is currently minimal — 0.1–0.2 percentage points of GDP, per Goldman Sachs’s March 2026 analysis. However, in specific domains — particularly customer support and software development — AI is delivering measurable productivity gains of approximately 30%. The economy-wide impact gap is consistent with the historical pattern of transformative technologies: electricity showed near-zero economy-wide productivity impact for 25–30 years before a major surge in the 1920s–1940s. AI economists broadly expect the economy-wide productivity impact to become measurable as adoption diffuses from technology-native companies to mainstream enterprise deployment — a process expected to take 5–15 years from current levels.

This article was researched and written by the Kersai Research Team. Kersai is a global AI consultancy firm dedicated to helping enterprises confidently navigate the rapidly evolving artificial intelligence landscape — from cutting-edge strategic insights to practical, large-scale AI implementation. To learn more, visit kersai.com.