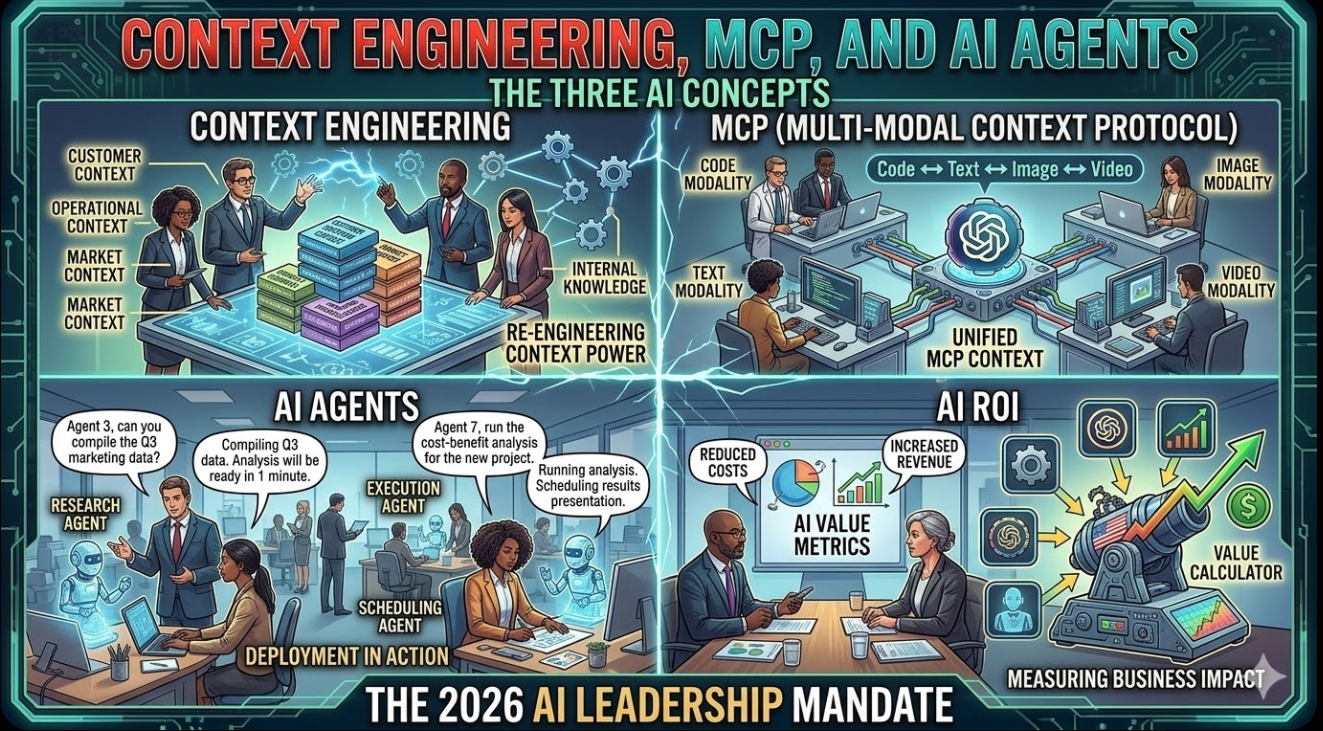

Context Engineering, MCP, and AI Agents: The Three AI Concepts Every Business Leader Needs to Understand in 2026

The Jargon Is Everywhere. Here Is What It Actually Means — And Why It Changes How You Build, Buy, and Deploy AI

Published: March 12, 2026 | By the Kersai Research Team | Reading Time: ~22 minutes

1. The Problem With AI Education in 2026

If you have spent any time in AI circles recently — on LinkedIn, Reddit, in boardrooms, or at technology conferences — you have noticed something: the vocabulary keeps changing, and nobody agrees on what anything means.

Twelve months ago, everyone was talking about prompt engineering. Now the same crowd is talking about context engineering. Six months ago, AI agents were a niche concept discussed by researchers. Now every SaaS company claims to have built one. And somewhere in the background, a technical standard called MCP is being described as “the USB-C of AI” — without most people having the faintest idea what that means or why it matters.

The problem is not that these concepts are genuinely difficult. They are not. The problem is that most explanations are written either for software engineers (too technical) or for marketing teams (too shallow) — leaving the business leaders who actually make AI investment and deployment decisions without a clear, usable framework.

This article is written for that gap.

By the end of it, you will understand:

- What context engineering actually is, why it has replaced prompt engineering as the most important AI skill, and what it means for how you use AI in your organisation.

- What MCP (Model Context Protocol) is, why every major AI lab has adopted it, and why it matters for any enterprise building AI-powered products or workflows.

- The real difference between AI agents and AI assistants, why the distinction matters enormously for enterprise AI strategy, and how to evaluate the “agentic AI” claims flooding your inbox.

No coding background required. No mathematics. No jargon left unexplained. Just clear, usable understanding.

2. Context Engineering: The Skill That Replaced Prompt Engineering

2.1 What prompt engineering was — and why it is not enough anymore

When large language models first became widely available in 2022 and 2023, the people who got the best results were the ones who were best at writing prompts.

A prompt is simply the instruction or question you give to an AI model. “Summarise this document.” “Write a marketing email for this product.” “Explain quantum computing to a 10-year-old.”

The insight that drove the “prompt engineering” movement was that the way you phrased your prompt made an enormous difference to the quality of the output. Specific prompts beat vague ones. Structured prompts beat unstructured ones. Prompts that included examples beat prompts that did not.

For a while, prompt engineering was a genuine skill — and a valuable one. Books were written. Courses were sold. LinkedIn profiles proudly listed “Prompt Engineer” as a job title.

Then three things happened that made prompt engineering, by itself, insufficient.

First, the models got dramatically better. GPT-4, Claude 3, and Gemini Ultra are so capable at interpreting intent that the marginal value of prompt craftsmanship has declined significantly. You no longer need to learn arcane phrasing tricks to get good outputs from a modern frontier model. You just need to be clear.

Second, AI moved from answering single questions to running multi-step workflows. When AI is doing a one-shot task — “write me a subject line” — prompt quality is the main variable. When AI is running a 20-step process, making decisions, calling external tools, and maintaining consistency across hours of work, prompt quality is a small part of the picture. What matters is the entire context in which the AI is operating.

Third, enterprises started building products and workflows on top of AI, not just using AI through a chat interface. When you are building a system — not just asking a question — you need to think architecturally about what information the AI has access to, when, and in what form. That is context engineering.

2.2 What context engineering actually is

Here is the clearest definition:

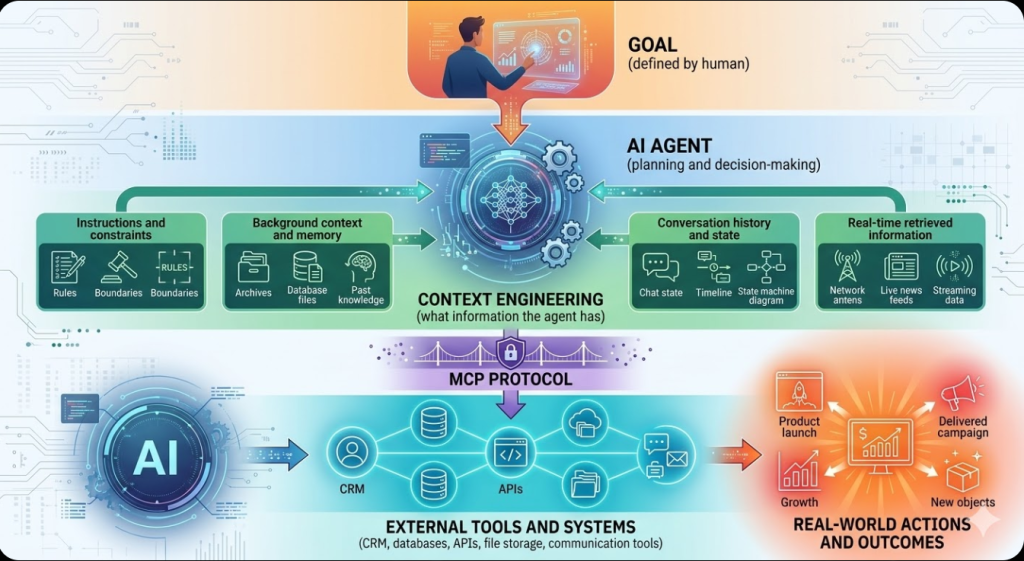

Context engineering is the discipline of designing, managing, and optimising the complete information environment in which an AI model operates — so that it consistently produces accurate, relevant, and useful outputs across complex, multi-step tasks.

While prompt engineering focuses on the wording of a single instruction, context engineering focuses on the entire system: what the AI knows, what it remembers, what tools it can use, what constraints it operates under, and how all of that information is structured and delivered.

Think of it this way.

Prompt engineering is like giving a new employee a well-worded task description: “Please write a client proposal for the Johnson account.”

Context engineering is everything that makes that employee capable of doing the task well: their understanding of your company’s style and values, their knowledge of the Johnson account’s history, their access to the client database and previous proposals, the guidelines they have been given about pricing and positioning, and the feedback loop that tells them whether their work is on the right track.

The words of the task description matter. But they matter far less than the overall context in which the work happens.

2.3 The seven components of context

Context engineering practitioners — and the r/ContextEngineering community on Reddit, which has been one of the most active AI forums in early 2026 — have converged on a framework of seven distinct components that together constitute the “context” an AI model operates in.

Understanding these components is the foundation of context engineering as a practical discipline.

Component 1: Instructions

The explicit directives that tell the AI what to do, how to behave, and what constraints to operate within. This includes system prompts (the background instructions set by the developer before the user interacts with the AI), persona definitions, and behavioural rules. Instructions are the foundation of context — they define the AI’s role and operating parameters.

Example: “You are a senior financial analyst at a global investment bank. Your outputs must always include a risk disclaimer. You never speculate about specific stock movements.”

Component 2: Background Context

Domain-specific knowledge that the AI needs to perform its task well but that is not part of the live conversation. This might include company information, industry knowledge, product specifications, regulatory frameworks, or any other information the AI needs to understand its operating environment.

Example: Providing an AI with your company’s complete pricing model, product catalogue, and competitive positioning before asking it to handle sales enquiries.

Component 3: Conversation History

The record of what has been said in the current interaction — including both the user’s inputs and the AI’s previous outputs. Managing conversation history is more complex than it sounds: AI models have context window limits, and intelligent decisions must be made about what to include, summarise, or discard as conversations grow longer.

Example: An AI customer service agent that remembers everything discussed earlier in a support session — including previous complaints, offered solutions, and the customer’s stated preferences.
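For technically inclined readers, the "include, summarise, or discard" decision can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not a production approach: the function name `trim_history` is hypothetical, the budget is counted in words rather than tokens, and older turns are replaced by a placeholder line instead of a real summary.

```python
def trim_history(turns: list[str], max_words: int) -> list[str]:
    """Keep the most recent turns that fit within a rough word budget.

    Older turns that do not fit are replaced by one placeholder line.
    A real system would likely count tokens and write a true summary.
    """
    kept: list[str] = []
    used = 0
    # Walk backwards so the newest turns are considered first.
    for turn in reversed(turns):
        words = len(turn.split())
        if used + words > max_words:
            break
        kept.append(turn)
        used += words
    kept.reverse()  # restore chronological order
    dropped = len(turns) - len(kept)
    if dropped:
        kept.insert(0, f"[{dropped} earlier turn(s) summarised or omitted]")
    return kept


session = [
    "Customer: my invoice is wrong",
    "Agent: which invoice number?",
    "Customer: invoice 1042, I was charged twice",
]
recent = trim_history(session, max_words=12)
```

The design choice worth noticing is that the newest turns are protected first, because they usually matter most to the next reply.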

Component 4: Memory

Information that persists across separate interactions or sessions. Unlike conversation history, which is confined to a single session, memory lets the AI recall relevant information from earlier interactions over time. This is one of the most technically challenging components to implement well, and one of the most commercially valuable when done right.

Example: A sales AI that remembers a prospect’s stated budget constraints from a conversation three weeks ago and incorporates that context into today’s follow-up.

Component 5: Tools and Actions

The external capabilities the AI can invoke — web search, database queries, API calls, code execution, calendar access, file management. Context engineering includes designing which tools the AI has access to, when it should use them, and what constraints apply to their use.

Example: An AI research assistant given access to your internal document library, a web search API, and a structured data query tool — but explicitly prohibited from sending external emails or modifying files.

Component 6: Retrieved Information (RAG Output)

Information retrieved in real time from external sources — databases, document libraries, knowledge bases, live APIs — in response to the specific task at hand. RAG (Retrieval-Augmented Generation) is the technical mechanism; context engineering governs how retrieved information is selected, formatted, and integrated into the AI’s working context.

Example: A legal AI that retrieves the specific contract clauses and relevant case law needed for the current task, rather than relying on general legal knowledge trained into the model.

Component 7: Conversation State

The structured tracking of where the AI is in a multi-step process — what steps have been completed, what decisions have been made, what the current objective is, and what comes next. State management is what allows AI systems to run complex workflows reliably rather than losing track of their position in a process.

Example: An AI that is managing a multi-week onboarding process for a new enterprise client, tracking which tasks have been completed, which approvals are pending, and which stakeholders have been contacted.
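For readers who find structure easier to grasp in code, the seven components can be pictured as seven fields in one record. The sketch below is illustrative only: the class name `ContextBundle`, its field names, and the `render` layout are assumptions made for this example, not part of any standard or product.

```python
from dataclasses import dataclass, field


@dataclass
class ContextBundle:
    """The seven components of context gathered in one place (illustrative names)."""
    instructions: str                                    # 1. system prompt, persona, rules
    background: str                                      # 2. domain knowledge
    history: list[str] = field(default_factory=list)     # 3. turns in the current session
    memory: list[str] = field(default_factory=list)      # 4. facts kept across sessions
    tools: list[str] = field(default_factory=list)       # 5. names of tools the AI may call
    retrieved: list[str] = field(default_factory=list)   # 6. RAG output for this task
    state: dict[str, str] = field(default_factory=dict)  # 7. position in the workflow

    def render(self) -> str:
        """Flatten the bundle into labelled sections a model could read."""
        sections = [
            ("INSTRUCTIONS", self.instructions),
            ("BACKGROUND", self.background),
            ("MEMORY", "\n".join(self.memory)),
            ("TOOLS", ", ".join(self.tools)),
            ("RETRIEVED", "\n".join(self.retrieved)),
            ("STATE", "\n".join(f"{k}: {v}" for k, v in self.state.items())),
            ("HISTORY", "\n".join(self.history)),
        ]
        # Skip empty sections so the model is not shown blank headings.
        return "\n\n".join(f"## {name}\n{body}" for name, body in sections if body)


bundle = ContextBundle(
    instructions="You are a senior financial analyst. Always include a risk disclaimer.",
    background="Company pricing model and product catalogue.",
    memory=["Prospect stated a budget constraint three weeks ago."],
    tools=["search_documents", "query_data"],
    state={"current_step": "draft_proposal"},
)
prompt = bundle.render()
```

Seen this way, a prompt is just one field among seven, which is the core argument of this section.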

2.4 Why this matters for your organisation

The shift from prompt engineering to context engineering has a direct implication for how organisations should approach AI investment and implementation.

Stop thinking about AI as a chat interface. Start thinking about AI as an information architecture problem.

The quality of your AI outputs is determined primarily by the quality of the context you provide — which means it is determined by:

- How well your internal knowledge, processes, and data are documented and structured.

- How effectively your AI systems can access and retrieve that information in real time.

- How well your memory and state management systems maintain continuity across complex, multi-step workflows.

- How precisely your instructions and constraints define the AI’s role and operating parameters.

Organisations that invest in these infrastructure components will consistently outperform organisations that invest the same budget in better prompts or more expensive models. The model is the least controllable variable in your AI system. The context is entirely within your control.

2.5 Context engineering in practice: three enterprise examples

Financial services — credit analysis workflow

A global bank replaces its credit analyst assistant system. Instead of building a better prompt for “analyse this credit application,” the context engineering team builds a system that:

- Maintains a memory of each applicant’s interaction history across all channels.

- Retrieves the applicant’s transaction data, credit bureau information, and sector risk profile in real time via RAG.

- Has access to the bank’s internal credit policy documents and regulatory compliance requirements as background context.

- Tracks the state of each application through a multi-stage approval workflow.

The result: analysts using the system produce more consistent outputs, make fewer errors on edge cases, and complete applications 60% faster — not because the prompt is better, but because the context is complete.

Healthcare — clinical documentation

A hospital system builds an AI documentation assistant. The context engineering includes:

- Patient history and current medication list retrieved from the EMR system at the start of each session.

- Department-specific clinical documentation standards as background context.

- Regulatory compliance constraints (HIPAA, clinical coding standards) as hard instructions.

- Memory of the clinician’s documentation preferences and common patterns from previous sessions.

The AI produces draft clinical notes that require minimal editing — not because the model is clever, but because the context ensures it always has the relevant patient and regulatory information available.

Content and marketing — brand consistency at scale

A global brand builds an AI content system for its marketing team. Context engineering includes:

- Complete brand guidelines, tone of voice documentation, and messaging hierarchy as background context.

- A library of approved content examples as RAG-retrievable reference material.

- Channel-specific constraints as instructions (LinkedIn tone vs Instagram tone vs email tone).

- Campaign state tracking so the AI maintains narrative consistency across a multi-week campaign without repeating messages or contradicting previous content.

The result: a marketing team of 12 produces content at the output level of a team of 40, with brand consistency scores that exceed the manual process.

3. MCP: The “USB-C of AI” — And Why It Changes Everything

3.1 The problem MCP solves

Imagine you want to build an AI system that can help your team manage projects. You want it to:

- Read and write tasks in your project management tool (Jira, Asana, Monday).

- Access relevant files in your document storage (Google Drive, SharePoint, Notion).

- Query your CRM for customer context (Salesforce, HubSpot).

- Send updates via your messaging platform (Slack, Teams).

- Run calculations using your internal data systems.

To build this, you need to connect your AI model to five different external systems. Each of those systems has its own API, its own authentication protocol, its own data format, and its own documentation. Your engineering team needs to write custom integration code for each one — and then maintain it as each system updates its API.

Now multiply that across an enterprise. Dozens of AI applications. Hundreds of tools. Thousands of custom integrations. Each one built slightly differently, each one fragile, each one requiring dedicated maintenance.

This was the state of enterprise AI integration in 2023 and 2024. It was slow, expensive, and structurally inefficient. The engineering cost of connecting AI to business systems was often larger than the cost of the AI itself.

MCP — the Model Context Protocol — was built to solve this problem.

3.2 What MCP is

Model Context Protocol (MCP) is an open standard that defines a universal method for AI models to connect to, communicate with, and use external tools and data sources.

It was developed and open-sourced by Anthropic in late 2024, and has since been adopted by OpenAI, Google, Microsoft, and virtually every major AI platform as the de facto standard for AI-tool integration.

The USB-C analogy that has become popular on Reddit and LinkedIn is genuinely apt:

- Before USB-C, every device had its own charging port. Laptops, phones, cameras, headphones — all different connectors, all requiring different cables, all incompatible with each other.

- USB-C created a single universal standard. One cable, one port, everything connects.

- Before MCP, every AI model had its own way of connecting to every tool. Building integrations required custom code for every combination of model and tool.

- MCP creates a single universal standard. One protocol, every tool, every model.

Under MCP, if a tool (say, your Jira project management system) is exposed through an MCP server, it can be connected to any MCP-compatible AI application, whether that application runs Claude, GPT-5, Gemini, or an open-source model, with little or no custom integration work. The connection method and the message format are standardised, and the protocol includes a common approach to authorisation.

3.3 How MCP actually works (without the code)

MCP defines three types of entities:

MCP Hosts

The AI applications or environments that need to access external tools. Claude Desktop, ChatGPT, a custom enterprise AI agent — these are all MCP hosts. The host is where the AI model lives and where user interactions happen.

MCP Clients

Components within the host that manage connections to external servers, with each client maintaining a dedicated connection to a single server. Think of the client as the “socket” that holds the plug. It handles the communication protocol, manages authentication, and routes requests between the AI model and the external tools.

MCP Servers

The tools, data sources, or systems that the AI wants to access. An MCP server is a lightweight piece of software that wraps an existing system (your CRM, your file storage, your database) in the MCP standard, making it accessible to any MCP-compatible AI model.

The flow works like this:

- A user asks the AI to do something that requires external information — “What is the status of the Johnson account renewal?”

- The AI recognises it needs CRM data to answer this.

- The MCP client connects to the Salesforce MCP server.

- The MCP server retrieves the relevant account data from Salesforce.

- That data is returned to the AI in a standardised format.

- The AI uses the data to answer the question accurately.

The user sees none of this. From their perspective, the AI just knows about the Johnson account. The MCP layer handles everything in between.
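For readers who do want to peek under the hood, the flow above can be imitated in a short Python sketch. MCP messages follow the JSON-RPC 2.0 format, and `tools/list` and `tools/call` are method names from the protocol. Everything else here is a simplifying assumption: `ToyCrmServer`, `ToyClient`, `FAKE_CRM`, and the `get_account_status` tool are hypothetical, the client calls the server directly instead of using a real transport, and the initial handshake a real session performs is omitted. It does not use an official MCP SDK.

```python
import json
from typing import Optional

# Hypothetical CRM data standing in for Salesforce.
FAKE_CRM = {"Johnson": {"renewal_status": "contract sent, awaiting signature"}}

# Tool description in the shape MCP servers advertise (name, description, input schema).
TOOL_DEFINITION = {
    "name": "get_account_status",
    "description": "Look up the renewal status of an account",
    "inputSchema": {
        "type": "object",
        "properties": {"account": {"type": "string"}},
        "required": ["account"],
    },
}


class ToyCrmServer:
    """Stands in for an MCP server that wraps a CRM."""

    def handle(self, raw_request: str) -> str:
        request = json.loads(raw_request)
        method = request["method"]
        params = request.get("params", {})
        if method == "tools/list":
            result = {"tools": [TOOL_DEFINITION]}
        elif method == "tools/call" and params.get("name") == "get_account_status":
            record = FAKE_CRM.get(params["arguments"]["account"])
            text = record["renewal_status"] if record else "account not found"
            result = {"content": [{"type": "text", "text": text}], "isError": record is None}
        else:
            # Simplification: anything unsupported is reported with the
            # JSON-RPC 2.0 "method not found" code.
            error = {"code": -32601, "message": "Method not found"}
            return json.dumps({"jsonrpc": "2.0", "id": request["id"], "error": error})
        return json.dumps({"jsonrpc": "2.0", "id": request["id"], "result": result})


class ToyClient:
    """Stands in for the MCP client inside the host application."""

    def __init__(self, server: ToyCrmServer) -> None:
        self.server = server
        self.next_id = 1

    def request(self, method: str, params: Optional[dict] = None) -> dict:
        message = {"jsonrpc": "2.0", "id": self.next_id, "method": method}
        if params is not None:
            message["params"] = params
        self.next_id += 1
        # A real client would send this over a transport; here it is a direct call.
        return json.loads(self.server.handle(json.dumps(message)))


client = ToyClient(ToyCrmServer())
available = client.request("tools/list")["result"]["tools"]
reply = client.request(
    "tools/call",
    {"name": "get_account_status", "arguments": {"account": "Johnson"}},
)
answer = reply["result"]["content"][0]["text"]
```

The point of the sketch is that the request and response shapes stay the same whichever system sits behind the server, which is what makes the integration reusable.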

3.4 Why MCP matters for enterprises

It dramatically reduces the cost of AI integration.

In the pre-MCP world, connecting an AI to five business systems might require 3–6 months of engineering work, producing fragile, custom code that breaks whenever either the AI or the tool updates. Under MCP, the same integration can often be done in days and maintained with a fraction of the ongoing engineering effort.

It creates a reusable, composable AI infrastructure.

When your tools are MCP-compatible, any AI model can use them. When you switch from Claude to GPT-5 — or add a new specialised model to your stack — you do not need to rebuild your integrations. Your MCP servers work with any MCP client. This portability is enormously valuable as the AI landscape evolves.

It enables the context engineering described in Section 2.

MCP is the technical mechanism that makes several of the context components — particularly tools, retrieved information (RAG), and real-time data access — practically achievable at enterprise scale. Without a standardised integration protocol, context engineering at scale requires enormous custom engineering. With MCP, it becomes an architectural decision rather than an engineering marathon.

It is already the industry standard.

This is not a technology that might become standard. It already is. Anthropic built it. OpenAI adopted it. Google built it into the Gemini API. Microsoft integrated it into Azure AI Foundry. The MCP ecosystem now includes thousands of pre-built servers for common enterprise tools — Slack, GitHub, Google Drive, Salesforce, Jira, Notion, PostgreSQL, and hundreds more.

If you are building AI-powered products or workflows in 2026 and you are not using MCP-compatible tools, you are building on proprietary integrations that are likely to need substantial rework as the ecosystem matures.

3.5 What MCP means for non-technical leaders

You do not need to understand the implementation details of MCP. But you do need to understand one thing:

When evaluating any AI tool, platform, or vendor, ask whether it supports MCP.

MCP compatibility is the 2026 equivalent of asking whether software supports standard file formats, open APIs, or cloud deployment. It is a basic interoperability requirement that protects your investment against lock-in and ensures your AI infrastructure can evolve as the landscape changes.

An AI tool that does not support MCP is a tool that requires custom integration work for every connection you want to make — and custom integration work is expensive, fragile, and vendor-dependent. That is a hidden cost that compounds over time.

4. AI Agents vs AI Assistants: The Difference That Determines Your Strategy

4.1 Why the distinction matters

“Agentic AI” has become one of the most overused phrases in enterprise technology. Every vendor — from the largest AI labs to the smallest SaaS startups — is claiming to offer AI agents. The word has been applied to everything from a basic chatbot with a memory feature to genuinely autonomous multi-step systems.

This ambiguity is not just marketing noise. It creates real strategic risk. Enterprises that invest in “agentic AI” without understanding what they are actually buying frequently discover that they have purchased a sophisticated AI assistant — not an AI agent — and are confused when it does not behave autonomously.

The distinction is fundamental, and it comes down to one question:

Who decides what to do next?

4.2 AI assistants: the human decides

An AI assistant is a system that responds to human requests. The human decides what task needs doing, initiates the request, reviews the output, and decides on the next action. The AI executes individual tasks with high quality — but it does not initiate, plan, or sequence actions autonomously.

Characteristics of AI assistants:

- Reactive: they respond to inputs, they do not initiate.

- Single-step: they complete one task per interaction.

- Human-supervised: every output requires human review before action.

- Stateless (usually): they do not remember previous sessions or maintain ongoing goals.

- Examples: ChatGPT in standard mode, Copilot in Office, Siri, standard customer service chatbots.

AI assistants are powerful and genuinely valuable. They augment human capability by making individual tasks faster, cheaper, and higher quality. But they do not operate independently. Remove the human from the loop, and they stop.

4.3 AI agents: the AI decides

An AI agent is a system that pursues goals autonomously over multiple steps. The human defines the objective. The AI plans the approach, sequences the actions, makes decisions at each step, uses tools and external resources as needed, and continues working until the objective is achieved — without requiring human approval at every step.

Characteristics of AI agents:

- Proactive: they initiate actions in pursuit of a goal.

- Multi-step: they execute sequences of actions, adapting based on outcomes.

- Autonomous: they make decisions without requiring human sign-off at each step.

- Stateful: they maintain memory of previous actions and current progress toward the goal.

- Tool-using: they call external APIs, query databases, run code, and interact with other systems.

- Examples: Claude Code autonomously modernising a codebase, an AI agent that monitors your inbox, drafts replies, schedules meetings, and follows up on unanswered emails — all without being triggered by a human each time.

The defining feature of an AI agent is autonomy over sequences. It is not just doing what you ask — it is deciding what to do next in pursuit of the goal you have set.

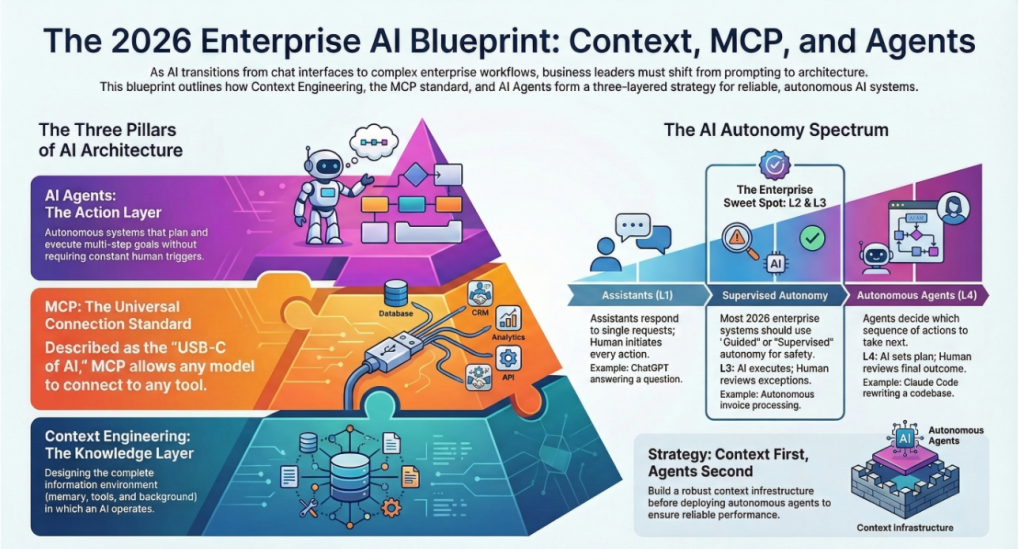

4.4 The spectrum: it is not binary

In practice, AI systems exist on a spectrum between pure assistant and fully autonomous agent. Most enterprise AI systems in production in 2026 sit somewhere in the middle — more autonomous than a simple chatbot, but with human approval gates at key decision points.

A useful framework for thinking about where on the spectrum a system sits:

| Level | Description | Human Role | Example |
|---|---|---|---|
| L1 (Assistant) | Responds to single requests | Initiates every action | ChatGPT answering a question |
| L2 (Guided Agent) | Executes multi-step plans with human approval at each step | Reviews and approves each action | AI drafting 10 emails for human review before sending |
| L3 (Supervised Agent) | Executes autonomously but flags exceptions | Reviews exceptions only | AI processing invoices autonomously, escalating ambiguous cases |
| L4 (Autonomous Agent) | Executes fully autonomously toward a defined goal | Sets goal; reviews final outcome | Claude Code autonomously rewriting and testing a codebase |
| L5 (Self-Directed Agent) | Sets and pursues its own objectives | Sets boundaries | Not yet deployed commercially at scale |

Most enterprises should be targeting L2 to L3 for production systems in 2026. L4 is appropriate for well-defined, lower-risk tasks with reliable feedback loops (coding, data processing, research). L5 is not commercially available in reliable form and carries risks that are not yet well-understood.
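One way to make the levels concrete is to ask, for any single action, whether a human has to sign off before it runs. The sketch below encodes the "Human Role" column for L1 to L4 as a small Python function. The names `Autonomy` and `needs_human_approval` are hypothetical, and L5 is left out because the framework describes it as not yet deployed.

```python
from enum import IntEnum


class Autonomy(IntEnum):
    ASSISTANT = 1   # L1
    GUIDED = 2      # L2
    SUPERVISED = 3  # L3
    AUTONOMOUS = 4  # L4


def needs_human_approval(level: Autonomy, is_exception: bool) -> bool:
    """Return True when a human must sign off before the action runs."""
    if level <= Autonomy.GUIDED:
        return True          # L1 and L2: a human initiates or approves every action
    if level == Autonomy.SUPERVISED:
        return is_exception  # L3: only flagged exceptions go to a human
    return False             # L4: a human reviews the final outcome, not each step


# An ambiguous invoice handled by a supervised (L3) agent is escalated.
escalate = needs_human_approval(Autonomy.SUPERVISED, is_exception=True)
```

A vendor demo can be tested against the same question: if nothing ever pauses for approval, the system is being presented at L4, whatever the brochure says.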

4.5 What makes a genuine AI agent work

Three technical capabilities distinguish a genuine AI agent from a sophisticated AI assistant:

Planning

The ability to decompose a complex goal into a sequence of steps, determine the order in which they should be executed, and adapt the plan when steps fail or produce unexpected results. Planning is what allows an agent to handle “modernise our entire customer onboarding codebase” rather than just “refactor this function.”

Memory and State Management

The ability to remember what has been done, what has been decided, what has failed, and what the current state of the goal is — across multiple steps and sessions. Without reliable memory and state management, an AI agent loses its place and either repeats work or misses critical context. This is where context engineering and MCP become directly relevant to agent capability.

Tool Use

The ability to take actions in external systems — not just generate text about what should be done. A genuine AI agent does not just suggest you query the database; it queries the database. It does not just recommend a meeting; it books the meeting. Tool use, powered by MCP, is what gives agents the ability to create real-world effects.
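The three capabilities fit together as a simple loop, sketched below in Python for readers who want to see the shape of it. This is a toy under loud assumptions: the plan is hard-coded where a real agent would ask a model to produce and revise it, and the tools `query_database` and `send_reminders` are hypothetical stand-ins that touch no real system.

```python
from typing import Callable


def query_database(state: dict) -> str:
    """Hypothetical tool: pretend to fetch overdue invoices."""
    state["overdue_count"] = 3
    return "fetched 3 overdue invoices"


def send_reminders(state: dict) -> str:
    """Hypothetical tool: pretend to send one reminder per overdue invoice."""
    return f"sent {state['overdue_count']} reminders"


TOOLS: dict[str, Callable[[dict], str]] = {
    "query_database": query_database,
    "send_reminders": send_reminders,
}


def plan(goal: str) -> list[str]:
    """Planning: break the goal into ordered steps (hard-coded in this sketch)."""
    return ["query_database", "send_reminders"]


def run_agent(goal: str, max_steps: int = 10) -> dict:
    """Work through the plan step by step, recording progress in `state`."""
    state: dict = {"goal": goal, "completed": [], "log": []}
    for step in plan(goal)[:max_steps]:
        result = TOOLS[step](state)      # tool use: act, rather than suggest
        state["completed"].append(step)  # state: remember what has been done
        state["log"].append(result)
    return state


final_state = run_agent("chase overdue invoices")
```

An assistant would stop after describing these steps. The agent is the part that runs the loop without being asked again.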

4.6 The enterprise implications: where agents create value and where they create risk

AI agents create the most value in workflows that are:

- High volume: The agent’s autonomy only pays off if there are enough tasks to justify the overhead of agent infrastructure.

- Well-defined: The goal must be clear enough that the agent can plan toward it reliably. Ambiguous goals produce unreliable agent behaviour.

- Reversible: The agent’s actions should be undoable if something goes wrong. Never deploy a fully autonomous agent for actions with irreversible consequences without robust human oversight.

- Feedback-rich: The agent needs reliable signals about whether its actions are succeeding or failing. Systems with clear success criteria outperform systems where “good” is subjective.

AI agents create the most risk in workflows that are:

- Consequential and irreversible: Financial transactions, legal commitments, communications with customers or regulators.

- Context-dependent in ways the agent cannot access: Decisions that require organisational politics, relationship history, or implicit knowledge not captured in any system.

- Novel or unprecedented: Agents perform reliably on tasks similar to their training data. Novel situations trigger unpredictable behaviour.

The most common enterprise mistake with agentic AI in 2026 is deploying L4 autonomy in workflows that require L2 or L3 supervision — and then being surprised when the agent makes decisions that a human would have caught.

5. How the Three Concepts Connect

Context engineering, MCP, and AI agents are not three separate topics. They are three layers of the same architecture.

Context engineering is the discipline that determines what information the AI has access to and how it is structured. It is the “what does the AI know?” layer.

MCP is the technical standard that enables the AI to reach external systems in real time, supporting the tools and retrieved-information (RAG) components of context engineering. It is the “how does the AI get the information?” layer.

AI agents are the systems that use context engineering principles and MCP-based tool access to pursue goals autonomously across multiple steps. They are the “what does the AI do with the information?” layer.

Put together, each layer depends on the other two:

An organisation that invests in context engineering without MCP will build agents that are well-informed but isolated — unable to access real-time data or take real-world actions.

An organisation that implements MCP without context engineering will build agents that are connected but inconsistent — with access to the right tools but without the structured information environment needed to use them reliably.

An organisation that deploys agents without investing in either will build autonomous systems that are simultaneously uninformed and poorly constrained — the source of most of the “AI agent horror stories” appearing in Reddit threads and LinkedIn posts in 2026.

6. A Practical Framework for Business Leaders

6.1 Start with context, not with agents

The most common mistake in enterprise AI deployment is starting with the agent — buying or building an autonomous AI system — before investing in the context infrastructure that makes it reliable.

Build your context engineering foundation first:

- Document your processes, knowledge, and decision-making frameworks in forms that AI can use.

- Structure your data so it can be retrieved accurately and quickly via RAG.

- Define clear instruction sets for each AI-assisted workflow.

- Build the memory systems that allow AI to maintain continuity across interactions.

Once your context infrastructure is solid, you will find that even simple AI assistants perform dramatically better. Then, when you add agentic capabilities, they have a reliable information environment to operate in.

6.2 Audit your tool ecosystem for MCP compatibility

Before building new AI integrations, audit your existing tool stack:

- Which of your core business tools have MCP-compatible servers available?

- Which tools require custom integration code?

- Which vendors are on the MCP roadmap but not yet compliant?

Prioritise MCP-compatible tools for new AI integration work. For tools that do not yet support MCP, advocate with vendors for MCP support in their product roadmaps. The ecosystem is growing rapidly — most major enterprise software vendors have MCP compatibility on their 2026 development roadmaps.

6.3 Right-size your agent autonomy

Match your agent autonomy level to your workflow risk profile:

- L2 (Guided) for any workflow involving customer communication, financial transactions, or regulatory compliance.

- L3 (Supervised) for high-volume processing workflows with clear success criteria and human exception handling.

- L4 (Autonomous) only for well-defined, reversible, feedback-rich tasks where you have run sufficient supervised testing to trust the agent’s judgment.

Document your autonomy decisions and review them regularly. As agents accumulate performance data, it becomes safer to increase autonomy on proven workflows — but that decision should be evidence-based, not based on vendor promises.
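The matching rules above are simple enough to write down as a checklist in code, which also makes the autonomy decision easy to document and review. The sketch below is one possible encoding, not a definitive policy: the `Workflow` fields and the `recommended_level` function are hypothetical names, and falling back to L2 when no rule matches is an assumption chosen for caution.

```python
from dataclasses import dataclass


@dataclass
class Workflow:
    """Risk-relevant facts about a workflow (illustrative field names)."""
    customer_communication: bool = False
    financial_transactions: bool = False
    regulatory_compliance: bool = False
    high_volume: bool = False
    clear_success_criteria: bool = False
    well_defined: bool = False
    reversible: bool = False
    feedback_rich: bool = False
    supervised_testing_done: bool = False


def recommended_level(w: Workflow) -> str:
    """Map a workflow's risk profile to an autonomy level."""
    if w.customer_communication or w.financial_transactions or w.regulatory_compliance:
        return "L2"
    if w.well_defined and w.reversible and w.feedback_rich and w.supervised_testing_done:
        return "L4"
    if w.high_volume and w.clear_success_criteria:
        return "L3"
    return "L2"  # assumption: default to the most conservative level


invoice_processing = Workflow(high_volume=True, clear_success_criteria=True)
level = recommended_level(invoice_processing)
```

Checking the high-risk conditions first means a workflow that touches customers, money, or regulators stays at L2 even if it also looks well-defined and reversible.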

6.4 Measure context quality, not just output quality

Most organisations measure AI system performance by output quality: is the answer correct? Is the email well-written? Is the code functional?

This is necessary but insufficient. When an AI system produces poor outputs, organisations typically respond by trying different prompts — the prompt engineering instinct. More often, poor outputs are a symptom of poor context:

- The AI did not have access to the relevant background information.

- The retrieved information was outdated or incomplete.

- The instructions were ambiguous or contradictory.

- The conversation state was not properly maintained across a multi-step workflow.

Add context quality metrics to your AI performance dashboards:

- Retrieval precision: Is the AI accessing the right information for each task?

- Instruction adherence: Is the AI consistently following its defined constraints?

- Memory accuracy: Is the AI correctly incorporating relevant context from previous interactions?

- State consistency: Is the AI maintaining accurate awareness of its position in multi-step workflows?

Improving context quality typically produces larger performance gains than further prompt optimisation.
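Two of the metrics above reduce to simple ratios that a technical team could compute from reviewed samples. The sketch below is illustrative only. It assumes the standard information-retrieval definition of precision (the share of retrieved items that a reviewer judged relevant), and it assumes instruction adherence is scored as a pass rate over reviewed interactions. The function names and document IDs are hypothetical.

```python
from typing import Iterable


def retrieval_precision(retrieved_ids: Iterable[str], relevant_ids: Iterable[str]) -> float:
    """Share of retrieved items that a reviewer marked as relevant."""
    retrieved = set(retrieved_ids)
    if not retrieved:
        return 0.0  # nothing retrieved, so nothing useful was supplied
    return len(retrieved & set(relevant_ids)) / len(retrieved)


def pass_rate(checks: Iterable[bool]) -> float:
    """Share of reviewed interactions that passed a yes/no check,
    for example 'did the AI stay within its defined constraints?'."""
    results = list(checks)
    if not results:
        return 0.0
    return sum(results) / len(results)


if __name__ == "__main__":
    # The AI pulled four documents; a reviewer judged two of them relevant.
    precision = retrieval_precision(
        ["doc1", "doc2", "doc3", "doc4"],
        {"doc1", "doc3", "doc9"},
    )
    # Three of four sampled interactions followed the instructions.
    adherence = pass_rate([True, True, False, True])
    print(f"retrieval precision: {precision:.2f}")
    print(f"instruction adherence: {adherence:.2f}")
```

Memory accuracy and state consistency can be tracked with the same pass-rate approach, provided someone defines what counts as a pass for each workflow.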

7. FAQ

What is the difference between context engineering and prompt engineering?

Prompt engineering focuses on crafting the wording of individual instructions to get better outputs from a single AI interaction. Context engineering is the broader discipline of designing and managing the entire information environment in which an AI operates — including instructions, background knowledge, memory, tool access, retrieved information, conversation history, and state management. As AI models have become more capable and are increasingly used for complex, multi-step workflows, context engineering has become far more important than prompt crafting.

Does my organisation need to understand MCP to use AI tools?

Not technically — most AI tools handle MCP implementation behind the scenes. But business and technology leaders need to understand MCP as a procurement and strategy concept. When evaluating AI tools, asking whether they support MCP tells you whether the tool will be portable, interoperable, and maintainable as your AI stack evolves. Tools that require custom integration code for every connection are more expensive to build, more fragile to maintain, and harder to replace when better alternatives emerge.

What is the minimum viable MCP setup for a small or mid-sized business?

Start with two or three high-value integrations. Identify the external systems your AI assistant most frequently needs information from, typically your CRM, your document storage, and your project management tool. Check whether those systems have publicly available MCP servers. Many do: the Model Context Protocol project maintains a public repository of reference and community-built servers, and a growing number of vendors publish their own. Connect those servers to your AI host environment. This gives you a working MCP foundation that you can expand systematically without building custom integrations.

How do I know if a vendor is selling me an AI assistant or a genuine AI agent?

Ask four specific questions. First: can the system initiate actions without a human trigger? Second: can it execute a sequence of more than three steps autonomously, adapting based on intermediate results? Third: does it maintain memory and state across sessions without human re-briefing? Fourth: can it take real-world actions — sending emails, modifying records, executing transactions — not just generate text recommendations? If the answer to all four is yes, you are looking at a genuine agent. If the answer to any is no, you are looking at a sophisticated assistant — which may still be valuable, but requires different deployment expectations and governance.
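The four-question test is strict: a single "no" is enough to classify the product as an assistant. For readers who find it easier to see the rule written out, here is a minimal Python sketch. The `VendorAnswers` fields and the `classify` function are hypothetical names chosen for this example.

```python
from dataclasses import astuple, dataclass


@dataclass
class VendorAnswers:
    """Yes/no answers to the four questions, in the order asked."""
    initiates_without_human_trigger: bool
    runs_more_than_three_steps_adaptively: bool
    keeps_memory_and_state_across_sessions: bool
    takes_real_world_actions: bool


def classify(answers: VendorAnswers) -> str:
    # All four answers must be yes for the product to count as an agent.
    return "agent" if all(astuple(answers)) else "assistant"


if __name__ == "__main__":
    demo = VendorAnswers(
        initiates_without_human_trigger=False,
        runs_more_than_three_steps_adaptively=True,
        keeps_memory_and_state_across_sessions=True,
        takes_real_world_actions=True,
    )
    print(classify(demo))
```

In this example the product does everything except start work on its own, so it is classified as an assistant and should be governed as one.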

Is it safe to deploy autonomous AI agents in enterprise environments?

Autonomous AI agents can be deployed safely in enterprise environments, but only when the deployment follows appropriate governance. This means starting at a lower autonomy level (L2 Guided or L3 Supervised), establishing clear human escalation paths for edge cases, running sufficient testing before expanding to full autonomy, restricting autonomous action to reversible or low-consequence tasks initially, and building monitoring systems that detect when agent behaviour deviates from expected patterns. The agents causing enterprise problems in 2026 are most often ones deployed at higher autonomy levels than their context infrastructure and testing warrant.

What should I read or study to go deeper on context engineering?

The r/ContextEngineering subreddit is an active community of practitioners sharing real-world patterns and case studies. Anthropic’s documentation on building with Claude includes detailed context engineering guidance. The MCP specification at modelcontextprotocol.io provides the technical reference for MCP implementation. For a business-oriented framework, the Kersai research library contains additional articles on enterprise AI deployment that build on the concepts introduced here.

This article was researched and written by the Kersai Research Team. Kersai is a global AI consultancy firm dedicated to helping enterprises confidently navigate the rapidly evolving artificial intelligence landscape — from cutting-edge strategic insights to practical, large-scale AI implementation. To learn more, visit kersai.com.