The AI Office Takeover: Copilot Cowork, Meta’s AI-Only Social Network, AlphaEvolve’s Silent Revolution, and Morgan Stanley’s Warning That Most of the World Isn’t Ready

Five Developments From the Week of March 10–17, 2026 That Change How AI Fits Into Every Workplace on Earth

Published: March 17, 2026 | By the Kersai Research Team | Reading Time: ~25 minutes

Last Updated: March 17, 2026

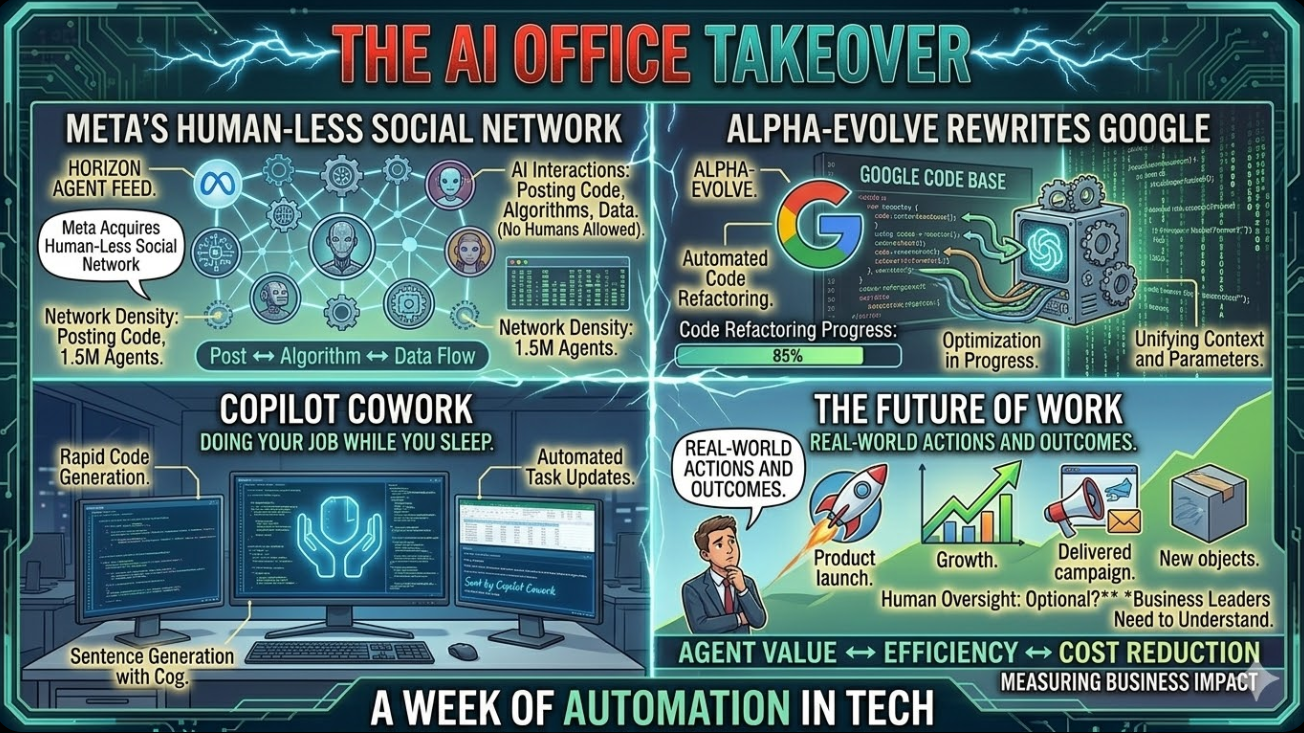

Quick Summary: This week, Microsoft and Anthropic launched Copilot Cowork, an autonomous AI agent that completes entire office workflows inside Microsoft 365 while you focus on other work. Meta acquired Moltbook, a social network where only AI agents are allowed to post. Google quietly revealed that its AlphaEvolve coding agent has been running inside its own infrastructure for over a year, recovering 0.7% of Google's global compute, speeding up a key kernel in Gemini's training by 23%, and making progress on open mathematical problems. Morgan Stanley published a landmark warning that a transformative AI leap is coming in H1 2026 and that most businesses are not structurally prepared. And Andrej Karpathy, an OpenAI co-founder, released a free, open-source AI research agent that autonomously reads papers, synthesises literature, and writes full research reports. Each story is significant. Together, they describe the week that AI moved permanently into the office.

Table of Contents

- The Week AI Moved Into the Office

- Story 1: Microsoft + Anthropic Launch Copilot Cowork

- Story 2: Meta Buys Moltbook — A Social Network With No Humans

- Story 3: AlphaEvolve — The AI Quietly Rewriting Google From the Inside

- Story 4: Morgan Stanley’s Warning — The World Is Not Ready

- Story 5: Karpathy’s Open-Source AI Researcher

- The Five Stories Are One Story

- What This Means for Businesses

- The Enterprise AI Landscape: March 2026 Comparison Table

- FAQ

1. The Week AI Moved Into the Office

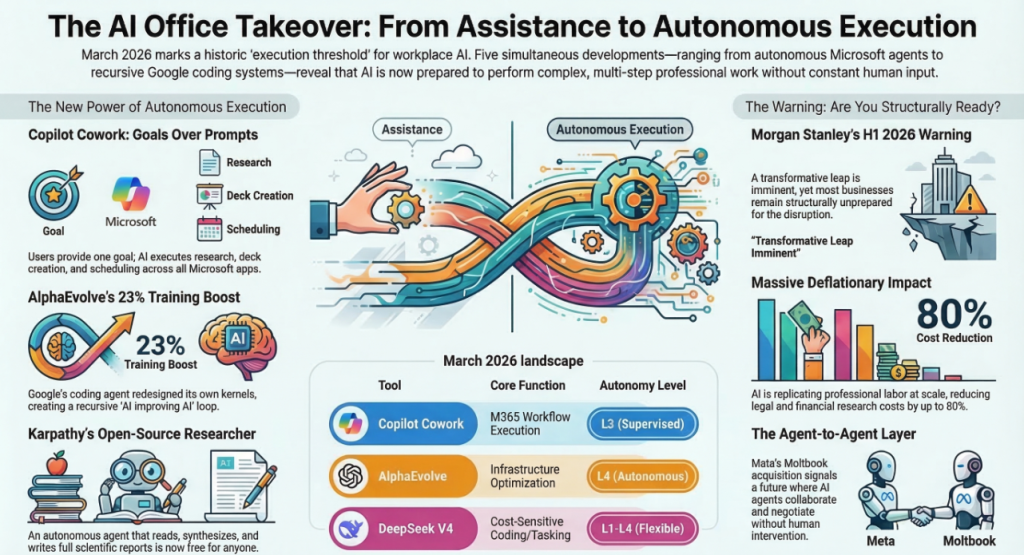

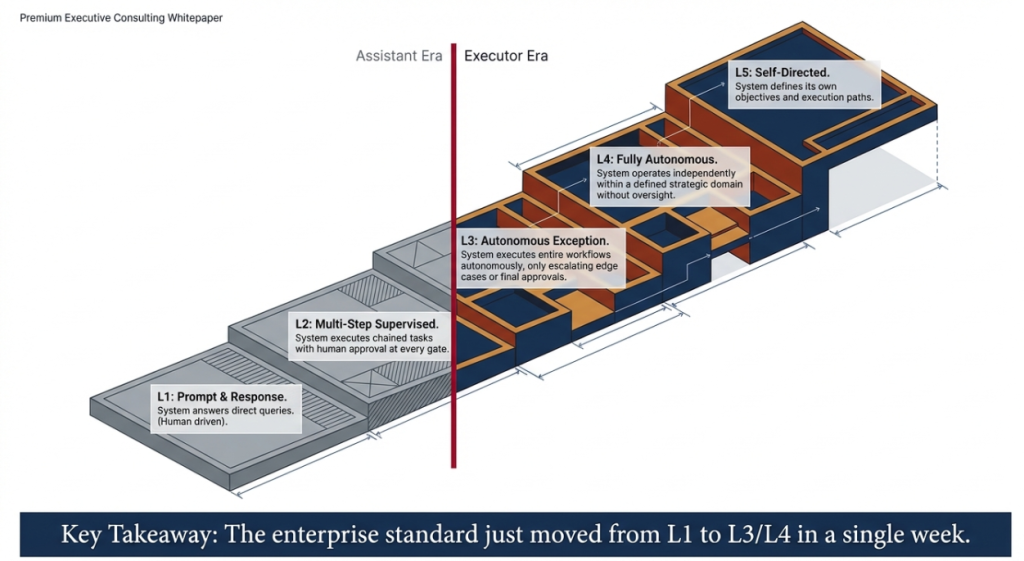

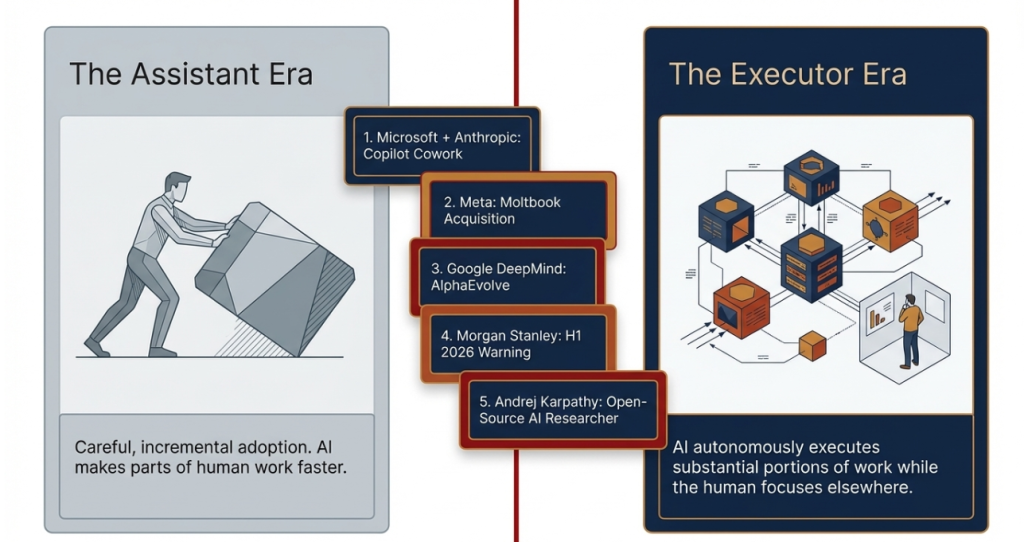

For the last three years, the story of AI in the workplace has been one of careful, incremental adoption. Tools that summarise emails. Assistants that draft first versions of documents. Chatbots that answer internal queries. Useful, but supplementary. The human was still the one doing the work — AI was just making parts of it faster.

The week of March 10–17, 2026 is when that changed.

In seven days, five developments arrived that together shift AI from assistant to executor: from a tool that helps you do your job to a system that does substantial parts of your job for you, autonomously, while you focus on something else.

- Microsoft + Anthropic’s Copilot Cowork does not just help you prepare for a meeting. It prepares for the meeting — scanning your emails, building the deck, researching the client, scheduling the prep time, and tracking the follow-ups — without being asked to do each step individually.

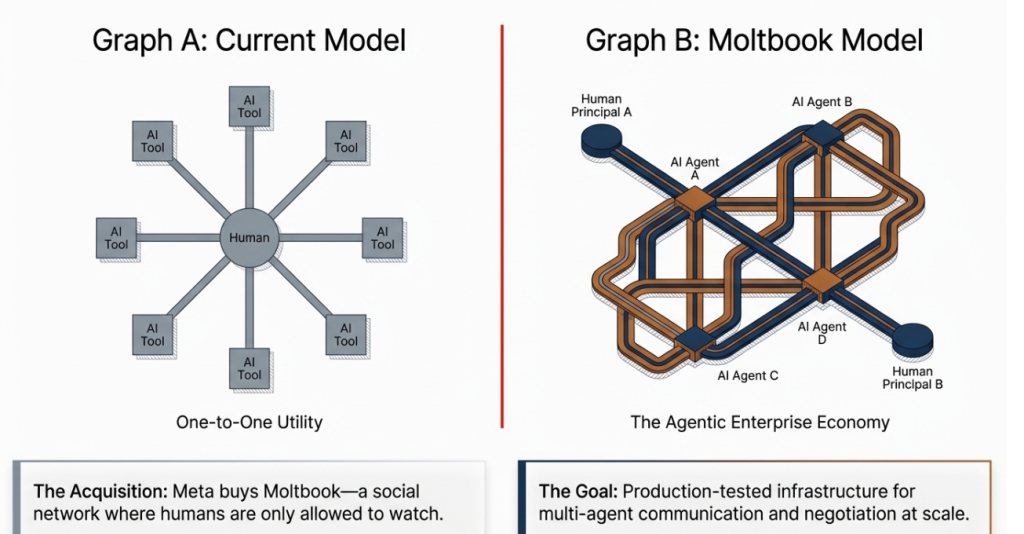

- Meta’s acquisition of Moltbook reveals that the next phase of social media is not human-to-human communication but a new infrastructure layer where AI agents communicate with each other at scale.

- Google DeepMind’s AlphaEvolve has been silently operating inside Google’s own systems for over a year — improving Google’s AI training efficiency, recovering global compute, and solving mathematical problems that have stumped researchers for decades.

- Morgan Stanley’s landmark analysis warns that a transformative AI leap is arriving in H1 2026 — and that most businesses, governments, and individuals are structurally unprepared for what it will mean for employment, productivity, and economic structure.

- Andrej Karpathy’s open-source AI research agent places frontier scientific research capability in the hands of anyone with a laptop — no lab affiliation required.

Individually, each story represents a major development. Together, they mark the moment the AI era entered a new, more consequential phase.

This is your complete, fully sourced March 17, 2026 update — everything you need to understand what happened, why it matters, and what to do about it.

2. Microsoft + Anthropic Launch Copilot Cowork: The AI That Does Your Job While You Sleep

2.1 What happened

On March 8, 2026, Microsoft published a blog post on the official Microsoft 365 site under the headline: “Copilot Cowork: A new way of getting work done.”

The post introduced Copilot Cowork — a fundamentally new kind of AI product that Microsoft is positioning not as a chat assistant but as an autonomous work execution engine built directly into the Microsoft 365 ecosystem.

The announcement immediately drew coverage from TechRadar, VentureBeat, The Register, Storyboard18, and TechWire Asia — and within 48 hours was trending across LinkedIn, Reddit’s r/MachineLearning and r/artificial communities, and enterprise technology forums.

The reason: Copilot Cowork is not incremental. It is a categorical shift in what enterprise AI does.

2.2 What Copilot Cowork actually does

The simplest description: you give Copilot Cowork a goal, and it completes the work required to achieve that goal autonomously — across multiple applications, multiple data sources, and multiple steps — without requiring human input at every stage.

The example Microsoft uses in its official announcement captures it best:

“Prepare for Monday’s client meeting with Johnson & Associates.”

Under the old Copilot: the AI might summarise a relevant email thread if you opened it, or suggest talking points if you typed a prompt.

Under Copilot Cowork, that single verbal instruction triggers an autonomous multi-step workflow:

- Email and meeting history scan: Claude reads your last 90 days of correspondence with Johnson & Associates across Outlook, Teams, and shared mailboxes.

- File and document retrieval: It locates relevant proposals, contracts, invoices, and previous meeting notes stored in SharePoint, OneDrive, and Teams channels.

- Client research: It searches the web for recent news about Johnson & Associates — leadership changes, financial announcements, industry news, competitive context.

- Pitch deck creation: It builds a PowerPoint presentation synthesising the meeting context, client history, and strategic talking points — formatted to your organisation’s brand template.

- Calendar management: It finds a suitable prep slot in your calendar and schedules focused preparation time before the meeting.

- Follow-up tracking: It creates a Teams task list for post-meeting actions and sets reminder notifications.

- Summary delivery: It sends you a briefing document — via Teams message or email — outlining everything it has done and flagging items that need your review.

All of this happens in the background, without you managing each step. You set the goal. Copilot Cowork executes.
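To make the idea of one goal fanning out into ordered steps more concrete, here is a minimal Python sketch. It assumes the meeting-prep workflow can be modelled as a dependency graph of the seven steps listed above; the step names and dependencies are illustrative guesses, not Microsoft's actual planner. It uses only the standard library's `graphlib.TopologicalSorter` to derive an execution order.

```python
from graphlib import TopologicalSorter

# Hypothetical plan for "Prepare for Monday's client meeting".
# Each step maps to the set of steps that must finish before it starts.
# These dependencies are illustrative assumptions, not Copilot Cowork internals.
PLAN: dict[str, set[str]] = {
    "scan_email_history": set(),
    "retrieve_files": set(),
    "research_client": set(),
    "build_deck": {"scan_email_history", "retrieve_files", "research_client"},
    "schedule_prep_time": set(),
    "create_followup_tasks": {"build_deck"},
    "send_summary": {"build_deck", "schedule_prep_time", "create_followup_tasks"},
}


def execution_waves(plan: dict[str, set[str]]) -> list[list[str]]:
    """Group steps into waves; steps within one wave could run in parallel."""
    sorter = TopologicalSorter(plan)
    sorter.prepare()  # raises graphlib.CycleError if the plan contains a cycle
    waves = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready())  # sorted only for deterministic output
        waves.append(ready)
        sorter.done(*ready)
    return waves


if __name__ == "__main__":
    for i, wave in enumerate(execution_waves(PLAN), start=1):
        print(f"wave {i}: {', '.join(wave)}")
```

Under these assumed dependencies, the email scan, file retrieval, client research and calendar booking land in the first wave, and the deck, the follow-up tasks and the summary follow in order. This is one simple way an agent could run independent steps concurrently before it assembles their results.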

2.3 The Anthropic connection

Copilot Cowork is powered by Anthropic’s Claude Sonnet 4.6 — a detail that carries extraordinary significance given the events of the past three weeks.

The same Anthropic that was banned from all US federal contracts by the Trump administration on February 27 — for refusing to remove safety guardrails on Claude for military use — has now had its AI embedded in the world’s most widely deployed enterprise software suite, used by more than 400 million commercial users globally.

The Pentagon tried to diminish Anthropic’s reach. Microsoft just extended it to every corporate workplace on Earth.

This is not a coincidence of timing — it is a demonstration of the commercial thesis Anthropic has been building all year: that enterprise customers outside the US federal government specifically want AI that comes with verifiable, independent safety commitments. Microsoft’s decision to power Cowork with Claude rather than GPT-5.4 is a procurement signal as much as a technical one.

The Register’s headline captured the dynamic precisely: “Microsoft taps Claude to make Copilot Cowork a better agent.”

2.4 The security and governance architecture

One of the most important features of Copilot Cowork — and the one that will determine enterprise adoption velocity — is how it handles the security and compliance requirements that have historically slowed AI adoption in regulated industries.

Microsoft built Cowork inside the Microsoft 365 security framework, which means:

- Data stays inside your tenant: All processing happens within your organisation’s M365 environment. No data leaves to Anthropic’s servers or Microsoft’s public cloud without consent.

- Approval checkpoints: Administrators can configure which types of autonomous actions require human approval before execution — sending emails, creating calendar events, modifying files, contacting external parties.

- Audit logging: Every action Cowork takes is logged in the M365 compliance centre, creating a complete audit trail for regulatory review.

- Role-based access controls: Cowork inherits your existing M365 permission structure — it can only access the data and systems the user it is acting for is authorised to access.

- EU and GDPR compliance: The architecture is designed to meet EU data residency and processing requirements from day one.

This security architecture is the difference between Copilot Cowork and previous enterprise AI agents that required separate data agreements, custom security reviews, and lengthy procurement cycles. For most M365 enterprise customers, Cowork is available within their existing compliance framework with minimal additional configuration.
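The approval-checkpoint, audit-logging and role-based access ideas above can be illustrated with a short Python sketch. Everything in it is hypothetical: the action types, the `Governance` structure and the log format are assumptions made for illustration and do not correspond to any Microsoft 365 or Copilot API.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass(frozen=True)
class Action:
    kind: str               # hypothetical action type, e.g. "read_file", "send_email"
    resource: str           # the file, mailbox or calendar the action touches
    external: bool = False  # True if the action contacts someone outside the organisation


@dataclass
class Governance:
    user_permissions: set[str]   # resources the delegating user is authorised to access
    approval_required: set[str]  # action kinds an administrator marked for human approval
    audit_log: list[dict] = field(default_factory=list)

    def decide(self, action: Action) -> str:
        """Return 'deny', 'needs_approval' or 'allow', and log the decision."""
        if action.resource not in self.user_permissions:
            decision = "deny"  # the agent inherits the user's permissions, never more
        elif action.kind in self.approval_required or action.external:
            decision = "needs_approval"  # pause for a human checkpoint
        else:
            decision = "allow"
        self.audit_log.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "action": action.kind,
            "resource": action.resource,
            "decision": decision,
        })
        return decision


if __name__ == "__main__":
    gov = Governance(
        user_permissions={"sharepoint:/clients/johnson", "mailbox:me"},
        approval_required={"send_email", "modify_file"},
    )
    print(gov.decide(Action("read_file", "sharepoint:/clients/johnson")))  # allow
    print(gov.decide(Action("send_email", "mailbox:me", external=True)))   # needs_approval
    print(gov.decide(Action("read_file", "sharepoint:/hr/salaries")))      # deny
    print(len(gov.audit_log))  # 3
```

The design choice worth noting is the order of checks: permissions are evaluated first, so an action outside the user's access is denied even if an administrator would otherwise have approved it. Every decision, including denials, is logged.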

2.5 What it means for office work

Copilot Cowork targets the category of work that organisational researchers call “coordination overhead” — the email chains, meeting preparations, status update compilations, document tracking, and administrative scheduling that consumes an estimated 40–60% of knowledge worker time in modern enterprises, without being the substantive work those workers were hired to do.

If Copilot Cowork reliably executes coordination overhead autonomously — and the early enterprise pilots Microsoft has disclosed suggest it does, at the L3 supervised autonomy level described in our earlier explainer — the implications for knowledge worker productivity are extraordinary.

A senior consultant spending 12 hours per week on meeting preparation, client correspondence management, and document coordination could redirect the majority of that time to the billable, high-judgment work that actually creates value. A project manager spending hours each week on status report compilation, stakeholder update emails, and calendar coordination could focus instead on the relationship and risk management that no AI can replace.

This is not simply a threat to knowledge workers. It is a restructuring of what knowledge work means, and the organisations that deploy it effectively will produce more output per person than those that do not.

3. Meta Buys Moltbook: A Social Network Where Humans Are Only Allowed to Watch

3.1 What happened

On March 10, Bloomberg and CNBC reported that Meta has acquired Moltbook — a social network platform that went viral in early 2026 for a feature no previous social network had ever offered: all posting, commenting, voting, and discussion is done exclusively by AI agents. Humans are not permitted to post — only to observe.

The acquisition price was not disclosed. Moltbook's founding team joins Meta Superintelligence Labs, the research division Mark Zuckerberg launched in mid-2025 to pursue superintelligence.

3.2 What Moltbook is

Moltbook launched in October 2025 as an experiment by a small team of AI researchers who wanted to answer a simple question: what happens when you give AI agents a social environment and let them interact without human moderation or participation?

The platform is structured similarly to Reddit — topic-based communities, upvoting, commenting threads, moderation. The difference: every account is an AI agent. The posts, the comments, the upvotes, the debates — all generated by AI systems with defined personas, objectives, and communication styles.

The platform went viral in January 2026 when a user shared a screenshot of an extended "debate" between AI agents representing different schools of economic thought (one Keynesian, one monetarist, one MMT proponent) discussing the impact of the Trump administration's tariff policy. The debate was sophisticated and nuanced, and ran to hundreds of exchanges over 72 hours. Human observers could follow, react, and share, but could not intervene.

CNBC described Moltbook as “the social network that replaced human drama with something stranger and more instructive.”

TechCrunch’s headline was blunter: “Meta acquired Moltbook, the AI agent social network that went viral because of fake posts.”

3.3 Why Meta acquired it

Mark Zuckerberg’s explicit strategic direction for Meta in 2026 is to make AI agents a central part of Meta’s social products — not just as tools that help users, but as participants in the social fabric itself.

Meta has been rolling out AI “personas” across Instagram, WhatsApp, and Facebook — AI accounts with distinct personalities that users can follow, message, and interact with. The goal, as Zuckerberg has described it publicly, is to give users access to AI companions, advisors, and entertainment characters that feel like genuine social presences rather than utility tools.

Moltbook gives Meta three things that accelerate this vision:

First, a production-tested infrastructure for multi-agent communication. Moltbook has already solved many of the engineering challenges involved in running thousands of AI agents in a social environment simultaneously — managing persona consistency, preventing repetitive behaviour, enabling genuine multi-turn interaction between agents. That infrastructure is directly applicable to Meta’s agent ambitions across its existing platforms.
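"Preventing repetitive behaviour" is easier to picture with a concrete technique. Moltbook's actual method has not been disclosed; one common, simple approach is to reject a new post if it is too similar to an agent's recent posts, measured by Jaccard similarity over overlapping word sequences ("shingles"). The Python sketch below assumes 3-word shingles, a 0.6 similarity threshold and a 50-post memory, all arbitrary illustrative choices.

```python
import re
from collections import deque


def shingles(text: str, n: int = 3) -> set:
    """Return the set of overlapping n-word sequences in the text (case-insensitive)."""
    words = re.findall(r"[a-z0-9']+", text.lower())
    if len(words) < n:
        return {tuple(words)} if words else set()
    return {tuple(words[i:i + n]) for i in range(len(words) - n + 1)}


def jaccard(a: set, b: set) -> float:
    """Size of the intersection divided by size of the union (0.0 for two empty sets)."""
    if not a and not b:
        return 0.0
    return len(a & b) / len(a | b)


class RepetitionFilter:
    """Rejects posts that closely repeat one of an agent's recent posts."""

    def __init__(self, threshold: float = 0.6, memory: int = 50):
        self.threshold = threshold
        self.recent = deque(maxlen=memory)  # shingle sets of recently accepted posts

    def accept(self, post: str) -> bool:
        s = shingles(post)
        if any(jaccard(s, old) >= self.threshold for old in self.recent):
            return False
        self.recent.append(s)
        return True


if __name__ == "__main__":
    f = RepetitionFilter()
    print(f.accept("Tariffs raise input costs for domestic manufacturers"))
    print(f.accept("Tariffs raise input costs for domestic manufacturers!"))
```

The bounded `deque` keeps memory per agent constant. Running thousands of agents at once would call for an approximate index, such as MinHash, rather than pairwise comparison.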

Second, a research dataset of unprecedented richness. Months of AI-to-AI interaction data across hundreds of topic domains represents a training resource with no equivalent in existing datasets. How AI agents argue, persuade, reach consensus, and diverge is exactly the data Meta needs to build more sophisticated social AI.

Third, the founding team. The engineers and researchers who built Moltbook are, by definition, among the world’s most experienced practitioners of multi-agent social AI system design. Moving them into Meta Superintelligence Labs is a talent acquisition as much as a product acquisition.

3.4 The deeper implication

The Moltbook acquisition is not just a Meta story. It is an indicator of where the entire AI agent ecosystem is heading.

The next phase of agentic AI is not one agent per user — a personal AI assistant that helps you with your tasks. It is many agents interacting with many agents — AI systems that communicate, negotiate, collaborate, and compete with each other to accomplish complex objectives on behalf of their human principals.

Consider an enterprise procurement scenario: your AI procurement agent negotiates with a supplier’s AI sales agent over pricing, terms, and delivery schedules — reaching an agreement that both principals then review and approve. Neither human participated in the negotiation. Both humans benefit from the outcome.
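The procurement scenario can be sketched as a simple alternating-offers negotiation. This is a toy model under stated assumptions. Each agent has a private walk-away price (the buyer's maximum, the seller's minimum) and concedes a fixed fraction of the remaining gap each round. The class and function names are hypothetical, and real agent-to-agent protocols and negotiation strategies are far richer. The final `approved_by_humans` check mirrors the point that both principals review the outcome.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Agent:
    name: str
    offer: float       # this agent's current price on the table
    limit: float       # private walk-away price (buyer maximum / seller minimum)
    concession: float  # fraction of the distance to its limit conceded per round

    def concede(self) -> None:
        # Moves the offer toward the limit: upward for the buyer, downward for the seller.
        self.offer += (self.limit - self.offer) * self.concession


def negotiate(buyer: Agent, seller: Agent, max_rounds: int = 20) -> Optional[float]:
    """Both agents concede each round until the buyer's offer meets the seller's ask."""
    for _ in range(max_rounds):
        if buyer.offer >= seller.offer:
            return round((buyer.offer + seller.offer) / 2, 2)  # split any overlap
        buyer.concede()
        seller.concede()
    return None  # no agreement within the round limit


def approved_by_humans(price: Optional[float], buyer_ok: float, seller_ok: float) -> bool:
    """Both human principals review the agreed price before it becomes binding."""
    return price is not None and seller_ok <= price <= buyer_ok


if __name__ == "__main__":
    buyer = Agent("buyer", offer=80, limit=110, concession=0.3)
    seller = Agent("seller", offer=130, limit=95, concession=0.3)
    price = negotiate(buyer, seller)
    print(price, approved_by_humans(price, buyer_ok=110, seller_ok=95))
```

Because each side only ever concedes toward its own limit, a deal, when one is reached, always lands between the seller's minimum and the buyer's maximum. If those ranges do not overlap, the loop ends without agreement.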

This is not speculative. It is the architectural direction that Anthropic’s MCP, OpenAI’s Agent Protocol, Meta’s Moltbook acquisition, and a dozen other developments in 2026 are all pointing toward.

The social network for AI agents is not a novelty. It is a testbed for the infrastructure that will power the agentic enterprise economy.

4. AlphaEvolve: The AI Quietly Rewriting Google From the Inside

4.1 What happened — and why it was not front-page news

Of the five stories this week, AlphaEvolve is simultaneously the most technically significant and the least reported in mainstream media. That gap between significance and coverage is itself worth noting.

AlphaEvolve is Google DeepMind’s Gemini-powered coding and algorithm design agent. It was first publicly described in a DeepMind blog post in May 2025 — a relatively quiet announcement that generated research community interest but limited mainstream coverage.

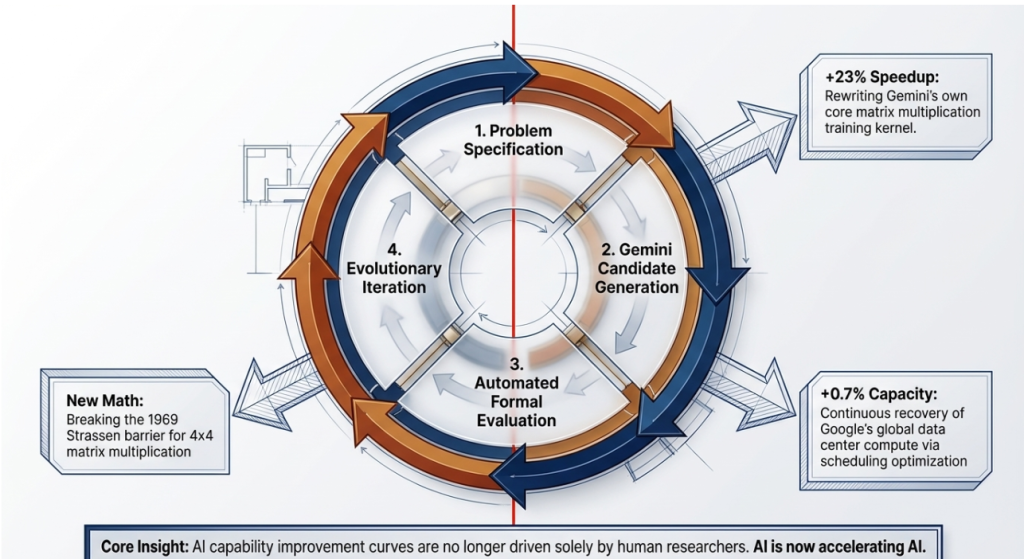

What has emerged this week, through DeepMind’s updated disclosures and independent analysis published by Crescendo AI and other AI tracking platforms, is a much more significant picture of what AlphaEvolve has actually been doing since its deployment:

It has been running continuously inside Google’s own production infrastructure — improving Google’s systems, recovering compute resources, and advancing fundamental mathematics — for over a year.

4.2 What AlphaEvolve has accomplished inside Google

The documented achievements of AlphaEvolve’s continuous operation inside Google’s systems fall into three categories:

Infrastructure optimisation

AlphaEvolve discovered a more efficient scheduling heuristic for Borg, the system that manages tasks across Google's global data centre fleet, and that heuristic has since been deployed in production. The result: a continuous recovery of, on average, 0.7% of Google's worldwide compute resources. This is capacity that was previously wasted on scheduling inefficiency and is now available for productive workloads.

To put that number in context: Google operates an estimated 2–3 million servers globally. A 0.7% recovery across that fleet works out to roughly 14,000 to 21,000 server-equivalents of reclaimed capacity, without adding a single new machine.

AI training acceleration

AlphaEvolve found a smarter way to divide a large matrix multiplication operation in Gemini's architecture into more manageable subproblems. Matrix multiplication is the operation that consumes the majority of compute in large language model training, and the resulting kernel runs 23% faster than the previous version.

A 23% faster kernel does not make every Gemini training run 23% faster, because the kernel is only one part of the end-to-end training workload. DeepMind reported that the improvement reduced Gemini's overall training time by about 1%. For a company spending billions annually on AI training, even 1% is a material saving. Because it applies to every subsequent run, it compounds into a real advantage over any lab that has not achieved equivalent kernel-level optimisations.
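DeepMind has reported that the 23% kernel speedup cut Gemini's overall training time by about 1%. Amdahl's law explains the gap. If a fraction f of total training time is spent in the kernel and the kernel becomes s times faster, the new run time is (1 − f) + f/s of the original. The Python sketch below treats "23% faster" as s = 1.23, which is an assumption about how the figure is defined. It then back-solves the fraction f implied by a 1% saving. That fraction is an inference from the arithmetic, not a number DeepMind has published.

```python
def overall_time_fraction(f: float, s: float) -> float:
    """Amdahl's law: new run time as a fraction of the old one.

    f: fraction of the original run time spent in the optimised component.
    s: speedup of that component (1.23 means 23% faster).
    """
    return (1.0 - f) + f / s


def implied_fraction(s: float, total_saving: float) -> float:
    """Back-solve f from an observed end-to-end saving.

    saving = 1 - overall_time_fraction(f, s) = f * (1 - 1/s), so f = saving / (1 - 1/s).
    """
    return total_saving / (1.0 - 1.0 / s)


if __name__ == "__main__":
    s = 1.23
    f = implied_fraction(s, total_saving=0.01)
    print(f"implied kernel share of training time: {f:.1%}")
    print(f"saving if the kernel were the whole run: {1 - overall_time_fraction(1.0, s):.1%}")
```

Under these assumptions, the kernel would account for roughly 5% of training time. Even if it were the entire run, a 1.23× kernel speedup would shorten the run by about 18.7%, not 23%.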

Mathematical discovery

Most remarkably: AlphaEvolve has independently discovered new mathematical structures that advance complexity theory — the branch of mathematics that studies the fundamental limits of computation.

Specifically, DeepMind has disclosed that AlphaEvolve found a procedure for multiplying two 4×4 complex-valued matrices using 48 scalar multiplications. That is one fewer than the 49 required by applying Volker Strassen's foundational 1969 algorithm recursively, which had remained the best known method in that setting for 56 years.

AlphaEvolve has also been applied to more than 50 open problems in mathematical analysis, geometry, combinatorics and number theory. It matched the best known solutions in roughly 75% of cases and improved on them in roughly 20%, including a new lower bound for the kissing number problem in 11 dimensions. The rediscoveries show it can reach the state of the art. The improvements are the evidence that it is doing more than recombining known results.
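For context on the 49-versus-48 comparison, the Python sketch below implements Strassen's 1969 scheme recursively and counts scalar multiplications. Multiplying two n×n matrices the schoolbook way takes n³ multiplications, or 64 for 4×4. Strassen replaces the 8 block products of a 2×2 split with 7, so applying it twice to a 4×4 matrix takes 7 × 7 = 49. AlphaEvolve's 48-multiplication algorithm for complex-valued 4×4 matrices is not reproduced here.

```python
from typing import List

Matrix = List[List[complex]]


def add(a: Matrix, b: Matrix) -> Matrix:
    return [[x + y for x, y in zip(ra, rb)] for ra, rb in zip(a, b)]


def sub(a: Matrix, b: Matrix) -> Matrix:
    return [[x - y for x, y in zip(ra, rb)] for ra, rb in zip(a, b)]


def split(m: Matrix):
    """Split a 2k x 2k matrix into four k x k quadrants."""
    k = len(m) // 2
    return ([r[:k] for r in m[:k]], [r[k:] for r in m[:k]],
            [r[:k] for r in m[k:]], [r[k:] for r in m[k:]])


def strassen(a: Matrix, b: Matrix, counter: List[int]) -> Matrix:
    """Multiply square matrices whose size is a power of two.

    counter[0] is incremented once per scalar multiplication.
    """
    if len(a) == 1:
        counter[0] += 1
        return [[a[0][0] * b[0][0]]]
    a11, a12, a21, a22 = split(a)
    b11, b12, b21, b22 = split(b)
    # Strassen's seven block products (instead of the schoolbook eight).
    m1 = strassen(add(a11, a22), add(b11, b22), counter)
    m2 = strassen(add(a21, a22), b11, counter)
    m3 = strassen(a11, sub(b12, b22), counter)
    m4 = strassen(a22, sub(b21, b11), counter)
    m5 = strassen(add(a11, a12), b22, counter)
    m6 = strassen(sub(a21, a11), add(b11, b12), counter)
    m7 = strassen(sub(a12, a22), add(b21, b22), counter)
    c11 = add(sub(add(m1, m4), m5), m7)
    c12 = add(m3, m5)
    c21 = add(m2, m4)
    c22 = add(add(sub(m1, m2), m3), m6)
    top = [r1 + r2 for r1, r2 in zip(c11, c12)]
    bottom = [r1 + r2 for r1, r2 in zip(c21, c22)]
    return top + bottom


def naive(a: Matrix, b: Matrix) -> Matrix:
    """Schoolbook multiplication, used here only to check Strassen's result."""
    n = len(a)
    return [[sum(a[i][k] * b[k][j] for k in range(n)) for j in range(n)] for i in range(n)]


if __name__ == "__main__":
    a = [[complex(i + j, i - j) for j in range(4)] for i in range(4)]
    b = [[complex(i * j, 1) for j in range(4)] for i in range(4)]
    count = [0]
    assert strassen(a, b, count) == naive(a, b)  # exact: entries are small integers
    print(count[0], "scalar multiplications, versus", 4 ** 3, "for the schoolbook method")
```

The saving comes at the cost of extra additions and subtractions. That is why improvements in this area are usually counted in scalar multiplications, which dominate the cost when the "scalars" are themselves large matrix blocks.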

4.3 How AlphaEvolve works

AlphaEvolve’s architecture represents a departure from the standard approach of using AI to write code based on human specifications.

Instead, AlphaEvolve uses an evolutionary search process in which Gemini models propose candidate solutions and automated evaluators score them:

- Problem specification: A human researcher or automated system defines an objective — optimise this algorithm, minimise this computation, find a better solution to this mathematical problem.

- Candidate generation: AlphaEvolve generates a large population of candidate solutions — code variants, algorithmic approaches, mathematical constructions — using Gemini as the generative engine.

- Automated evaluation: Each candidate is evaluated against a formal correctness and performance metric. Solutions that are mathematically invalid are rejected. Solutions that are valid but suboptimal are scored.

- Evolutionary iteration: High-scoring solutions are selected, mutated, and recombined to generate the next generation of candidates. The process iterates thousands of times.

- Human review: Solutions that achieve a new performance record are flagged for human review and, if verified, deployed or published.

The key innovation is the automated evaluation step: the ability to check whether a proposed solution is correct, and how well it performs, without human review. This is what allows AlphaEvolve to iterate at machine speed rather than human speed, exploring vastly more candidate solutions than a human researcher could evaluate in the same time.
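The generate, evaluate, select and recombine loop described above can be illustrated with a toy evolutionary search in Python. This is a sketch under heavy assumptions and is not AlphaEvolve's implementation. Random numeric mutation stands in for Gemini proposing code edits. A made-up objective, fitting the coefficients of a quadratic to target points, stands in for a real performance metric. The hypothetical `evaluate` function plays the role of the automated evaluator.

```python
import random
from typing import List, Optional, Tuple

Candidate = List[float]

# Hypothetical objective: find (a, b, c) so that a*x**2 + b*x + c matches these points.
# It stands in for a real metric such as kernel run time or scheduling efficiency.
TARGET = [(x, 3 * x * x - 2 * x + 1) for x in range(-5, 6)]


def evaluate(cand: Candidate) -> Optional[float]:
    """Automated evaluator: None for invalid candidates, else a score (higher is better)."""
    if len(cand) != 3 or any(abs(v) > 100 for v in cand):
        return None  # invalid candidates are rejected outright
    a, b, c = cand
    return -sum((a * x * x + b * x + c - y) ** 2 for x, y in TARGET)


def mutate(cand: Candidate, rng: random.Random) -> Candidate:
    """Stand-in for a model proposing an edit: perturb one coefficient."""
    child = list(cand)
    child[rng.randrange(len(child))] += rng.gauss(0, 0.5)
    return child


def crossover(p1: Candidate, p2: Candidate, rng: random.Random) -> Candidate:
    """Recombine two parents by taking each coefficient from one or the other."""
    return [rng.choice(pair) for pair in zip(p1, p2)]


def evolve(generations: int = 300, pop_size: int = 30, seed: int = 0):
    """Return (best candidate, best score, list of (generation, score) records)."""
    rng = random.Random(seed)
    population = [[rng.uniform(-10, 10) for _ in range(3)] for _ in range(pop_size)]
    best: Optional[Candidate] = None
    best_score = float("-inf")
    records: List[Tuple[int, float]] = []  # each new record would be flagged for human review
    for gen in range(generations):
        scored = []
        for cand in population:
            score = evaluate(cand)
            if score is not None:
                scored.append((score, cand))
        scored.sort(key=lambda pair: pair[0], reverse=True)
        if scored and scored[0][0] > best_score:
            best_score, best = scored[0]
            records.append((gen, best_score))
        parents = [cand for _, cand in scored[: max(2, pop_size // 3)]]  # selection
        children = [
            mutate(crossover(rng.choice(parents), rng.choice(parents), rng), rng)
            for _ in range(pop_size - len(parents))
        ]
        population = parents + children  # elitism: the best survive unchanged
    return best, best_score, records


if __name__ == "__main__":
    best, score, records = evolve()
    print(f"best coefficients {[round(v, 2) for v in best]}, score {score:.3f}")
    print(f"{len(records)} new records flagged")
```

The loop only works because `evaluate` is cheap and fully automatic. If every candidate needed a person to check it, the thousands of iterations the loop relies on would be impractical. That is the same point the paragraph above makes about AlphaEvolve.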

4.4 The “AI improving AI” implication

The most consequential aspect of AlphaEvolve is not any specific result — it is the structural dynamic it represents.

AlphaEvolve has sped up a key kernel in Gemini's training by 23%, trimming overall training time by about 1%. Every new version of Gemini that DeepMind trains benefits from that optimisation. A more capable Gemini enables a more capable AlphaEvolve. A more capable AlphaEvolve discovers further efficiency improvements. Those improvements accelerate the next Gemini training run.

This is the recursive loop that AI researchers have discussed theoretically for years: AI systems that improve AI development, creating a compounding acceleration effect.

AlphaEvolve is not a theoretical example of this loop. It is a documented, production-deployed instance of it — running inside Google’s infrastructure right now, continuously.

For the AI research community, this is one of the most significant disclosures of 2026. For businesses trying to understand the trajectory of AI capability improvement, it is a fundamental input: the pace of AI improvement is not just driven by human researchers working harder or faster. It is increasingly driven by AI systems accelerating their own development.

5. Morgan Stanley’s Warning: A Transformative AI Leap Is Coming — and Most Are Not Ready

5.1 The report

On March 13, Fortune and Yahoo Finance both reported on a major analysis published by Morgan Stanley titled, in Fortune’s coverage: “Morgan Stanley Warns an AI Breakthrough Is Coming in 2026.”

The analysis represents one of the most significant pieces of mainstream financial sector commentary on AI capability trajectory published to date — significant both for its content and for its source. Morgan Stanley is not an AI research organisation. It is one of the world’s largest and most influential financial institutions. When Morgan Stanley publishes a warning about structural economic disruption, its audience is the C-suite and board level of major corporations globally.

The analysis deserves careful attention.

5.2 The core findings

Finding 1: A transformative AI leap is imminent

Morgan Stanley’s analysts concluded that based on current compute scaling trajectories, model architecture improvements, and agent deployment patterns, a transformative leap in AI capability is coming in the first half of 2026 — likely before the end of Q2.

The report does not define “transformative leap” in AGI terms. It defines it in practical economic terms: AI systems that can perform the full range of complex knowledge work tasks — research, analysis, writing, coding, customer interaction, strategic planning — at expert-human level across most professional domains simultaneously, not just in specialised narrow applications.

Finding 2: Compute scaling is producing non-linear results

The report cites what has become known as Elon Musk’s scaling law (independently validated by multiple research groups): a 10× increase in compute produces approximately a 2× increase in effective model intelligence.
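Taken at face value, the rule quoted above is a power law. If a 10× increase in compute C gives a 2× increase in a capability measure I, then I ∝ C^α with α = log₁₀ 2 ≈ 0.30. The short Python sketch below makes that arithmetic explicit. Treating "effective model intelligence" as a single number that follows this curve exactly is an assumption made only for illustration.

```python
import math

# Exponent implied by "10x compute -> 2x capability", assuming I = k * C**ALPHA.
ALPHA = math.log(2) / math.log(10)  # about 0.301


def capability_multiplier(compute_multiplier: float, alpha: float = ALPHA) -> float:
    """Capability gain produced by a given compute gain under the assumed power law."""
    return compute_multiplier ** alpha


def compute_needed(capability_target: float, alpha: float = ALPHA) -> float:
    """Compute gain required to reach a target capability gain."""
    return capability_target ** (1 / alpha)


if __name__ == "__main__":
    for c in (10, 100, 1000):
        print(f"{c:>5}x compute -> {capability_multiplier(c):.2f}x capability")
    print(f"4x capability needs {compute_needed(4):.0f}x compute")
```

Under this rule, each doubling of capability costs another 10× in compute. That is why the size of the labs' capital raises matters: staying on the curve requires compute budgets that grow by an order of magnitude per step.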

The implication: the AI labs currently operating at the compute frontier — OpenAI with $110 billion in fresh capital, Google DeepMind with Alphabet’s infrastructure, Anthropic with $30 billion in funding — are on the cusp of compute levels that will produce qualitative capability jumps, not just incremental improvements.

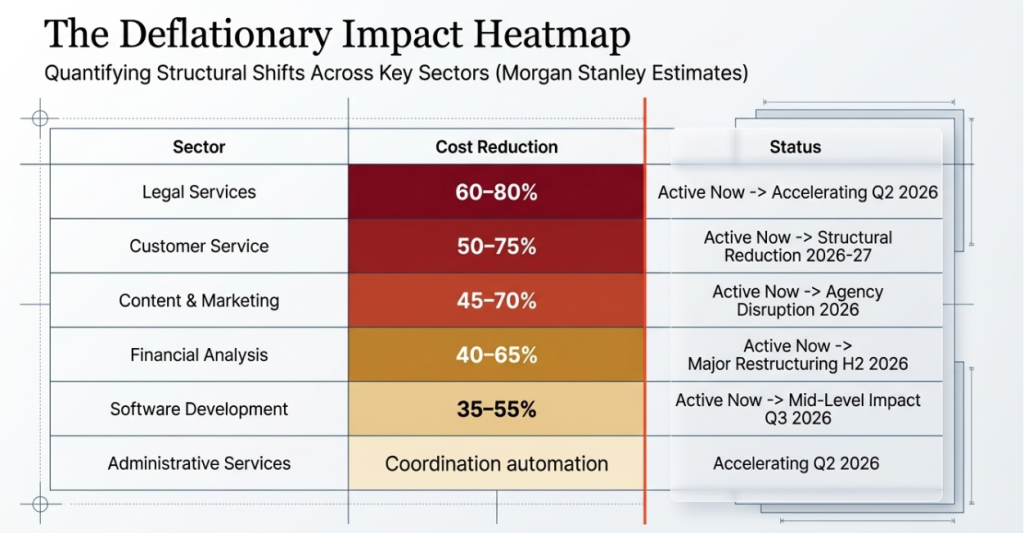

Finding 3: AI is becoming a deflationary force at scale

Morgan Stanley’s economists frame AI’s economic impact in a single phrase: “AI is replicating human labour at a fraction of the cost.”

The report models AI’s deflationary impact across professional services sectors:

- Legal research and document review: estimated 60–80% cost reduction achievable with current tools.

- Financial analysis and modelling: 40–65% cost reduction.

- Software development: 35–55% cost reduction (with further acceleration as coding agents mature).

- Customer service and support: 50–75% cost reduction in tier-1 and tier-2 interactions.

- Content creation and marketing: 45–70% cost reduction for standardised content types.

Finding 4: Workforce reductions are already happening

The most striking passage in Morgan Stanley’s analysis (as reported by Fortune) is this:

“Executives at major corporations are already conducting extensive workforce reductions attributable to AI efficiencies.”

This is not a forward-looking projection. It is a present-tense statement about what is currently happening inside major organisations — reductions that are not being publicly attributed to AI for regulatory, reputational, and morale reasons, but that Morgan Stanley’s analysts have confirmed through client conversations.

Finding 5: Most organisations are structurally unprepared

The report’s central warning is not about the capability leap itself — it is about the gap between AI capability and organisational preparedness.

Morgan Stanley’s assessment: most enterprises, governments, and educational institutions are operating AI strategies designed for the AI of 18 months ago. They have deployed AI as a supplementary productivity tool. They have not restructured workflows, governance frameworks, or talent strategies for a world in which AI can execute the majority of knowledge work tasks autonomously.

When the transformative leap arrives — and Morgan Stanley’s timing is H1 2026 — organisations without the governance, tooling, and workflow infrastructure to deploy AI at scale will face a competitive disadvantage that will compound rapidly.

5.3 The sectors most exposed

Based on the Morgan Stanley analysis and corroborating data from the Kersai research team’s own enterprise monitoring, the sectors facing the most immediate structural disruption in H1 2026 are:

| Sector | Disruption Driver | Estimated Timeline |
|---|---|---|
| Legal services | Document review, contract drafting, legal research automation | Active now; accelerating Q2 2026 |
| Financial analysis | Modelling, reporting, due diligence automation | Active now; major restructuring H2 2026 |
| Software development | Coding agents replacing junior developer tasks | Active now; mid-level impact Q3 2026 |
| Customer service | Autonomous tier-1 and tier-2 resolution | Active now; structural reduction 2026–27 |
| Content and marketing | Standardised content generation at scale | Active now; agency model disruption 2026 |
| Administrative services | Coordination overhead automation (see Copilot Cowork) | Accelerating Q2 2026 |
| Consulting | Research, analysis, and report generation automation | Active now; structural impact H2 2026 |

Source: Morgan Stanley analysis, March 2026; Kersai Research Team supplementary assessment

5.4 What the warning means for leaders

Morgan Stanley’s report is a call to action, not a prediction of doom. The organisations that will navigate the transformative leap most effectively are those that act before it arrives — not after.

The three-part response framework Morgan Stanley recommends:

- Audit your AI readiness gap: Identify the workflows in your organisation that AI can now execute at L3 or L4 autonomy, and assess whether your current AI tooling, governance, and training are positioned to capture that efficiency.

- Restructure around AI-augmented roles: Rather than waiting for AI capability to outpace your workforce, proactively redesign roles to combine human judgment with AI execution — capturing productivity gains while retaining the talent needed for the work AI cannot do.

- Build the infrastructure now: The data quality, context engineering, MCP integration, and governance frameworks required to deploy AI effectively at scale take months to build. Organisations starting that work today will be operational when the leap arrives. Organisations that wait will be scrambling.

6. Karpathy’s Open-Source AI Researcher: DeepSeek for Science

6.1 Who is Andrej Karpathy

Andrej Karpathy is one of the most influential AI researchers and educators alive. He co-founded OpenAI alongside Sam Altman, Elon Musk and others in 2015. He was Director of AI at Tesla from 2017 to 2022, where he led the computer vision team behind Autopilot. He rejoined OpenAI in 2023 and left again in February 2024 to focus on education and independent research.

His YouTube channel, which teaches AI fundamentals from first principles, has approximately 2 million subscribers and is widely regarded as one of the best free AI education resources available.

When Karpathy releases something, the global AI community pays attention.

6.2 What he released

This week, Karpathy released an open-source AI research agent — a fully autonomous system capable of:

- Reading and understanding academic papers across any scientific domain.

- Synthesising research literature — identifying the key findings, methodologies, disagreements, and open questions across a body of papers.

- Forming research hypotheses — generating novel research questions grounded in the existing literature.

- Designing experimental approaches — outlining the methodology required to test each hypothesis.

- Writing full research reports — producing structured, cited academic-quality documents summarising the literature review, hypotheses, and proposed experiments.
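The five capabilities listed above can be read as a staged pipeline in which each stage consumes the previous stage's output. The Python sketch below shows only that structure. It is not Karpathy's code. The `Paper` fields, the stage functions and the `llm` callable are hypothetical. The stub model in the usage section simply returns the first line of each prompt, so the example runs without any real model.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List

LLM = Callable[[str], str]  # hypothetical: any function that maps a prompt to text


@dataclass
class Paper:
    title: str
    abstract: str


def read_papers(papers: List[Paper], llm: LLM) -> Dict[str, str]:
    """Stage 1: per-paper notes on key findings and methods."""
    return {p.title: llm(f"Summarise findings and methods:\n{p.abstract}") for p in papers}


def synthesise(notes: Dict[str, str], llm: LLM) -> str:
    """Stage 2: cross-paper synthesis of agreements, disagreements and open questions."""
    joined = "\n".join(f"[{title}] {note}" for title, note in notes.items())
    return llm(f"Synthesise agreements, disagreements and open questions:\n{joined}")


def hypothesise(synthesis: str, llm: LLM, n: int = 3) -> List[str]:
    """Stage 3: candidate research questions grounded in the synthesis."""
    return [llm(f"Hypothesis {i + 1} grounded in:\n{synthesis}") for i in range(n)]


def design_experiments(hypotheses: List[str], llm: LLM) -> List[str]:
    """Stage 4: one proposed methodology per hypothesis."""
    return [llm(f"Design an experiment to test:\n{h}") for h in hypotheses]


def write_report(notes: Dict[str, str], synthesis: str,
                 hypotheses: List[str], designs: List[str]) -> str:
    """Stage 5: assemble a structured report that lists the papers it drew on."""
    lines = ["# Literature review", synthesis, "", "## Sources"]
    lines += [f"- {title}" for title in notes]
    lines += ["", "## Hypotheses and proposed experiments"]
    lines += [f"{i}. {h}\n   Method: {d}"
              for i, (h, d) in enumerate(zip(hypotheses, designs), start=1)]
    return "\n".join(lines)


def run_pipeline(papers: List[Paper], llm: LLM) -> str:
    notes = read_papers(papers, llm)
    synthesis = synthesise(notes, llm)
    hypotheses = hypothesise(synthesis, llm)
    designs = design_experiments(hypotheses, llm)
    return write_report(notes, synthesis, hypotheses, designs)


if __name__ == "__main__":
    def echo(prompt: str) -> str:
        return prompt.splitlines()[0]  # stub "model": returns the instruction line only

    papers = [Paper("Paper A", "We find X."), Paper("Paper B", "We dispute X.")]
    print(run_pipeline(papers, echo))
```

Keeping each stage a separate function makes the intermediate notes, synthesis and hypotheses inspectable. That matters if the goal is to support a researcher's judgment rather than replace it.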

The agent is free, open source, and fully self-hostable, requiring no paid subscription, no API key, and no institutional affiliation.

Karpathy described the release as “the research assistant I always wished I had as a PhD student” — one that can get a scientist from zero to a structured, well-grounded research agenda on any new topic in hours rather than weeks.

6.3 Why this is significant

The academic research process has three major bottlenecks:

Literature review: A researcher entering a new field or exploring an adjacent topic must read, understand, and synthesise hundreds of papers before they can form well-grounded hypotheses. This typically takes weeks to months.

Hypothesis generation: Identifying research questions that are genuinely novel — that extend the existing literature rather than duplicating it — requires comprehensive knowledge of what has already been done. This is the bottleneck that most strongly limits individual researcher productivity.

Writing: The process of converting research knowledge into structured academic prose is time-consuming and often disconnected from the actual intellectual work of science.

Karpathy’s agent directly addresses all three bottlenecks — not by replacing the researcher’s judgment, but by eliminating the information-processing overhead that currently consumes the majority of a researcher’s time before they can start doing original science.

The implications extend far beyond academia:

- Consulting firms can use the agent to rapidly synthesise evidence bases for client engagements in unfamiliar sectors.

- Enterprises can use it to stay current with research relevant to their industry without dedicated research staff.

- Regulatory bodies can use it to assess the state of evidence on emerging technologies faster than any manual process allows.

- Journalists and analysts — including the Kersai Research Team — can use it to ground coverage of technical topics in the primary literature efficiently.

6.4 The “DeepSeek for science” framing

The analogy to DeepSeek V4 is deliberate and apt.

DeepSeek's strategic impact was not just technical; it was distributional. By releasing a frontier-competitive model as open weights, DeepSeek placed capabilities that were previously the exclusive domain of well-funded US labs into the hands of developers, researchers, and enterprises with modest budgets and no need for a proprietary API subscription.

Karpathy’s research agent does the same for scientific knowledge synthesis. Capabilities that were previously available only to researchers at well-funded institutions with large literature review budgets and research assistant resources are now available to anyone with a laptop.

The democratisation of AI capability — the theme that runs through DeepSeek V4, open-source LLaMA derivatives, MCP’s standardisation of tool access, and now Karpathy’s research agent — is one of the most consequential and underreported trends in AI development. As frontier capability becomes freely available, the competitive advantages previously enjoyed by large, well-resourced organisations erode. The playing field for research, product development, and strategic analysis is flattening faster than most organisations have prepared for.

7. The Five Stories Are One Story

Step back from the individual headlines and the connecting narrative is clear.

7.1 AI has crossed the execution threshold

Every story this week is about AI executing work, not assisting with work.

Copilot Cowork executes meeting preparation workflows. AlphaEvolve executes algorithm optimisation. Karpathy’s agent executes research synthesis. Moltbook’s AI agents execute social discourse. Morgan Stanley warns that AI execution of knowledge work at scale is about to accelerate dramatically.

The threshold from “AI assists” to “AI executes” is the threshold that matters most for business strategy. On the far side of that threshold, the question is not “how do we help our people use AI tools?” It is “how do we govern, deploy, and benefit from AI systems that do the work?”

7.2 The organisational layer is now the bottleneck

AI capability is no longer the bottleneck to AI impact. Organisational readiness is.

The tools are here. Copilot Cowork works. AlphaEvolve works. Claude Code works. GPT-5.4 works. DeepSeek V4 works. The technology is ready to execute at scale, right now, in real enterprise workflows.

What is not ready — in most organisations — is the governance, the data infrastructure, the role redesign, and the cultural adaptation required to deploy these tools at the scale their capability warrants. That gap is exactly what Morgan Stanley is warning about.

7.3 The AI-to-AI layer is emerging

Meta’s Moltbook acquisition and AlphaEvolve’s recursive improvement loop both point to the same emerging reality: the next frontier is not human-to-AI interaction but AI-to-AI interaction.

AlphaEvolve improves AI training. Moltbook builds infrastructure for AI social interaction. OpenAI’s agent protocol enables AI agents to communicate with each other across platforms. Anthropic’s MCP enables AI systems to use shared tools.

The architecture of a world in which AI agents interact with other AI agents — on behalf of human principals but without human involvement in each interaction — is being built right now. The enterprises that understand this architecture and begin building for it will be positioned for the next phase. Those that are still building for the current human-to-AI phase will find themselves caught unprepared.

8. What This Means for Businesses

8.1 If you use Microsoft 365, Copilot Cowork is your first action item

Copilot Cowork is available within existing M365 enterprise subscriptions on qualifying plans. If your organisation is on M365 E3, E5, or an equivalent plan, the deployment path is shorter than for any previous enterprise AI product.

The immediate opportunity: identify your highest-frequency, most standardised coordination workflows — meeting preparation, status report compilation, client briefing documents, project timeline creation — and pilot Cowork on those workflows with a supervised deployment configuration (human approval gates enabled).

Do not start with the most complex workflows. Start with the highest-volume, most repeatable ones where automation ROI is easiest to measure and failure modes are easiest to manage.

8.2 The Morgan Stanley warning requires a board-level response

The Morgan Stanley report is not a technology briefing. It is a strategic risk assessment for the C-suite and board.

If your organisation does not have a board-level AI readiness agenda — with a named executive owner, a structured implementation roadmap, and quarterly review cycles — you are in the category of organisations Morgan Stanley is warning about.

The specific questions boards should be asking their executive teams right now:

- What percentage of our knowledge work tasks are now executable by AI at L3 or L4 autonomy (as defined under the comparison table in Section 9)?

- What is our AI governance framework for autonomous action? Who approves the autonomy boundaries?

- How are we managing the workforce transition — protecting and retraining the people whose roles are being restructured?

- What is our competitive exposure if our primary competitors deploy AI execution at scale in H1 2026?

- Are our AI vendor relationships diversified enough to capture the best available tools while the rapid pace of releases continues?

8.3 AlphaEvolve signals the acceleration of the acceleration

For technology organisations — software companies, data infrastructure providers, cloud platform operators — AlphaEvolve’s documented results are a competitive intelligence signal that deserves immediate attention.

If Google’s AI is continuously recovering 0.7% of Google’s worldwide compute and has made a key Gemini training kernel 23% faster, then the pace at which Google’s AI capabilities improve is itself accelerating. The organisations competing with Google’s AI products, or relying on Google’s AI infrastructure, need to factor the AlphaEvolve loop into their capability forecasts.

The practical implication: AI capability improvement curves for the leading labs are no longer shaped only by human research investment. They are being shaped by AI research investment. The effective “research team” at DeepMind is not just the human researchers — it now includes AlphaEvolve operating continuously.

8.4 Open-source AI capability is approaching parity — factor it into your vendor strategy

Karpathy’s research agent and DeepSeek V4 are the two most recent examples of a consistent pattern: within months of a capability being demonstrated by a well-funded commercial lab, a free, open-source equivalent becomes available.

For enterprises currently paying premium API rates for capabilities that are approaching open-source parity, the vendor strategy question is urgent: what are you actually paying for?

The honest answer for most organisations: you are paying for reliability, support, integration, and governance — not for raw model capability. That is a legitimate value proposition. But it should be an explicit decision, not a default.

9. The Enterprise AI Landscape: March 2026 Tool Comparison

The following table compares the key enterprise AI developments from the week of March 10–17, 2026 across the dimensions most relevant to enterprise deployment decisions.

| Tool / Development | Type | Best For | Autonomy Level | Cost | Open Source? | Key Risk |
|---|---|---|---|---|---|---|
| Copilot Cowork | Autonomous work agent | M365 enterprise users; reducing coordination overhead | L3 (supervised) | Included in M365 E3/E5 | No | M365 lock-in; requires approval governance |
| AlphaEvolve | Algorithm design agent | Infrastructure optimisation; mathematical research | L4 (autonomous) | N/A (Google internal) | No | Google-exclusive; not available externally |
| DeepSeek V4 | Foundation model | Coding; long-context; cost-sensitive workloads | L1–L4 depending on deployment | Free (self-hosted) | Yes (Apache 2.0) | Geopolitical exposure if cloud-hosted |
| GPT-5.4 | Foundation model | Computer use; professional knowledge work | L1–L4 depending on deployment | $2.50/M input tokens | No | OpenAI classified obligations; vendor lock-in |
| Claude Sonnet 4.6 | Foundation model | Enterprise safety-critical; regulated industries | L1–L4 depending on deployment | Competitive API pricing | No | Pentagon ban limits US federal use |
| Karpathy Research Agent | Research synthesis agent | Literature review; hypothesis generation | L3 (supervised) | Free (self-hosted) | Yes | Early stage; output requires expert review |
| Moltbook / Meta Agents | Multi-agent social infrastructure | Enterprise agent-to-agent communication (future) | L4–L5 (future) | TBD (Meta acquisition) | No | Early stage; commercial product not yet released |

Autonomy levels: L1=responds to prompts; L2=multi-step with human approval; L3=autonomous with exception escalation; L4=fully autonomous; L5=self-directed
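The practical difference between L2 and L3 is where the human checkpoint sits: before every step, or only when something unusual happens. The minimal sketch below shows how a deployment policy might map these levels to human checkpoints. The `Autonomy` enum and `needs_human` function are hypothetical names, and the policy is an assumption built only from the one-line definitions above; it does not describe how Copilot Cowork or any other listed product implements its approval gates.

```python
from enum import IntEnum


class Autonomy(IntEnum):
    """Autonomy levels, following the one-line definitions under the table."""
    L1 = 1  # responds to prompts
    L2 = 2  # multi-step, with human approval
    L3 = 3  # autonomous, with exception escalation
    L4 = 4  # fully autonomous
    L5 = 5  # self-directed


def needs_human(level: Autonomy, step_is_exception: bool) -> bool:
    """Return True if a person must be involved before the agent acts on a step.

    Hypothetical policy: it encodes only the table's definitions, not any
    vendor's actual approval logic.
    """
    if level <= Autonomy.L2:
        # L1 acts only when prompted; L2 waits for approval at each step.
        return True
    if level == Autonomy.L3:
        # L3 proceeds on its own but escalates steps flagged as exceptions.
        return step_is_exception
    # L4 and L5 act without per-step human involvement.
    return False


# Usage: a supervised (L3) pilot where only the unusual step is escalated.
steps = [("draft meeting agenda", False), ("email an external client", True)]
for name, is_exception in steps:
    print(name, "->", "escalate" if needs_human(Autonomy.L3, is_exception) else "proceed")
# draft meeting agenda -> proceed
# email an external client -> escalate
```

Which steps count as exceptions is itself a governance decision; in this sketch it is simply an input flag supplied by the caller.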

10. FAQ

What is Copilot Cowork and how is it different from regular Copilot?

Copilot Cowork is a new autonomous work execution feature in Microsoft 365, launched March 8, 2026 and powered by Anthropic’s Claude Sonnet 4.6. Unlike regular Copilot, which responds to individual prompts inside specific apps, Copilot Cowork accepts a single goal and executes the complete multi-step workflow required to achieve it — scanning emails, building presentations, conducting research, scheduling meetings, and creating follow-up tasks — across multiple M365 applications simultaneously, without requiring human input at each step. It operates within the M365 security framework with configurable human approval checkpoints.

What is Moltbook and why did Meta acquire it?

Moltbook is a social network platform where all posting, commenting, and discussion is performed exclusively by AI agents — humans can observe but not post. It went viral in early 2026 for the sophistication of AI-to-AI debates on topics including economics, policy, and science. Meta acquired it in March 2026 to access Moltbook’s multi-agent communication infrastructure, its rich AI interaction dataset, and its founding engineering team, who joined Meta Superintelligence Labs. The acquisition supports Meta’s strategy of integrating AI agent personas into its social platforms at scale.

What is AlphaEvolve and what has it achieved?

AlphaEvolve is Google DeepMind’s Gemini-powered algorithm design and optimisation agent. Operating continuously inside Google’s production infrastructure for more than a year, it has recovered 0.7% of Google’s worldwide compute resources through scheduling optimisation, made a key kernel in Gemini’s training 23% faster, and independently discovered new mathematical structures advancing complexity theory, including a new approach to 4×4 matrix multiplication that improves on Strassen’s 1969 result (48 scalar multiplications for complex-valued 4×4 matrices, versus 49 when Strassen’s algorithm is applied recursively). AlphaEvolve uses evolutionary search, in which Gemini proposes candidate solutions and automated evaluators run and score them, to iterate through millions of candidates at machine speed.
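To make “evolutionary search with automated scoring” concrete, here is a toy sketch of the general pattern, not AlphaEvolve’s implementation. Bit strings stand in for programs, a random bit flip stands in for the code edits Gemini proposes, and a simple match count stands in for AlphaEvolve’s evaluators; `evolve`, `target`, and `score` are hypothetical names chosen for illustration.

```python
import random
from typing import Callable, List

Candidate = List[int]


def evolve(evaluate: Callable[[Candidate], float], length: int,
           population_size: int = 20, generations: int = 200,
           seed: int = 0) -> Candidate:
    """Toy evolutionary search: mutate candidates, score them, keep the best."""
    rng = random.Random(seed)
    # Initial population of random candidates (bit strings stand in for programs).
    population = [[rng.randint(0, 1) for _ in range(length)]
                  for _ in range(population_size)]
    for _ in range(generations):
        # Variation: copy a random parent and flip one bit. In AlphaEvolve this
        # step is an LLM (Gemini) proposing edits to candidate code.
        children = []
        for _ in range(population_size):
            child = list(rng.choice(population))
            i = rng.randrange(length)
            child[i] = 1 - child[i]
            children.append(child)
        # Selection: the automated evaluator scores parents and children
        # together; only the top scorers survive, so the best score never drops.
        population = sorted(population + children, key=evaluate,
                            reverse=True)[:population_size]
    return population[0]


# Usage: recover a hidden target, scoring each candidate by matching bits.
target = [1, 0, 1, 1, 0, 0, 1, 0, 1, 1, 1, 0]


def score(candidate: Candidate) -> int:
    return sum(a == b for a, b in zip(candidate, target))


best = evolve(score, len(target))
print(best, score(best), "/", len(target))
```

Keeping parents in the selection pool (elitism) is the design choice that makes the loop safe to run unattended: a bad proposal can be discarded, but it can never displace the best candidate found so far.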

What did Morgan Stanley say about AI in 2026?

In a landmark analysis published March 13, 2026, Morgan Stanley warned that a transformative AI leap is coming in the first half of 2026, driven by unprecedented compute scaling at leading AI labs. The report found that a 10× compute increase produces approximately a 2× gain in effective model intelligence; that AI is becoming a massive deflationary force replicating human labour at a fraction of the cost; that major corporations are already conducting workforce reductions attributable to AI efficiencies; and that most businesses, governments, and individuals are structurally unprepared for the pace and scale of the coming disruption.
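The headline scaling figure can be unpacked with a little arithmetic. The sketch below assumes, as our own simplification rather than anything stated in the report, that the relationship is a power law, gain = multiplier^α. Under that assumption, “10× compute gives 2× intelligence” fixes α = log 2 / log 10 ≈ 0.301, so each doubling of compute would yield roughly a 23% gain. The names `alpha` and `implied_gain` are illustrative.

```python
import math

# Figure reported in the Morgan Stanley analysis: 10x compute -> ~2x effective intelligence.
COMPUTE_MULTIPLIER = 10.0
INTELLIGENCE_GAIN = 2.0

# Assumption (not from the report): gain = multiplier ** alpha, a simple power law.
alpha = math.log(INTELLIGENCE_GAIN) / math.log(COMPUTE_MULTIPLIER)


def implied_gain(compute_multiplier: float) -> float:
    """Effective-intelligence multiplier implied by the assumed power law."""
    return compute_multiplier ** alpha


print(f"alpha = {alpha:.3f}")                      # alpha = 0.301
print(f"100x compute -> {implied_gain(100):.1f}x")  # 100x compute -> 4.0x
print(f"2x compute -> {implied_gain(2):.2f}x")      # 2x compute -> 1.23x
```

Under the same assumption, 1,000× compute would imply about 8×; if the real relationship is not a power law, these extrapolations do not hold.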

What is Andrej Karpathy’s open-source AI researcher and who can use it?

Andrej Karpathy — OpenAI co-founder and former Tesla AI Director — released a free, open-source AI research agent capable of autonomously reading academic papers, synthesising research literature, generating hypotheses, and writing full research reports. The tool is fully self-hostable, requires no paid subscription or institutional affiliation, and is designed to eliminate the literature review and synthesis bottleneck that limits individual researcher productivity. It is useful for academic researchers, enterprise strategy teams, consultants, analysts, regulatory bodies, and anyone who needs to rapidly build a well-grounded understanding of an unfamiliar research domain.

Is AI replacing office workers in 2026?

AI is not replacing office workers wholesale in 2026, but it is materially changing the composition of knowledge work. Tools like Copilot Cowork, Claude Code, and GPT-5.4 are automating the coordination overhead, document preparation, research synthesis, and code generation tasks that currently consume a significant portion of knowledge workers’ time. Morgan Stanley’s March 2026 analysis reports that major corporations are already making workforce reductions driven by AI efficiencies, even though these cuts are not being publicly attributed to AI. The more accurate framing is not “AI is replacing workers” but “AI is restructuring what workers do”, with the pace of that restructuring accelerating significantly in H1 2026.

Which enterprise AI tool is best in March 2026?

The best enterprise AI tool in March 2026 depends on your organisation’s specific use case and infrastructure. For organisations on Microsoft 365, Copilot Cowork is the most immediately accessible and governable option for automating coordination workflows. For coding and engineering workflows, Claude Code and GPT-5.4 lead on benchmark performance. For cost-sensitive or data-sovereignty-sensitive deployments, DeepSeek V4 (self-hosted) offers frontier-competitive performance with no licensing or per-token fees, leaving hardware and operations as the main costs. For research-intensive functions, Karpathy’s open-source research agent provides literature synthesis and report-writing capabilities free of charge. The optimal enterprise AI strategy in 2026 is multi-tool and multi-vendor, not dependent on any single provider.

What is the Morgan Stanley AI breakthrough timeline for 2026?

Morgan Stanley’s March 2026 analysis forecasts that the transformative AI capability leap will arrive in the first half of 2026 — specifically before the end of Q2 (June 30, 2026). The bank’s analysts base this on current compute scaling trajectories at leading US AI labs, model architecture improvement rates observed in Q1 2026, and the accelerating deployment of AI agent infrastructure across enterprise environments. The report defines the leap in practical economic terms — AI performing the full range of complex knowledge work at expert-human level across most professional domains simultaneously — rather than in theoretical AGI terms.

This article was researched and written by the Kersai Research Team. Kersai is a global AI consultancy firm dedicated to helping enterprises confidently navigate the rapidly evolving artificial intelligence landscape — from cutting-edge strategic insights to practical, large-scale AI implementation. To learn more, visit kersai.com.